Post 239: May 20 JDN 2458259

This post is one of those where I’m trying to sort out my own thoughts on an ongoing research project, so it’s going to be a bit more theoretical than most, but I’ll try to spare you the mathematical details.

People often change their minds about things; that should be obvious enough. (Maybe it’s not as obvious as it might be, as the brain tends to erase its prior beliefs as wastes of data storage space.)

Most of the ways we change our minds are fairly minor: We get corrected about Napoleon’s birthdate, or learn that George Washington never actually chopped down any cherry trees, or look up the actual weight of an average African elephant and are surprised.

Sometimes we change our minds in larger ways: We realize that global poverty and violence are actually declining, when we thought they were getting worse; or we learn that climate change is actually even more dangerous than we thought.

But occasionally, we change our minds in an even more fundamental way: We actually change what we care about. We convert to a new religion, or change political parties, or go to college, or just read some very compelling philosophy books, and come out of it with a whole new value system.

Often we don’t anticipate that our values are going to change. That is important and interesting in its own right, but I’m going to set it aside for now, and look at a different question: What about the cases where we know our values are going to change?

Can it ever be rational for someone to choose to adopt a new value system?

Yes, it can—and I can put quite tight constraints on precisely when.

Here’s the part where I hand-wave the math, but imagine for a moment there are only two goods in the world that anyone would care about. (This is obviously vastly oversimplified, but it’s easier to think in two dimensions to make the argument, and it generalizes to n dimensions easily from there.) Maybe you choose a job caring only about money and integrity, or design policy caring only about security and prosperity, or choose your diet caring only about health and deliciousness.

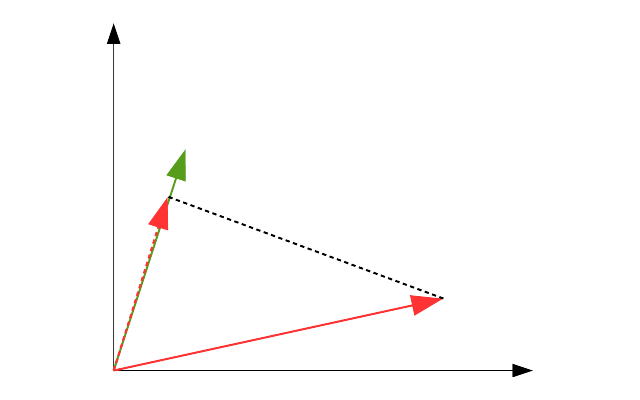

I can then represent your current state as a vector, a two-dimensional object with a length and a direction. The length describes how happy you are with your current arrangement. The direction describes your values: it characterizes the trade-off in your mind of how much you care about each of the two goods. If your vector points almost entirely along health, you don’t much care about deliciousness. If it points mostly at integrity, money isn’t that important to you.

This diagram shows your current state as a green vector.

Now suppose you have the option of taking some action that will change your value system. If that’s all it would do and you know that, you wouldn’t accept it. You will be no better off, and your value system will be different, which is bad from your current perspective. So here, you would not choose to move to the red vector:

But suppose that the action would change your value system, and make you better off. Now the red vector is longer than the green vector. Should you choose the action?

It’s not obvious, right? From the perspective of your new self, you’ll definitely be better off, and that seems good. But your values will change, and maybe you’ll start caring about the wrong things.

I realized that the right question to ask is whether you’ll be better off from your current perspective. If you and your future self both agree that this is the best course of action, then you should take it.

The really cool part is that (hand-waving the math again) it’s possible to work this out as a projection of the new vector onto the old vector. A large change in values will be reflected as a large angle between the two vectors; to compensate for that you need a large change in length, reflecting a greater improvement in well-being.

If the projection of the new vector onto the old vector is longer than the old vector itself, you should accept the value change.

If the projection of the new vector onto the old vector is shorter than the old vector, you should not accept the value change.

This captures the trade-off between increased well-being and changing values in a single number. It fits the simple intuitions that being better off is good, and changing values more is bad—but more importantly, it gives us a way of directly comparing the two on the same scale.
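To make the rule concrete, here is a minimal sketch in Python, assuming each state is just a tuple of numbers (one per good). The function names `scalar_projection` and `should_accept` are my own labels for illustration, and treating the exact tie as a rejection is an assumption, since the rule above only covers "longer" and "shorter".

```python
import math

def scalar_projection(new, old):
    """Length of the component of `new` that lies along the direction of `old`."""
    dot = sum(n * o for n, o in zip(new, old))
    return dot / math.sqrt(sum(o * o for o in old))

def should_accept(old, new):
    """Accept the value change only if the projection of `new` onto `old`
    is longer than `old` itself (a tie counts as a rejection: an assumption)."""
    old_length = math.sqrt(sum(o * o for o in old))
    return scalar_projection(new, old) > old_length

old = (3.0, 4.0)                    # current state, length 5
better_and_similar = (6.0, 4.0)     # small change in direction, clearly longer
longer_but_different = (8.0, -1.0)  # longer than `old`, but points somewhere else

print(should_accept(old, better_and_similar))    # projection 6.8 vs. length 5
print(should_accept(old, longer_but_different))  # projection 4.0 vs. length 5
```

The second case is the interesting one: the new vector is longer, so your future self would be happier, but too little of that improvement lies along what you currently care about.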

This is a very simple model with some very profound implications. One is that certain value changes are impossible in a single step: If a value change would require you to take on values that are completely orthogonal or diametrically opposed to your own, no increase in well-being will be sufficient.

It doesn’t matter how long I make this red vector, the projection onto the green vector will always be zero. If all you care about is money, no amount of integrity will entice you to change.
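A quick numerical check of that claim, as a sketch: the axes (money first, integrity second) and the helper name `projection_onto` are assumptions made for illustration.

```python
import math

def projection_onto(old, new):
    """Scalar projection of `new` onto the direction of `old`."""
    dot = sum(n * o for n, o in zip(new, old))
    return dot / math.sqrt(sum(o * o for o in old))

money_only = (1.0, 0.0)  # cares about money and nothing else

# Offer more and more integrity with no money attached (orthogonal values).
orthogonal = [projection_onto(money_only, (0.0, amount))
              for amount in (1.0, 1e3, 1e9)]

# Offer a state that points the opposite way (diametrically opposed values).
opposed = [projection_onto(money_only, (-amount, 0.0))
           for amount in (1.0, 1e3, 1e9)]

print(orthogonal)  # never exceeds the old length of 1
print(opposed)     # gets worse as the new vector gets longer
```

In the opposed case the projection is negative, so making the new vector longer only moves it further from acceptance.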

But a value change that was impossible in a single step can be feasible, even easy, if conducted over a series of smaller steps. Here I’ve taken that same impossible transition, and broken it into five steps that now make it feasible. By offering a bit more money for more integrity, I’ve gradually weaned you into valuing integrity above all else:
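The same five-step idea can be sketched in code. The specific numbers here are assumptions chosen for illustration: five equal rotations of 18 degrees, each paired with a 6% gain in length, which is just enough to beat the 1/cos(18°) ≈ 1.0515 needed for each step to pass the projection test.

```python
import math

def accepts(old, new):
    """Projection rule: accept if the projection of `new` onto `old`
    is longer than `old` itself."""
    dot = new[0] * old[0] + new[1] * old[1]
    old_length = math.hypot(old[0], old[1])
    return dot / old_length > old_length

def rotate_and_scale(v, angle, scale):
    """Rotate a 2-D vector counterclockwise by `angle` radians, then scale it."""
    c, s = math.cos(angle), math.sin(angle)
    return (scale * (c * v[0] - s * v[1]), scale * (s * v[0] + c * v[1]))

start = (1.0, 0.0)               # all money, no integrity
steps = 5
angle = (math.pi / 2) / steps    # 18 degrees per step
growth = 1.06                    # assumed per-step gain in well-being

path = [start]
for _ in range(steps):
    path.append(rotate_and_scale(path[-1], angle, growth))

step_ok = [accepts(a, b) for a, b in zip(path, path[1:])]
direct_ok = accepts(start, path[-1])

print(step_ok)    # each small step passes from the perspective of the step before
print(direct_ok)  # the same endpoint, offered in one jump, does not
```

Note that each step is judged against the vector just before it, not against the original one; that shifting reference point is what makes the sequence possible.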

This provides a formal justification for the intuitive sense many people have of a “moral slippery slope” (commonly regarded as a fallacy). If you make small concessions to an argument that end up changing your value system slightly, and continue to do so many times, you could end up with radically different beliefs at the end, even diametrically opposed to your original beliefs. Each step was rational at the time you took it, but because you changed yourself in the process, you ended up somewhere you would not have wanted to go.

This is not necessarily a bad thing, however. If the reason you made each of those changes was actually a good one—you were provided with compelling evidence and arguments to justify the new beliefs—then the whole transition does turn out to be a good thing, even though you wouldn’t have thought so at the time.

This also allows us to formalize the notion of “inferential distance”: the inferential distance is the number of steps of value change required to make someone understand your point of view. It’s a function of both the difference in values and the difference in well-being between their point of view and yours.
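One possible way to turn that into a number, offered only as a sketch and not as the definition I use in the research itself: assume the change is split into equal steps, each rotating by the same angle and growing in length by the same factor, and count the fewest steps for which every step passes the projection test. The function name `min_steps` and the `max_steps` cutoff are hypothetical.

```python
import math

def min_steps(total_angle, length_ratio, max_steps=1000):
    """Fewest equal steps that make every step acceptable, or None.

    total_angle  -- angle in radians between the two value systems
    length_ratio -- target length divided by starting length
    Assumes equal rotation and equal proportional growth per step.
    """
    for n in range(1, max_steps + 1):
        growth = length_ratio ** (1.0 / n)
        # A step passes when its projection exceeds the previous length:
        # growth * cos(angle per step) > 1.
        if growth * math.cos(total_angle / n) > 1.0:
            return n
    return None

print(min_steps(math.pi / 2, 1.06 ** 5))  # orthogonal values, modest total gain
print(min_steps(0.0, 1.1))                # same values, simply better off
print(min_steps(math.pi / 2, 1.0))        # different values, no gain at all
```

Under these assumptions the distance grows with the angle between the two value systems and shrinks as the gain in well-being grows, which matches the dependence described above.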

Another key insight is that if you want to persuade someone to change their mind, you need to do it slowly, with small changes repeated many times, and you need to benefit them at each step. You can only persuade someone to change their mind if, at each step, they end up better off than they were.

Is this an endorsement of wishful thinking? Not if we define “well-being” in the proper way. It can make me better off in a deep sense to realize that my wishful thinking was incorrect, so that I realize what must be done to actually get the good things I thought I already had. It’s not necessary to appeal to material benefits; it’s necessary to appeal to current values.

But it does support the notion that you can’t persuade someone by belittling them. You won’t convince people to join your side by telling them that they are defective and bad and should feel guilty for being who they are.

If that seems obvious, well, maybe you should talk to some of the people who are constantly pushing “White privilege”. If you focused on how reducing racism would make people—even White people—better off, you’d probably be more effective. In some cases there would be direct material benefits: Racism creates inefficiency in markets that reduces overall output. But in other cases, sure, maybe there’s no direct benefit for the person you’re talking to; but you can talk about other sorts of benefits, like what sort of world they want to live in, or how proud they would feel to be part of the fight for justice. You can say all you want that they shouldn’t need this kind of persuasion, they should already believe and do the right thing—and you might even be right about that, in some ultimate sense—but do you want to change their minds or not? If you actually want to change their minds, you need to meet them where they are, make small changes, and offer benefits at each step.

If you don’t, you’ll just keep on projecting a vector orthogonally, and you’ll keep ending up with zero.

“If the projection of the new vector onto the old vector is longer than the old vector itself, you should accept the value change.”

Ok, then maybe you could be convinced to form an opinion on the collaboration between Vernon Neppe and Edward Close? I think your idea here is very clear, but the opportunity cost of changing one’s mind, especially when adopting a new value system, can be immense. In this case it requires learning new forms of math and giving up materialism. As for what you gain? Insight.

As a small introduction you might try this page, which covers a 1965 proof of FLT using the division algorithm and three of its corollaries.

http://www.erclosetphysics.com/search?q=flt65&max-results=20&by-date=true

I am neither a mathematician nor a physicist, but it is clear their work is extremely creative. I would be curious to see if you find his proof convincing. Few have. My prediction, based purely on psychology, is that you haven’t the patience for strange ideas. The universe is a strange place, until we understand it. But if they are right no human mind will for many centuries to come…
