JDN 2457447

One of the most baffling facts about the world, particularly to a development economist, is that the leading causes of death around the world broadly cluster into two categories: Obesity, in First World countries, and starvation, in Third World countries. At first glance, it seems like the rich are eating too much and there isn’t enough left for the poor.

Yet it’s not quite so simple as that, because in fact obesity is most common among the poor in First World countries, and in Third World countries obesity rates are rising rapidly and co-existing with starvation. It is becoming recognized that there are many different kinds of obesity, and that a past history of starvation is actually a major risk factor in future obesity.

Indeed, the really fundamental problem is malnutrition: people are not necessarily eating too much or too little; they are eating the wrong things. So, my question is: Why?

It is widely thought that foods which are nutritious are also unappetizing, and conversely that foods which are delicious are unhealthy. There is a clear kernel of truth here, as a comparison of Brussels sprouts versus ice cream will surely indicate. But this is actually somewhat baffling. We are an evolved organism; one would think that natural selection would shape us so that we enjoy foods which are good for us and avoid foods which are bad for us.

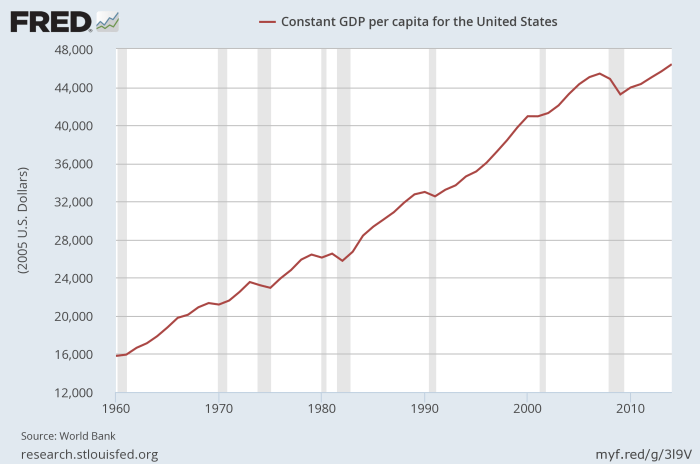

I think it did, actually; the problem is, we have changed our situation so drastically by means of culture and technology that evolution hasn’t had time to catch up. We have evolved significantly since the dawn of civilization, but we haven’t had any time to evolve since one event in particular: The Green Revolution. Indeed, many people are still alive today who were born while the Green Revolution was still underway.

The Green Revolution is the culmination of a long process of development in agriculture and industrialization, but it would be difficult to overstate its importance as an epoch in the history of our species. We now have essentially unlimited food.

Not literally unlimited, of course; we do still need land, and water, and perhaps most notably energy (oil-driven machines are a vital part of modern agriculture). But we can produce vastly more food than was previously possible, and food supply is no longer a binding constraint on human population. Indeed, we already produce enough food to feed 10 billion people. People who say that some new agricultural technology will end world hunger don’t understand what world hunger actually is. Food production is not the problem—distribution of wealth is the problem.

I often speak about the possibility of reaching post-scarcity in the future; but we have essentially already done so in the domain of food production. If everyone ate what would be optimally healthy, and we distributed food evenly across the world, there would be plenty of food to go around and no such thing as obesity or starvation.

So why hasn’t this happened? Well, the main reason, as I said, is distribution of wealth.

But that doesn’t explain why so many people who do have access to good foods nonetheless don’t eat them.

The first thing to note is that healthy food is more expensive. By First World standards the difference isn’t huge: about $550 per year extra per person. But a typical nutritious diet still costs significantly more than a typical diet. Worse yet, this gap appears to be growing over time.
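To put that annual figure in everyday terms, here is a rough back-of-the-envelope sketch in Python. The $550 figure is the one quoted above; the 365-day year and the four-person household are purely illustrative assumptions, not numbers from any study.

```python
# Back-of-the-envelope conversion of the annual premium for a nutritious diet.
# Only the $550 figure comes from the text; everything else is an assumption.

EXTRA_COST_PER_PERSON_PER_YEAR = 550.0  # USD, figure quoted in the text
DAYS_PER_YEAR = 365                     # assumption: ignore leap years


def extra_cost_per_day(annual_premium: float = EXTRA_COST_PER_PERSON_PER_YEAR) -> float:
    """Daily extra cost per person implied by an annual premium."""
    return annual_premium / DAYS_PER_YEAR


def household_premium(people: int,
                      annual_premium: float = EXTRA_COST_PER_PERSON_PER_YEAR) -> float:
    """Annual extra cost for a household, assuming every member pays the same premium."""
    return people * annual_premium


if __name__ == "__main__":
    print(f"Per person per day: ${extra_cost_per_day():.2f}")
    # Hypothetical four-person household, chosen only for illustration.
    print(f"Household of four per year: ${household_premium(4):.2f}")
```

The per-person amount is small, but it scales linearly with household size, which is part of why it weighs more heavily on poor families.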

But why is this the case? It’s actually quite baffling on its face. Nutritious foods are typically fruits and vegetables that one can simply pluck off plants. Unhealthy foods are typically complex processed foods that require machines and advanced technology. There should be “value added”, at least in the economic sense; additional labor must go in, additional profits must come out. Why is it cheaper?

In a word? Subsidies.

Somehow, huge agribusinesses have convinced governments around the world that they deserve to be paid extra money, either simply for existing or based on how much they produce. Of course, when I say “somehow”, I mean lobbying.

In the US, these subsidies overwhelmingly go toward corn, followed by cotton, followed by soybeans.

In fact, they don’t actually even go to corn as you would normally think of it, like sweet corn or corn on the cob. No, they go to feed corn—really awful stuff that includes the entire plant, is barely even recognizable as corn, and has its “quality” literally rated by scales and sieves. No living organism was ever meant to eat this stuff.

Humans don’t, of course. Cows do. But they didn’t evolve for this stuff either; they can’t digest it properly, and it’s because of this terrible food we force-feed them that they need so many antibiotics.

Thus, these corn subsidies are really primarily beef subsidies: they are a means of externalizing the cost of beef production and keeping the price of hamburgers artificially low. In all, two-thirds of US agricultural subsidies ultimately go to meat production. I haven’t been able to find any really good estimates, but as a ballpark figure it seems that meat would cost about twice as much if we didn’t subsidize it.

Fortunately, a lot of these subsidies have been decreased under the Obama administration, particularly “direct payments”, which are sort of like a basic income, but for agribusinesses. (That is not what basic incomes are for.) You can see the decline in US corn subsidies here.

Despite all this, however, subsidies cannot explain obesity. Removing them would have only a small effect.

An often overlooked consideration is that nutritious food can be more expensive for a family even if the actual price tag is the same.

Why? Because kids won’t eat it.

To raise kids on a nutritious diet, you have to feed them small amounts of good food over a long period of time, until they acquire the taste. In order to do this, you need to be prepared to waste a lot of food, and that costs money. It’s cheaper to simply feed them something unhealthy, like ice cream or hot dogs, that you know they’ll eat.

And this brings me to what I think is the real ultimate cause of our awful diet: We evolved for a world of starvation, and our bodies cannot cope with abundance.

It’s important to be clear about what we mean by “unhealthy food”; people don’t enjoy consuming lead and arsenic. Rather, we enjoy consuming fat and sugar. Contrary to what fad diets will tell you, fat and sugar are not inherently bad for human health; indeed, we need a certain amount of fat and sugar in order to survive. What we call “unhealthy food” is actually food that we desperately need—in small quantities.

Under the conditions in which we evolved, fat and sugar were extremely scarce. Eating fat meant hunting a large animal, which required the cooperation of the whole tribe (a quite literal Stag Hunt) and carried risk to life and limb, not to mention the chance of simply failing and getting nothing. Eating sugar meant finding fruit trees and gathering fruit from them, and fruit trees are not all that common in nature. These foods also spoil quite quickly, so you eat them right away or not at all.

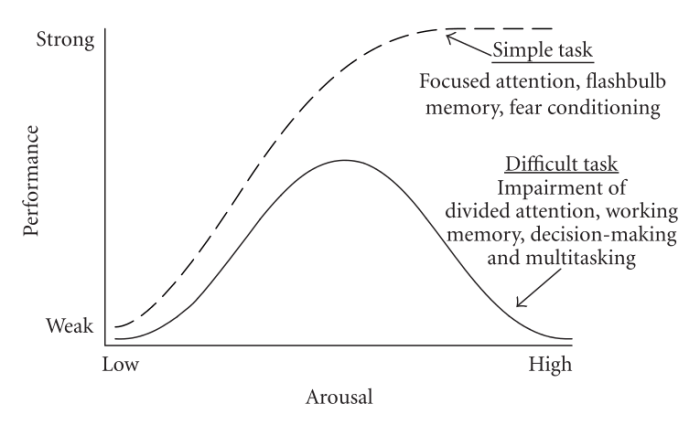

As such, we evolved to really crave these things, to ensure that we would eat them whenever they were available. Since they weren’t available all that often, this was just about right to ensure that we managed to eat enough, and rarely meant that we ate too much.

But now fast-forward to the Green Revolution. Fat and sugar aren’t scarce anymore. They’re everywhere. There are whole buildings we can go to with shelves upon shelves of them, which we ourselves can claim simply by swiping a little plastic card through a reader. We don’t even need to understand how that system of encrypted data networks operates, or what exactly is involved in maintaining our money supply (and most people clearly don’t); all we need to do is perform the right ritual and we will receive an essentially unlimited abundance of fat and sugar.

Even worse, this food is in processed form, so we can extract the parts that make it taste good, while separating them from the parts that actually make it nutritious. If fruits were our main source of sugar, that would be fine. But instead we get it from corn syrup and sugarcane, and even when we do get it from fruit, we extract the sugar instead of eating the whole fruit.

Natural selection had no particular reason to give us that level of discrimination; since eating apples and oranges was good for us, we evolved to like the taste of apples and oranges. There wasn’t a sufficient selection pressure to make us actually eat the whole fruit as opposed to extracting the sugar, because extracting the sugar was not an option available to our ancestors. But it is available to us now.

Vegetables, on the other hand, are also more abundant now, but were already fairly abundant. Indeed, it may be significant that we’ve had enough time to evolve since agriculture, but not enough time since fertilizer. Agriculture allowed us to make plenty of wheat and carrots; but it wasn’t until fertilizer that we could make enough hamburgers for people to eat them regularly. It could be that our hunter-gatherer ancestors actually did crave carrots in much the same way they and we crave sugar; but since agriculture we have no further reason to do so because carrots have always been widely available.

One thing I do still find a bit baffling: Why are so many green vegetables so bitter? It would be one thing if they simply weren’t as appealing as fat and sugar; but it honestly seems like a lot of green vegetables, such as broccoli, spinach, and Brussels sprouts, are really quite actively aversive, at least until you acquire the taste for them. Given how nutritious they are, it seems like there should have been a selective pressure in favor of liking the taste of green vegetables; but there wasn’t. I wonder if it’s actually coevolution—if perhaps broccoli has been evolving to not be eaten as quickly as we were evolving to eat it. This wouldn’t happen with apples and oranges, because in an evolutionary sense apples and oranges “want” to be eaten; they spread their seeds in the droppings of animals. But for any given stalk of broccoli, becoming lunch is definitely bad news.

Yet even this is pretty weird, because broccoli has definitely evolved substantially since agriculture—indeed, broccoli as we know it would not exist otherwise. Ancestral Brassica oleracea was bred to become cabbage, broccoli, cauliflower, kale, Brussels sprouts, collard greens, savoy, kohlrabi and kai-lan—and looks like none of them.

It looks like I still haven’t solved the mystery. In short, we get fat because kids hate broccoli; but why in the world do kids hate broccoli?