Mar 29 JDN 246129

When the preliminary data for our job markets over the past few months were released, they looked all right. But after more careful analysis and better data have allowed us to revise the figures and do more accurate seasonal adjustments, the results are really quite shocking:

The United States has lost more jobs than it created over the last six months.

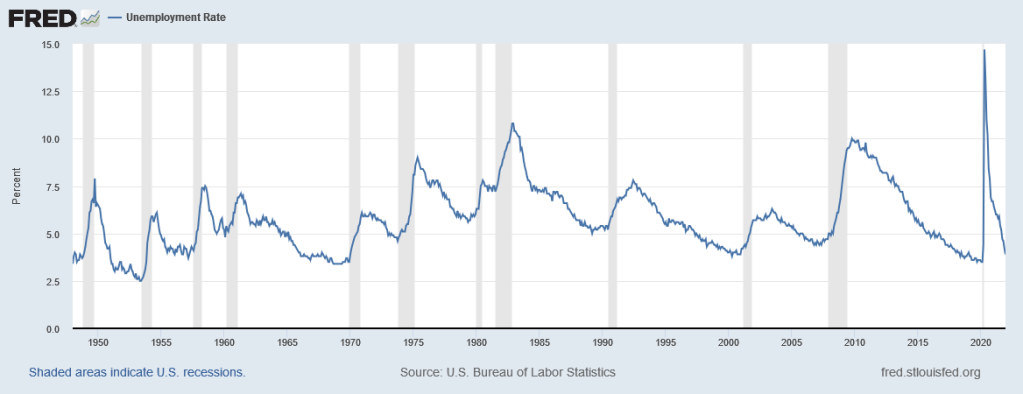

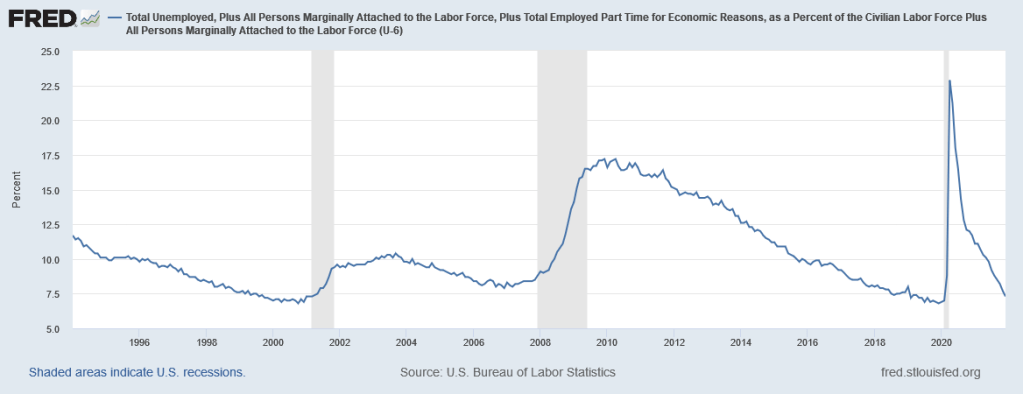

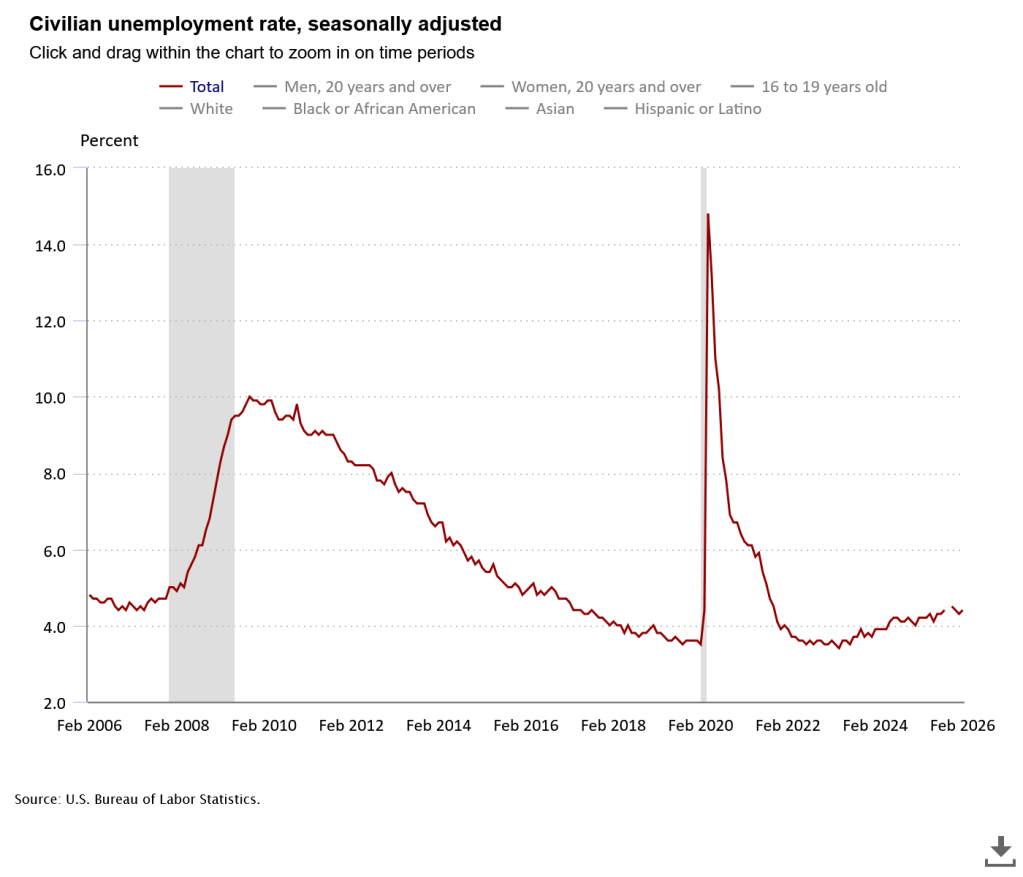

That is certainly something we’ve done before; it is indeed what tends to happen during recessions. But no recession has been declared, GDP seems to be growing normally, and unemployment still stands at a perfectly reasonable 4.4%.

What’s going on here?

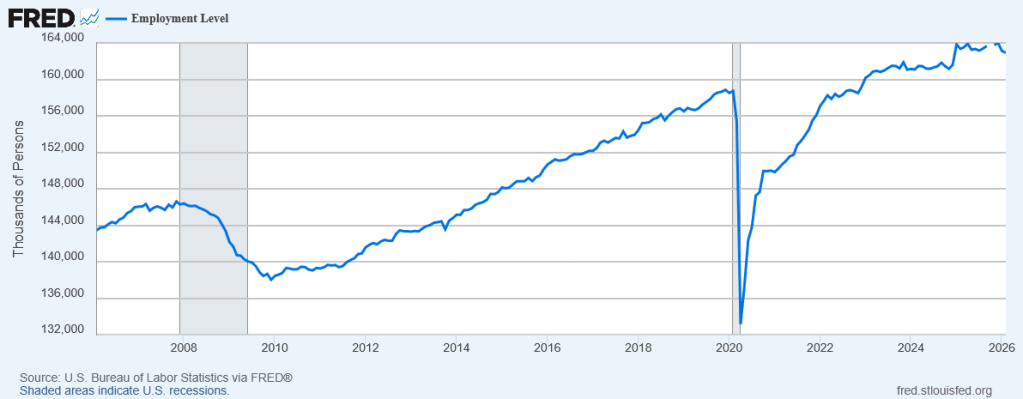

If you look at the employment level—the absolute number of people employed—it looks shockingly flat since 2023.

From 2009 to 2019, US employment grew from 138 million to 159 million, growing at 1.4% per year. Obviously it collapsed during the 2020 recession, but then it recovered to 158 million by the end of 2022. It now stands at 163 million, only 0.7% growth per year since 2022. Since January 2025 it has actually fallen from a peak of 164 million.
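For anyone who wants to check the arithmetic, here is a minimal sketch of how an annualized growth rate like the 1.4% figure can be computed from two employment levels. The function name `annual_growth_rate` is my own, and the inputs are the rounded figures quoted above, so the result is only approximate.

```python
def annual_growth_rate(start, end, years):
    """Compound annual growth rate between two levels, as a fraction."""
    return (end / start) ** (1 / years) - 1


# Employment levels in millions, rounded as quoted in the text.
rate_2009_2019 = annual_growth_rate(138, 159, 10)
print(f"2009-2019: {rate_2009_2019:.1%} per year")  # prints "2009-2019: 1.4% per year"
```

The same function works for any other period, but with levels rounded to the nearest million and only a few years between them, the answer is quite sensitive to the exact start and end dates chosen.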

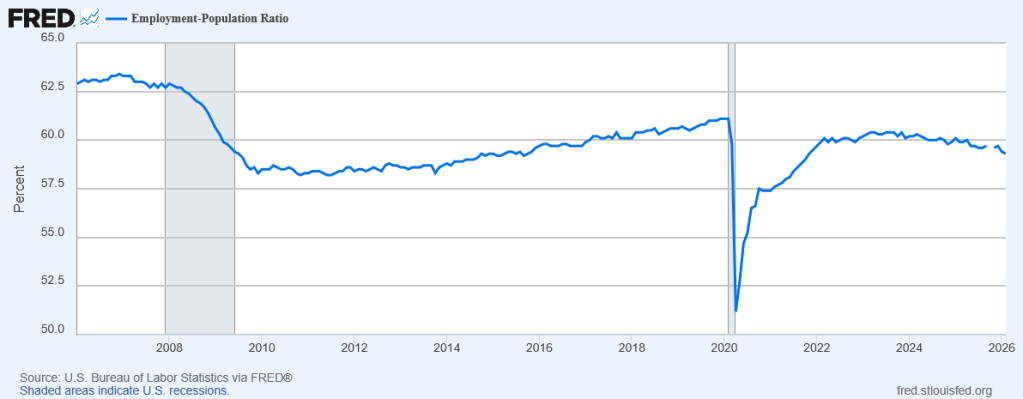

Because our population is growing (albeit not as much as it once was, because immigration has collapsed after Trump’s crackdowns), this actually looks even worse when you consider the employment rate, the ratio between the number of people employed and the total population.

The US employment rate peaked at 61.1% just before the 2020 recession, and has still not recovered to that level. It reached 60% in 2022, stayed around there through 2024, and has since declined to 59.3%. In fact, it was even higher in 2007 before the other big recession of my adult life (you know, it’s starting to feel like the economy hates Millennials in particular), reaching 63.3% before crashing and never recovering.

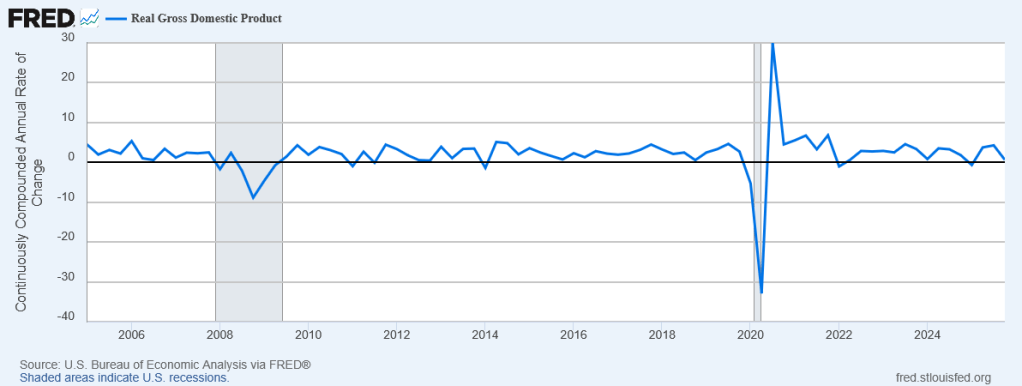

Yet our GDP growth looks fine!

Sure, it had a huge drop in the 2020 recession, but it grew very fast in the recovery, and since then has fluctuated a bit, but generally averaged about 2.5% per year, which is pretty good for a highly developed country. We had negative growth in the first quarter of 2025 and slow growth in the fourth quarter, but the second and third quarters both had strong growth to make up for it. Overall real GDP growth for 2025 as a whole was a perfectly respectable 2.1%.

Even our unemployment rate looks fine—though with employment falling, it suggests more people are leaving the labor force instead of looking for jobs at all.

The only major industry that has actually shown strong employment growth over the last year is healthcare, growing 2.4%. Every other major industry grew 1% or less, or even shrank.

What would cause something like this?

This actually looks like what you’d expect to happen under technological unemployment: Productivity-enhancing technology allows GDP to increase even as employment falls.

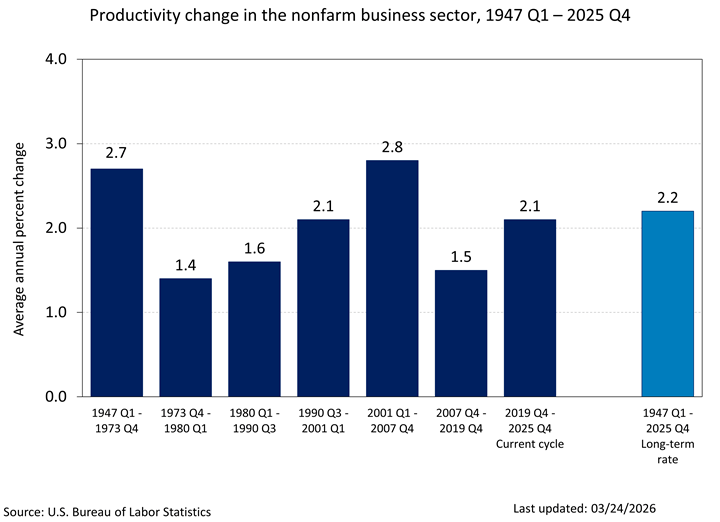

But we haven’t actually had a surge in productivity. The massive, utterly irresponsible rollout of AI technology has had little, if any, effect on productivity. Saving 3% of effort really isn’t that much, especially since a lot of people seem to overestimate how much AI tools help them.

Overall, our productivity growth looks… pretty normal, by historical standards.

Instead, what actually seems to be happening is what we might call techno-hype unemployment: Employers think that a massive productivity surge is around the corner, and they’ve already stopped hiring in anticipation of that.

Maybe they’re not even wrong about that! There is now some evidence that while initial adoption of AI reduces productivity, eventually it may increase productivity. (But we really haven’t had it long enough to be sure.)

Unemployment isn’t rising very much, not because people are finding jobs, but because people who already have jobs are generally keeping them, while people who don’t have jobs are basically giving up.

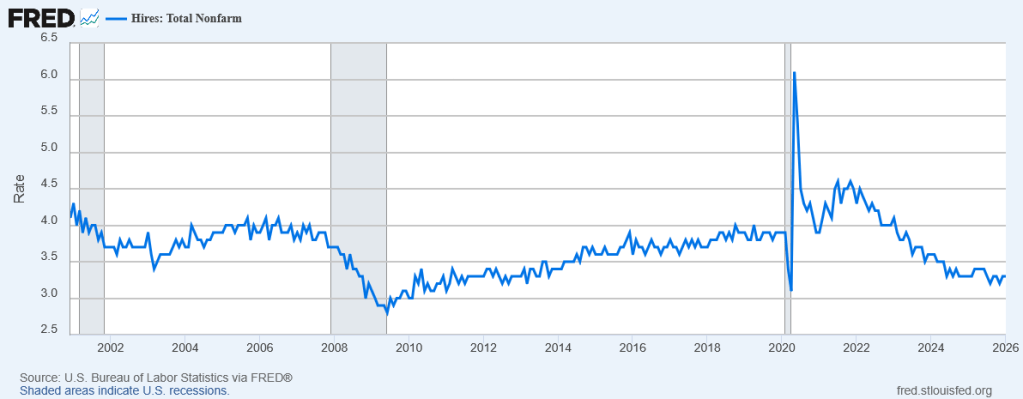

The hiring rate is now the lowest it has been since the 2020 recession—and not much higher than it was at the trough of the 2020 recession!

As far as I can tell, on our current path, one of two things will happen:

- The current paradigm of AI will work, and genuinely increase productivity.

- The current paradigm of AI will fail, and expected productivity gains will not materialize.

It turns out that neither possibility looks good for workers.

If AI succeeds, then businesses seem like they’re gonna just… stop hiring, especially for entry-level positions that can be more readily replaced. People who already have senior positions may do just fine, or even make more money; but anyone fresh out of college, or even anyone whose career got derailed and is trying to start again, looks like they’ll just be… out of luck.

It’s every capitalist’s dream: To buy a machine that lets you never have to hire anyone ever again. And maybe, at last, they’ve found that Holy Grail.

On the other hand, if AI fails, the bubble will burst, the huge amount of investment that was previously driving the economy will suddenly dry up, and we will have a financial crisis and a recession. Businesses that were so sure they could replace their workers with AI will want to start hiring again, but won’t be able to, because no one can afford to buy anything and so nobody is making any revenue to pay employees with.

In many ways, the second one appears to be the preferable outcome, because at least it’s temporary. We would, sooner or later, recover from that recession and bring things back to normal. If AI ever actually works even half as well as most of the tech industry claims it will any minute, the most likely outcome seems to be launching us fully into a cyberpunk dystopia where a handful of trillionaires own everything and the rest of us struggle for scraps because our skills can now be replaced by machines.

This didn’t have to happen.

Even if AI is really going to be a transformational technology, we could have prepared for it better. We could have implemented policies that would ensure that people would continue to be provided for even as their labor was more and more replaced by machines. But that would have made the billionaires slightly less rich, and it sounded like “socialism” to ideologues, and the right-wing media convinced millions of people that even moving slightly in that direction would destroy all they held dear.

It’s not even too late! We could still turn it around, if those same people who stopped us from doing the right thing before weren’t still in charge of everything and richer than ever and just as effective as they ever were at deluding the masses.

I don’t know how to be optimistic about the future anymore. It feels like I’m watching the collapse of our entire civilization live in real time.