JDN 2457082 EDT 11:15.

Economists are often accused of assigning dollar values to everything, of being Oscar Wilde’s definition of a cynic, someone who knows the price of everything and the value of nothing. And there is more than a little truth to this, particularly among neoclassical economists; I was alarmed a few days ago to receive an email response from an economist that included the word ‘altruism’ in scare quotes as though this were somehow a problematic or unrealistic concept. (Actually, altruism is already formally modeled by biologists, and my claim that human beings are altruistic would be so uncontroversial among evolutionary biologists as to be considered trivial.)

But sometimes this accusation is based upon something economists do that is actually tremendously useful, even necessary to good policymaking: We make everything quantitative. Nothing is ever “yes” or “no” to an economist (sometimes even when it probably should be; the debate among economists in the 1960s over whether slavery is economically efficient does seem rather beside the point), but always more or less; never good or bad but always better or worse. For example, as I discussed in my post on minimum wage, the mainstream position among economists is not that minimum wage is always harmful nor that minimum wage is always beneficial, but that minimum wage is a policy with costs and benefits that on average neither increases nor decreases unemployment. The mainstream position among economists about climate policy is that we should institute either a high carbon tax or a system of cap-and-trade permits; no economist I know wants us to either do nothing and let the market decide (a position most Republicans currently seem to take) or suddenly ban coal and oil (the latter is a strawman position I’ve heard environmentalists accused of, but I’ve never actually heard advocated; even Greenpeace wants to ban offshore drilling, not oil in general).

This makes people uncomfortable, I think, because they want moral issues to be simple. They want “good guys” who are always right and “bad guys” who are always wrong. (Speaking of strawman environmentalism, a good example of this is Captain Planet, in which no one ever seems to pollute the environment in order to help people or even in order to make money; no, they simply do it because they hate clean water and baby animals.) They don’t want to talk about options that are more good or less bad; they want one option that is good and all other options that are bad.

This attitude tends to become infused with righteousness, such that anyone who disagrees is an agent of the enemy. Politics is the mind-killer, after all. If you acknowledge that there might be some downside to a policy you agree with, that’s like betraying your team.

But in reality, the failure to acknowledge downsides can lead to disaster. Problems that could have been prevented are instead ignored and denied. Getting the other side to recognize the downsides of their own policies might actually help you persuade them to your way of thinking. And appreciating that there is a continuum of possibilities that are better and worse in various ways to various degrees is what allows us to make the world a better place even as we know that it will never be perfect.

There is a common refrain you’ll hear from a lot of social justice activists which sounds really nice and egalitarian, but actually has the potential to completely undermine the entire project of social justice.

This is the idea that oppression can’t be measured quantitatively, and we shouldn’t try to compare different levels of oppression. The notion that some people are more oppressed than others is often derided as the Oppression Olympics. (Some use this term more narrowly to mean when a discussion is derailed by debate over who has it worse—but then the problem is really discussions being derailed, isn’t it?)

This sounds nice, because it means we don’t have to ask hard questions like, “Which is worse, sexism or racism?” or “Who is worse off, people with cancer or people with diabetes?” These are very difficult questions, and maybe they aren’t the right ones to ask—after all, there’s no reason to think that fighting racism and fighting sexism are mutually exclusive; they can in fact be complementary. Research into cancer only prevents us from doing research into diabetes if our total research budget is fixed—this is more than anything else an argument for increasing research budgets.

But we must not throw out the baby with the bathwater. Oppression is quantitative. Some kinds of oppression are clearly worse than others.

Why is this important? Because otherwise you can’t measure progress. If you have a strictly qualitative notion of oppression where it’s black-and-white, on-or-off, oppressed-or-not, then we haven’t made any progress on just about any kind of oppression. There is still racism, there is still sexism, there is still homophobia, there is still religious discrimination. Maybe these things will always exist to some extent. This makes the fight for social justice a hopeless Sisyphean task.

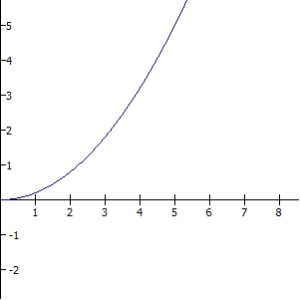

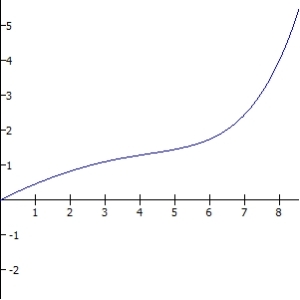

But in fact, that’s not true at all. We’ve made enormous progress. Unbelievably fast progress. Mind-boggling progress. For hundreds of millennia humanity made almost no progress at all, and then in the last few centuries we have suddenly leapt toward justice.

Sexism used to mean that women couldn’t own property, they couldn’t vote, they could be abused and raped with impunity—or even beaten or killed for being raped (which Saudi Arabia still does by the way). Now sexism just means that women aren’t paid as well, are underrepresented in positions of power like Congress and Fortune 500 CEOs, and they are still sometimes sexually harassed or raped—but when men are caught doing this they go to prison for years. This change happened in only about 100 years. That’s fantastic.

Racism used to mean that Black people were literally property to be bought and sold. They were slaves. They had no rights at all, they were treated like animals. They were frequently beaten to death. Now they can vote, hold office—one is President!—and racism means that our culture systematically discriminates against them, particularly in the legal system. Racism used to mean you could be lynched; now it just means that it’s a bit harder to get a job and the cops will sometimes harass you. This took only about 200 years. That’s amazing.

Homophobia used to mean that gay people were criminals. We could be sent to prison or even executed for the crime of making love in the wrong way. If we were beaten or murdered, it was our fault for being faggots. Now, homophobia means that we can’t get married in some states (and fewer all the time!), we’re depicted on TV in embarrassing stereotypes, and a lot of people say bigoted things about us. This has only taken about 50 years! That’s astonishing.

And above all, the most extreme example: Religious discrimination used to mean you could be burned at the stake for not being Catholic. It used to mean—and in some countries still does mean—that it’s illegal to believe in certain religions. Now, it means that Muslims are stereotyped because, well, to be frank, there are some really scary things about Muslim culture and some really scary people who are Muslim leaders. (Personally, I think Muslims should be more upset about Ahmadinejad and Al Qaeda than they are about being profiled in airports.) It means that we atheists are annoyed by “In God We Trust”, but we’re no longer burned at the stake. This has taken longer, more like 500 years. But even though it took a long time, I’m going to go out on a limb and say that this progress is wonderful.

Obviously, there’s a lot more progress remaining to be made on all these issues, and others—like economic inequality, ableism, nationalism, and animal rights—but the point is that we have made a lot of progress already. Things are better than they used to be—a lot better—and keeping this in mind will help us preserve the hope and dedication necessary to make things even better still.

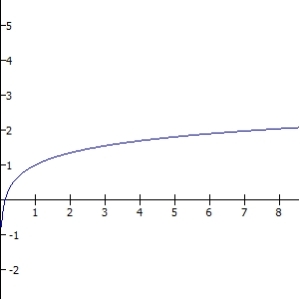

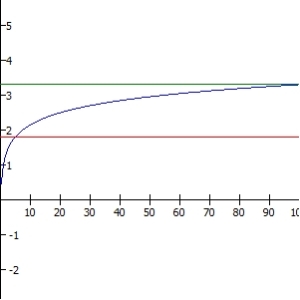

If you think that oppression is either-or, on-or-off, you can’t celebrate this progress, and as a result the whole fight seems hopeless. Why bother, when it’s always been on, and will probably never be off? But we started with oppression that was absolutely horrific, and now it’s considerably milder. That’s real progress. At least within the First World we have gone from 90% oppressed to 25% oppressed, and we can bring it down to 10% or 1% or 0.1% or even 0.01%. Those aren’t just numbers, those are the lives of millions of people. As democracy spreads worldwide and poverty is eradicated, oppression declines. Step by step, social changes are made, whether by protest marches or forward-thinking politicians or even by lawyers and lobbyists (they aren’t all corrupt).

And indeed, a four-year-old Black girl with a mental disability living in Ghana whose entire family’s income is $3 a day is more oppressed than I am, and not only do I have no qualms about saying that, it would feel deeply unseemly to deny it. I am not totally unoppressed—I am a bisexual atheist with chronic migraines and depression in a country that is suspicious of atheists, systematically discriminates against LGBT people, and does not make proper accommodations for chronic disorders, particularly mental ones. But I am far less oppressed, and that little girl (she does exist, though I know not her name) could be made much less oppressed than she is even by relatively simple interventions (like a basic income). In order to make her fully and totally unoppressed, we would need such a radical restructuring of human society that I honestly can’t really imagine what it would look like. Maybe something like The Culture? Even then as Iain Banks imagines it, there is inequality between those within The Culture and those outside it, and there have been wars like the Idiran-Culture War which killed billions, and among those trillions of people on thousands of vast orbital habitats someone, somewhere is probably making a speciesist remark. Yet I can state unequivocally that life in The Culture would be better than my life here now, which is better than the life of that poor disabled girl in Ghana.

To be fair, we can’t actually put a precise number on it—though many economists try, and one of my goals is to convince them to improve their methods so that they stop using willingness-to-pay and instead try to actually measure utility by something like QALY. A precise number would help, actually—it would allow us to do cost-benefit analyses to decide where to focus our efforts. But while we don’t need a precise number to tell when we are making progress, we do need to acknowledge that there are degrees of oppression, some worse than others.
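To make the cost-benefit idea concrete, here is a minimal sketch of how such a comparison might look if we did have QALY estimates. Everything in it is an assumption for illustration: the `Intervention` class, the `rank_by_cost_effectiveness` helper, the program names, and every number are hypothetical placeholders, not real estimates of anything.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    """A hypothetical program with a total cost and an estimated QALY gain."""
    name: str
    cost_usd: float
    qalys_gained: float

    @property
    def cost_per_qaly(self) -> float:
        # Lower is better: dollars spent per quality-adjusted life year gained.
        return self.cost_usd / self.qalys_gained


def rank_by_cost_effectiveness(interventions):
    """Return the interventions sorted from cheapest to most expensive per QALY."""
    return sorted(interventions, key=lambda i: i.cost_per_qaly)


# Placeholder figures, invented purely for illustration.
options = [
    Intervention("program_a", cost_usd=1_000_000, qalys_gained=500),
    Intervention("program_b", cost_usd=200_000, qalys_gained=400),
    Intervention("program_c", cost_usd=50_000, qalys_gained=10),
]

for opt in rank_by_cost_effectiveness(options):
    print(f"{opt.name}: ${opt.cost_per_qaly:,.0f} per QALY")
```

The sorting is trivial. The hard part is getting a trustworthy `qalys_gained` figure in the first place, which is exactly where better measurement methods would matter.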

Oppression is quantitative. And our goal should be minimizing that quantity.