May 24 JDN 2461185

Content warning: Algebra

In 2018, David Graeber published a book called Bullshit Jobs, positing that the transition of our economy from industrial manufacturing to ‘post-industrial’ services was in fact largely a transition from meaningful jobs that do actual work to meaningless jobs that employ people without contributing to society.

He made the point vividly in this article for Strike magazine:

> For instance: in our society, there seems a general rule that, the more obviously one’s work benefits other people, the less one is likely to be paid for it. Again, an objective measure is hard to find, but one easy way to get a sense is to ask: what would happen were this entire class of people to simply disappear? Say what you like about nurses, garbage collectors, or mechanics, it’s obvious that were they to vanish in a puff of smoke, the results would be immediate and catastrophic. A world without teachers or dock-workers would soon be in trouble, and even one without science fiction writers or ska musicians would clearly be a lesser place. It’s not entirely clear how humanity would suffer were all private equity CEOs, lobbyists, PR researchers, actuaries, telemarketers, bailiffs or legal consultants to similarly vanish. (Many suspect it might markedly improve.) Yet apart from a handful of well-touted exceptions (doctors), the rule holds surprisingly well.

I think his claim is a little overstated, but the basic pattern does seem valid: Less and less labor is spent actually making things (or even really doing things) and more and more is spent on selling things and advertising things and suing over things.

While the pattern seems genuine, it’s a little hard to verify empirically, as the usual BLS categories lump useful and useless work together: “Sales and office occupations” includes far too many things to be useful.

But I think Graeber’s explanations of where the pattern comes from are pretty much totally wrong. He attributes it to “office feudalism” where bosses want to act like lords and “make-work” from government policy trying to create jobs without regard for whether those jobs are useful. I’m not saying these things never happen, but it’s not enough to explain a massive economic transition.

Rather, with a little bit of economic theory, I think it’s not hard to see that these “bullshit jobs” are actually rent-seeking; they don’t produce anything for society, but they are genuinely profitable for the companies that hire them—and thus it’s perfectly rational for a profit-maximizing corporation to behave in this way.

Here’s a simple model to demonstrate the idea.

Suppose there are two corporations, Zero Inc. and One Corp, which both produce cars.

Each factory worker produces r cars. (Presumably they really produce some fraction 1/n of n r cars, but it works out the same.) The labor productivity of factory workers is thus r.

Each factory worker receives a wage wf, which is outside the control of these two corporations (it’s set by a larger labor market).

This means that the marginal cost of producing a car is wf/r. As productivity increases, producing cars becomes cheaper.

The two corporations are in Cournot competition, so they face a total demand for cars that looks like this:

p = a – b (q0 + q1)

where q0 is how many cars Zero produces and q1 is how many cars One produces, and a and b are parameters. This is exogenous; it’s determined by broader market conditions, not by anything the corporations can control.

I’ll spare you the algebra, but this has a well-known equilibrium solution; the two corporations produce the same amount and charge the same price:

q0 = q1 = (a – wf/r)/(3b)

p = (a + 2wf/r)/3

Their profits are also the same, naturally:

F0 = F1 = (p – wf/r) q0 = (a – wf/r)^2/(9b)
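For readers who would rather check the algebra than take it on faith, here is a minimal Python sketch of the equilibrium above. The function names and the parameter values (a = 10, b = 1, wf = 1, r = 1) are illustrative choices, not part of the model itself. It confirms that each firm's output is a best response to the other's and that the closed-form profit matches (p – wf/r) times quantity.

```python
def cournot_equilibrium(a, b, wf, r):
    """Symmetric Cournot duopoly with linear demand p = a - b*(q0 + q1)."""
    c = wf / r                       # marginal cost of one car
    q = (a - c) / (3 * b)            # output of each firm
    p = (a + 2 * c) / 3              # market price
    profit = (a - c) ** 2 / (9 * b)  # profit of each firm
    return q, p, profit


def best_response(q_other, a, b, wf, r):
    """Profit-maximizing output given the rival's output.

    Maximizing (a - b*(q + q_other) - c) * q over q gives the
    first-order condition q = (a - c - b*q_other) / (2*b).
    """
    c = wf / r
    return max(0.0, (a - c - b * q_other) / (2 * b))


# Illustrative parameter values (assumed for this check only).
a, b, wf, r = 10.0, 1.0, 1.0, 1.0
q, p, profit = cournot_equilibrium(a, b, wf, r)

# At equilibrium, each firm's output is a best response to the other's.
assert abs(best_response(q, a, b, wf, r) - q) < 1e-12
# The closed-form profit equals (price - marginal cost) * quantity.
assert abs((p - wf / r) * q - profit) < 1e-12
print(q, p, profit)
```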

The number of workers employed making cars is:

L = (q0 + q1)/r = 2(a – wf/r)/(3br)

Because the numerator increases with r but so does the denominator, it isn’t obvious whether this is increasing or decreasing in r; more productivity may result in either more or fewer factory workers employed.

By taking the derivative, this can be shown:

dL/dr > 0 iff a < 2wf/r (equivalently, r < 2wf/a)

This means that at relatively low levels of productivity, more productivity will increase employment of factory workers; but at high levels of productivity, it will decrease it.
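The turning point can be checked numerically. The sketch below is only an illustration under assumed parameter values (a = 10, b = 1, wf = 1): it evaluates the employment formula on either side of r = 2wf/a and estimates the slope with a finite difference. The helper name `factory_workers` is hypothetical.

```python
def factory_workers(r, a=10.0, b=1.0, wf=1.0):
    """Total factory employment L = (q0 + q1) / r at the Cournot equilibrium."""
    return 2 * (a - wf / r) / (3 * b * r)


# Illustrative parameter values (assumed for this check only).
a, b, wf = 10.0, 1.0, 1.0
r_peak = 2 * wf / a   # productivity level at which dL/dr changes sign

# Central finite differences on either side of the turning point.
# Both test points keep a > wf/r, so output is positive.
h = 1e-6
slope_below = (factory_workers(0.15 + h) - factory_workers(0.15 - h)) / (2 * h)
slope_above = (factory_workers(0.30 + h) - factory_workers(0.30 - h)) / (2 * h)

# Employment rises with productivity below r_peak and falls above it.
print(r_peak, slope_below > 0, slope_above < 0)
```

With these values the turning point sits at r = 0.2, so the later numerical example (r = 1 and r = 10) lies entirely on the declining side.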

Since profits are proportional to (a – wf/r)^2, the higher profits are, the more likely it is that additional productivity will reduce employment of factory workers.

But what if factory workers aren’t the only workers each corporation can employ?

Suppose now that there is another kind of worker they can employ, salesmen.

Zero employs s0 salesmen, while One employs s1. Salesmen are paid a wage ws.

Salesmen don’t actually make anything. They produce no real output. Instead, they allow each firm to take a larger share of the market.

To model this, I’ll use a contest function (I used a similar model in some posts in 2020), where the actual quantity each corporation produces and sells is proportional to the relative number of salesmen employed at each corporation:

q0 = (s0)/(s0 + s1) q

I’ll assume that the total number of cars produced and sold, q = q0 + q1, is the same as before, and so is the price. (Dropping this assumption makes the model much more complicated and hard to solve, without significantly changing the overall conclusions.)

Each corporation’s profits now depend on both how many cars they produce and how many salesmen they hire:

F0 = p q0 – (wf/r) q0 – ws s0

Again I will spare you the algebra, but it comes out like this:

s0 = s1 = (a – wf/r)^2 / (18 b ws)

Unlike employment of factory workers, which may increase or decrease as productivity increases, employment of salesmen always increases.
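As a rough check on the salesmen result, the sketch below holds total output and price at their Cournot levels (the assumption stated above) and searches a grid of alternative hiring levels for Zero Inc. while One Corp hires the equilibrium number. The function names, the grid, and the parameter values (a = 10, b = 1, wf = 1, ws = 2, r = 1) are illustrative assumptions.

```python
def salesmen_per_firm(a, b, wf, ws, r):
    """Closed-form symmetric equilibrium s0 = s1 of the contest."""
    return (a - wf / r) ** 2 / (18 * b * ws)


def profit_zero(s0, s1, a, b, wf, ws, r):
    """Zero Inc.'s profit with total output and price fixed at Cournot levels."""
    c = wf / r
    q_total = 2 * (a - c) / (3 * b)
    p = (a + 2 * c) / 3
    share = s0 / (s0 + s1)           # contest function: share of the market
    return (p - c) * share * q_total - ws * s0


# Illustrative parameter values (assumed for this check only).
a, b, wf, ws, r = 10.0, 1.0, 1.0, 2.0, 1.0
s_star = salesmen_per_firm(a, b, wf, ws, r)

# If One Corp hires s_star, Zero Inc. should do no better by deviating.
# Grid runs from 1% to 300% of s_star in 1% steps.
candidates = [s_star * k / 100 for k in range(1, 301)]
best = max(candidates, key=lambda s0: profit_zero(s0, s_star, a, b, wf, ws, r))
print(s_star, best)
```

A grid search is a crude tool, but it is enough to see that the best response to the equilibrium hiring level is that same level.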

Thus, as productivity gets higher and higher, we should expect employment of factory workers (“real jobs”) to decrease as employment of salesmen (“bullshit jobs”) increases, even though corporations are being completely rational and maximizing their profits.

Let’s put some numbers on this to make the example more concrete.

Suppose at the start that wf = 1, ws = 2, a = 10, b = 1, and r = 1. (Consider production in cars per day, wages as annual salaries, and money in units of $10,000.)

Then each corporation produces 3 cars per day:

q0 = q1 = (10 – 1/1)/(3*1) = 3

The price of a car is $40,000:

p = (10 + 2*1/1)/3 = 4

The number of factory workers employed is 6:

L = (q0 + q1)/r = (3+3)/1 = 6

The number of salesmen employed is 4.5 (maybe one is employed half-time):

s = s0 + s1 = (10 – 1/1)^2 / (9*1*2) = 81/18 = 4.5

So the majority of workers are factory workers.

But now suppose that productivity greatly increases, to r=10, with everything else the same:

The number of cars produced at each corporation per day increases to 3.3:

q0 = q1 = (10 – 1/10)/(3*1) = 3.3

The price of a car falls to $34,000:

p = (10 + 2*1/10)/3 = 3.4

The number of factory workers employed falls dramatically; there isn’t even one full-time job available, only part-time jobs:

L = (3.3+3.3)/10 = 0.66

But the number of salesmen increases to 5.445 (roughly one additional full-time worker):

s = (10 – 1/10)^2 / (9*1*2) = 98.01/18 = 5.445

Thus, while factory workers used to be 6/(6+4.5) = 57% of the workforce, now they are only 0.66/(0.66+5.445) = 10.8% of the workforce. And this happened because they got more productive!
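The arithmetic in this example is easy to reproduce. Here is a short Python sketch that recomputes output, price, both kinds of employment, and the factory share for r = 1 and r = 10, using the parameter values from the example. The function name `workforce` is just a label chosen for this illustration.

```python
def workforce(r, a=10.0, b=1.0, wf=1.0, ws=2.0):
    """Equilibrium quantities for a given productivity r.

    Returns (cars per firm, price, factory workers, salesmen, factory share),
    with both employment figures totaled across the two firms.
    """
    c = wf / r                            # marginal cost of one car
    q_each = (a - c) / (3 * b)            # cars produced by each firm
    price = (a + 2 * c) / 3
    factory = 2 * q_each / r              # total factory workers
    sales = (a - c) ** 2 / (9 * b * ws)   # total salesmen (s0 + s1)
    share = factory / (factory + sales)   # factory share of all workers
    return q_each, price, factory, sales, share


for r in (1, 10):
    q_each, price, factory, sales, share = workforce(r)
    print(f"r={r}: q={q_each:.2f} p={price:.2f} "
          f"factory={factory:.2f} sales={sales:.3f} share={share:.1%}")
```

Trying intermediate values of r shows the factory share falling steadily as productivity rises.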

This is of course a very simple model, but the basic pattern fits:

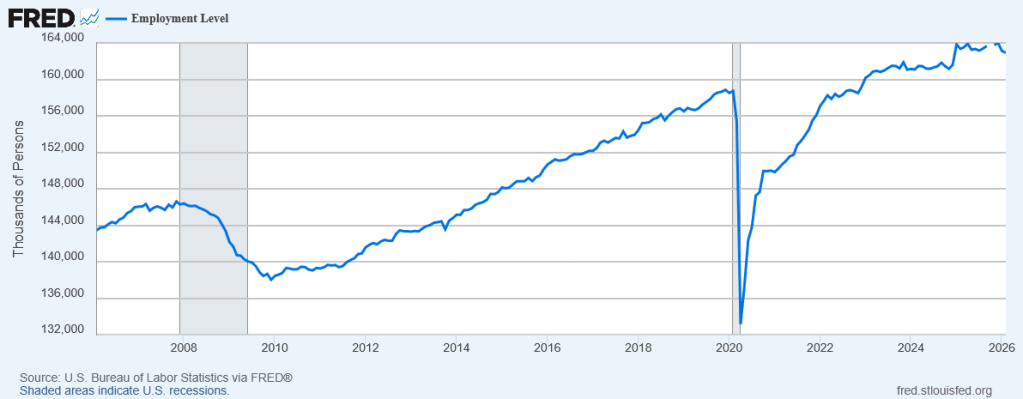

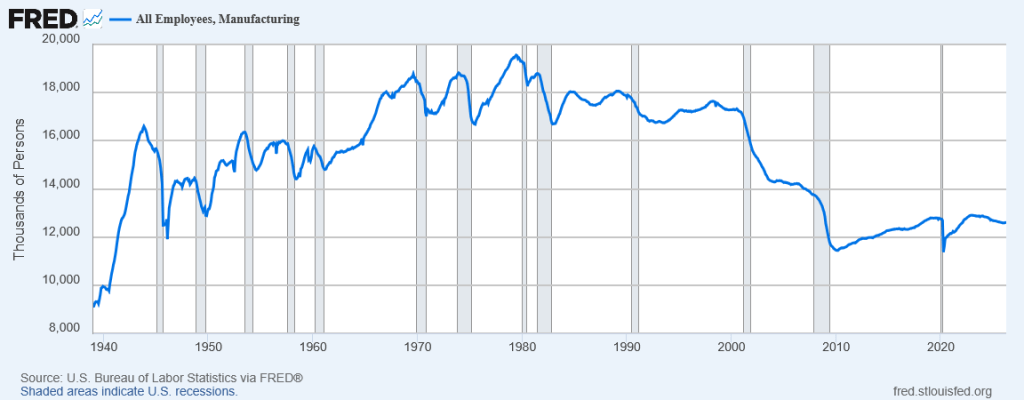

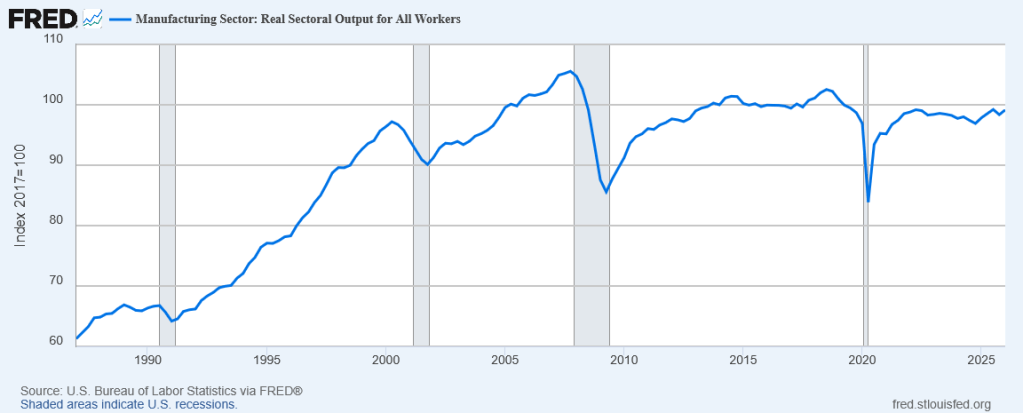

The share of employment that’s in manufacturing has greatly declined since 2000:

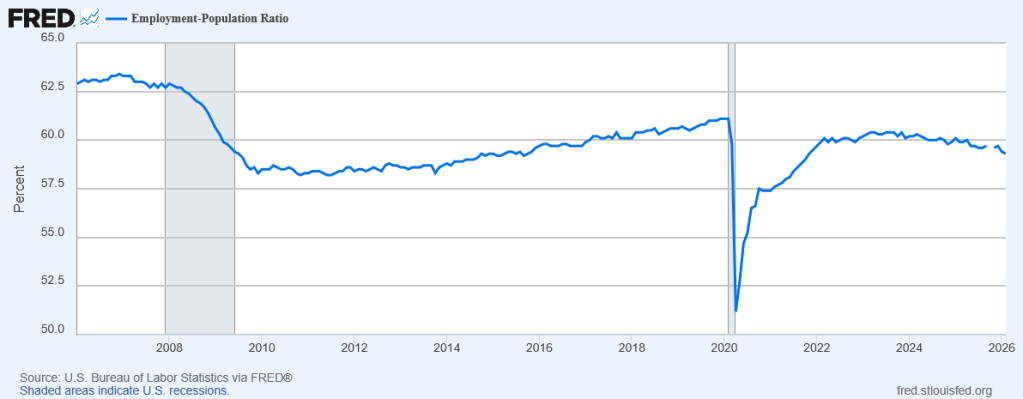

Yet manufacturing output hasn’t really changed:

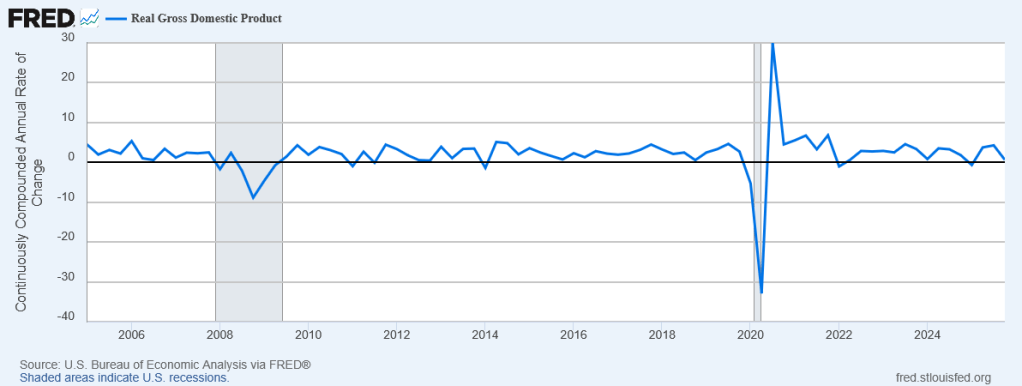

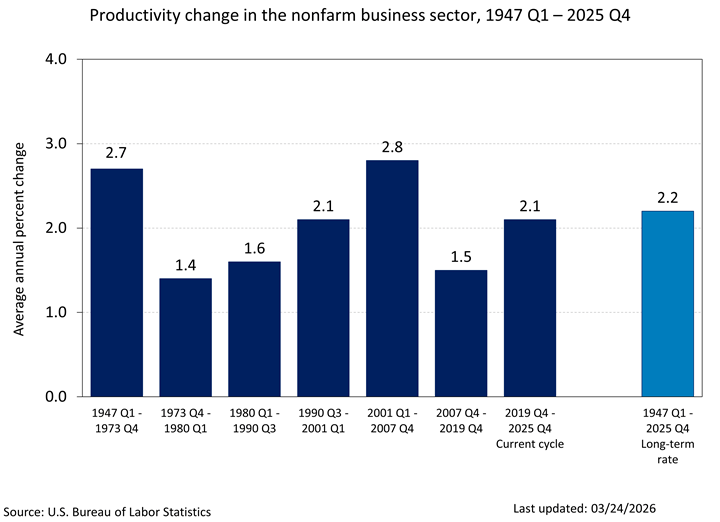

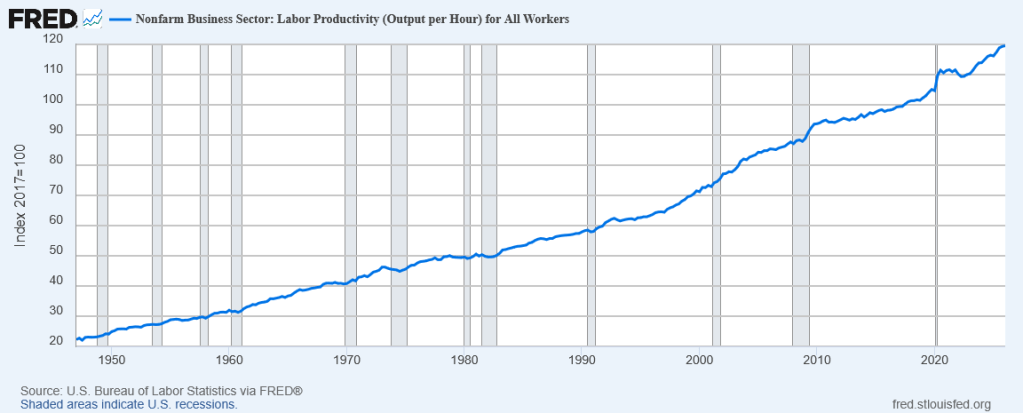

The reason is that labor productivity has continued to rise:

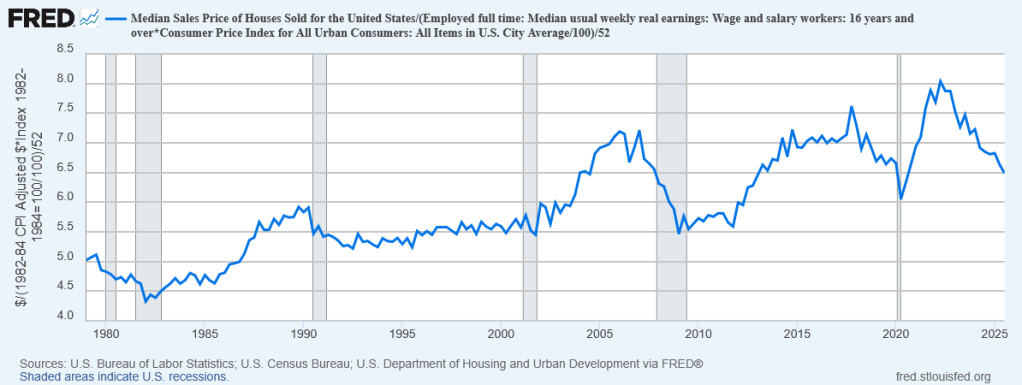

In my model I assumed that factory worker wages haven’t changed, and, well, they really haven’t kept pace with the large increases in productivity. In real terms, a factory worker today makes about 70% more than one in 1950, despite producing nearly six times as much output.

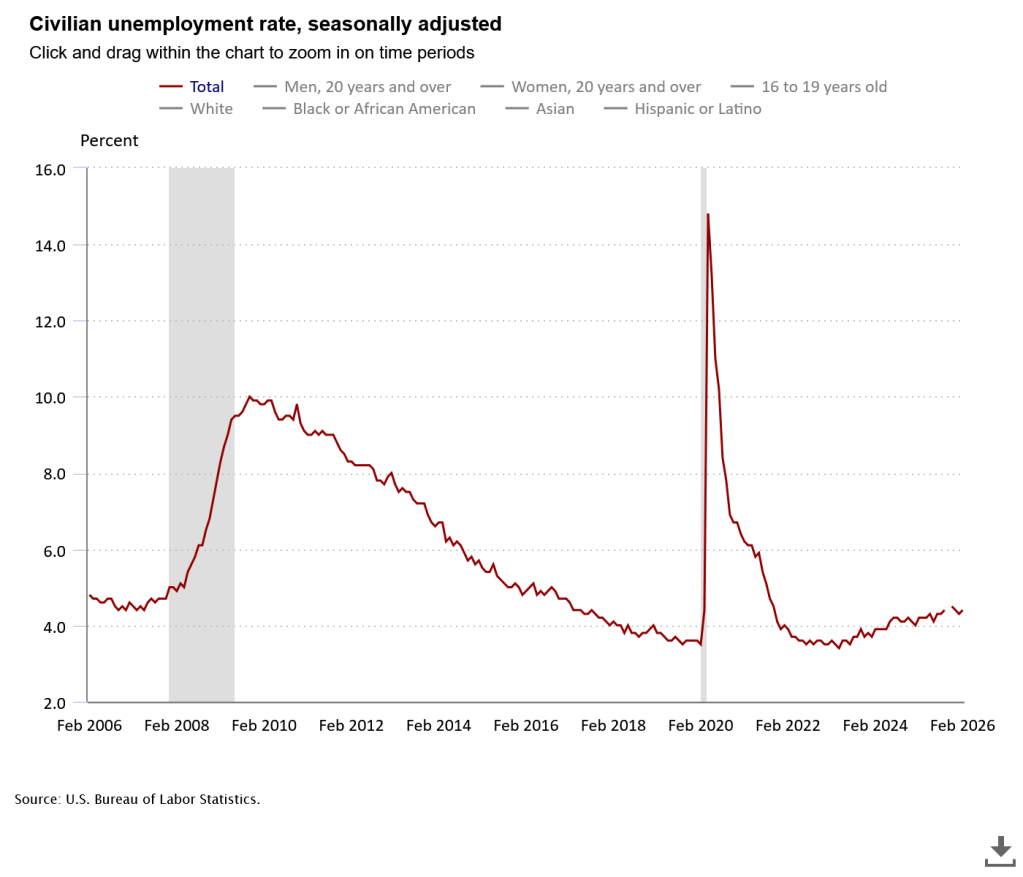

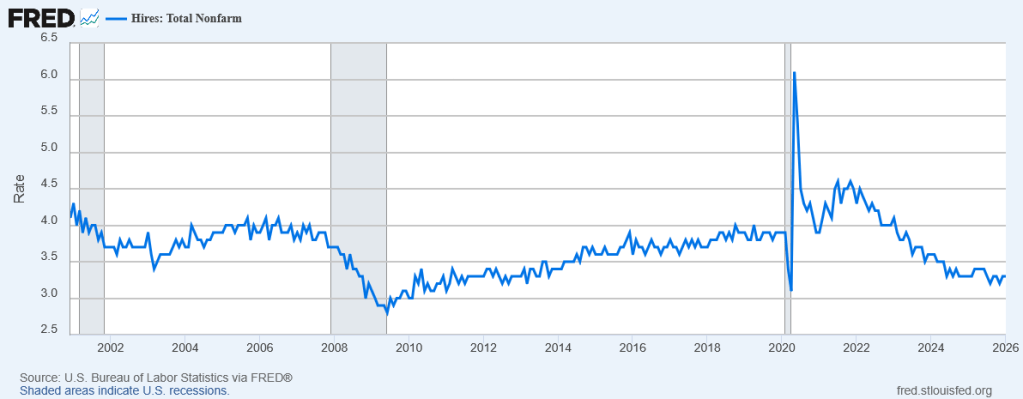

Producing the same amount of stuff now requires fewer people—so fewer people are employed in making stuff. They then end up employed somewhere else; and one of the more profitable ways to employ them these days is in rent-seeking activities like sales and advertising.

What should we do about this?

I already said what I wanted to do years ago: Tax advertising.