JDN 2457513—May 4, 2016

May the Fourth be with you.

In honor of International Star Wars Day, this post is going to be about Star Wars!

[I wanted to include some images from Star Wars, but here are the copyright issues that made me decide it ultimately wasn’t a good idea.]

But this won’t be as frivolous as it may sound. Star Wars has a lot of important lessons to teach us about economics and other social sciences, and its universal popularity gives us common ground to start with. I could use Zimbabwe and Botswana as examples, and sometimes I do; but a lot of people don’t know much about Zimbabwe and Botswana. A lot more people know about Tatooine and Naboo, so sometimes it’s better to use those instead.

In fact, this post is just a small sample of a much larger work to come. Several friends and I, all social scientists in different fields (I am of course the economist, and we also have a political scientist, a historian, and a psychologist), are writing a book that uses Star Wars as a jumping-off point to explain some real-world issues in social science.

So, my topic for today, which may end up forming the basis for a chapter of the book, is quite simple:

Why is Tatooine poor?

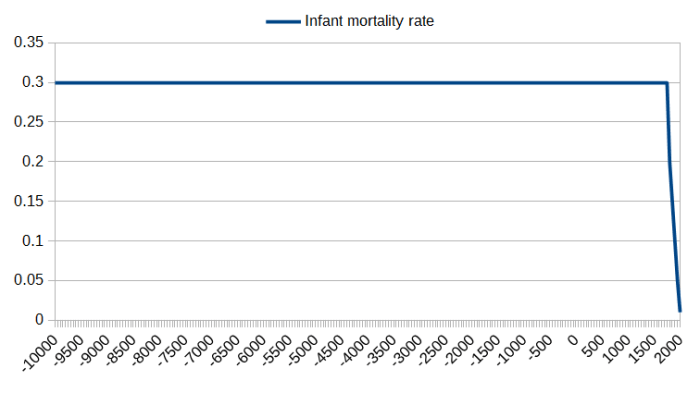

First, let me explain why this is such a mystery to begin with. We’re so accustomed to poverty being in the world that we expect to see it and think of it as normal, and for most of human history that was probably the correct attitude to have. Up until at least the Industrial Revolution, there simply was no way of raising the standard of living of most people much beyond bare subsistence. A wealthy few could sometimes live better, and most societies have had such an elite; but it was never more than about 1% of the population, and sometimes as little as 0.01%. The elite could have distributed wealth more evenly than they did, but there simply wasn’t that much to go around.

The “prosperous” “democracy” of Periclean Athens for example was really an aristocratic oligarchy, in which the top 1%—the ones who could read and write, and hence whose opinions we read—owned just about everything (including a fair number of the people—slavery). Their “democracy” was a voting system that only applied to a small portion of the population.

But now we live in a very different age, the Information Age, where we are absolutely basking in wealth thanks to enormous increases in productivity. Indeed, the standard of living of an Athenian philosopher was in many ways worse than that of a single mother on Welfare in the United States today; certainly the single mom has far better medicine, communication, and transportation than the philosopher, but she may even have better nutrition and higher education. Really the only things I can think of that the philosopher has more of are jewelry and real estate. The single mom also surely spends a lot more time doing housework, but a good chunk of her work is automated (dishwasher, microwave, washing machine), while the philosopher simply has slaves for that sort of thing. The smartphone in her pocket (81% of poor households in the US have a cellphone, and about half of these are smartphones) and the car in her driveway (75% of poor households in the US own at least one car) may be older models in disrepair, but they would still be unimaginable marvels to that ancient philosopher.

How is it, then, that we still have poverty in this world? Even if we argued that the poverty line in First World countries is too high because they have cars and smartphones (not an argument I agree with by the way—given our enormous productivity there’s no reason everyone shouldn’t have a car and a smartphone, and the main thing that poor people still can’t afford is housing), there are still over a billion people in the world today who live on less than $2 per day in purchasing-power-adjusted real income. That is poverty, no doubt about it. Indeed, it may in fact be a lower standard of living than most human beings had when we were hunter-gatherers. It may literally be a step downward from the Paleolithic.

Here is where Tatooine may give us some insights.

Productivity in the Star Wars universe is clearly enormous; indeed the proportional gap between Star Wars and us appears to be about the same as the proportional gap between us and hunter-gatherer times. The Death Star II had a diameter of 160 kilometers. Its cost is listed as “over 1 trillion credits”, but that’s almost meaningless because we have no idea what the exchange rate is or how the price of spacecraft varies relative to the price of other goods. (Spacecraft actually seem to be astonishingly cheap; in A New Hope a drink seems to cost a couple of credits, while 10,000 credits is almost enough to buy an inexpensive starship. Basically their prices seem to be similar to ours for most goods, but spaceships are so cheap they are priced like cars instead of like, well, spacecraft.)

So let’s look at it another way: How much metal would it take to build such a thing, and how much would that cost in today’s money?

We actually see quite a bit of the inner structure of the Death Star II in Return of the Jedi, so I can hazard a guess that about 5% of the volume of the space station is taken up by solid material. Who knows what it’s actually made out of, but for a ballpark figure let’s assume it’s high-grade steel. The volume of a 160 km diameter sphere is (4/3)*pi*r^3 = (4/3)*(3.1416)*(80,000)^3 = 2.14 quadrillion cubic meters. If 5% is filled with material, that’s about 107 trillion cubic meters. High-strength steel has a density of about 8000 kg/m^3, so that’s roughly 860 quadrillion kilograms of steel. A kilogram of high-grade steel costs about $2, so we’re looking at about $1.7 quintillion as the total price just for the raw material of the Death Star II. That’s $1,700,000,000,000,000,000. I’m not even including the labor (droid labor, that is) and transportation costs (oh, the transportation costs!), so this is a very conservative estimate.

To get a sense of how ludicrously much money this is, the population of Coruscant is said to be over 1 trillion people, which is just about plausible for a city that covers an entire planet. The population of the entire galaxy is supposed to be about 400 quadrillion.

Suppose that instead of building the Death Star II, Emperor Palpatine had decided to give a windfall to everyone on Coruscant. How much would he have given each person (in our money)? About $1.7 million.

Suppose instead he had offered the windfall to everyone in the galaxy? About $4.30 per person. That’s 50 million worlds with an average population of 8 billion each. Instead of building the Death Star II, Palpatine could have bought the whole galaxy a cheap lunch.
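For anyone who wants to redo this back-of-the-envelope arithmetic with their own guesses, here is a minimal Python sketch. It uses the standard sphere-volume formula, (4/3)*pi*r^3, and the inputs from the text (160 km diameter, 5% solid, 8000 kg/m^3, $2 per kilogram). The function and variable names are my own illustrative choices, and every input is a ballpark assumption rather than a canonical figure.

```python
import math


def death_star_steel_cost(diameter_m=160_000, fill_fraction=0.05,
                          density_kg_m3=8_000, price_per_kg=2.0):
    """Rough raw-material cost in dollars, assuming a steel-filled sphere."""
    radius = diameter_m / 2
    volume = (4 / 3) * math.pi * radius ** 3  # sphere volume in cubic meters
    steel_mass = volume * fill_fraction * density_kg_m3  # kilograms of steel
    return steel_mass * price_per_kg


cost = death_star_steel_cost()
coruscant_population = 1e12      # "over 1 trillion people"
galaxy_population = 50e6 * 8e9   # 50 million worlds x 8 billion each

print(f"Raw steel cost: ${cost:.2e}")
print(f"Windfall per person on Coruscant: ${cost / coruscant_population:,.0f}")
print(f"Windfall per person galaxy-wide: ${cost / galaxy_population:.2f}")
```

Changing `fill_fraction` or `price_per_kg` shows how sensitive the total is to those two guesses; the result scales linearly with each.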

Put another way, the cost I just estimated for the Death Star II is tens of thousands of times the current world GDP, and that is for the raw steel alone. A galaxy of 50 million worlds that each produced as much as Earth does today could cover the steel, but the steel is only the beginning: the superlaser, the hyperdrives, the droid labor, and the transportation all come on top. In order to build the Death Star II in secret, it must be a small portion of the budget, maybe 5% tops. In order for only a small number of systems to revolt, the tax rates can’t be more than say 50%, if that. My rough guess is that total economic output on the average world in the Empire must be more like 50 times what it is on Earth today, for a comparable average population. This puts their per-capita GDP somewhere around $500,000 per person per year.

So, economic output is extremely high in the Star Wars universe. Then why is Tatooine poor? If there’s enough output to make basically everyone a millionaire, why haven’t they?

In a word? Power.

Political power is of course very unequally distributed in the Star Wars universe, especially under the Empire but also even under the Old Republic and New Republic.

Core Worlds like Coruscant appear to have fairly strong centralized governments, and at least until the Emperor seized power and dissolved the Senate (as Tarkin announces in A New Hope) they also seemed to have fairly good representation in the Galactic Senate (though how you make a functioning Senate with millions of member worlds I have no idea—honestly, maybe they didn’t). As a result, Core Worlds are prosperous. Actually, even Naboo seems to be doing all right despite being in the Mid Rim, because of their strong and well-managed constitutional monarchy (“elected queen” is not as weird as it sounds—Sweden did that until the 16th century). They often talk about being a “democracy” even though they’re technically a constitutional monarchy—but the UK and Norway do the same thing with if anything less justification.

But Outer Rim Worlds like Tatooine seem to be out of reach of the central galactic government. (Oh, by the way, what hyperspace route drops you off at Tatooine if you’re going from Naboo to Coruscant? Did they take a wrong turn in addition to having engine trouble? “I knew we should have turned left at Christophsis!”) They even seem to be out of range of the monetary system (“Republic credits are no good out here,” said Watto in The Phantom Menace), which is pretty extreme. That doesn’t usually happen: if there is a global hegemon, usually their money is better than gold. (“Good as gold” isn’t strong enough; US money is better than gold, and that’s why people will accept negative real interest rates to hold onto it.) I guarantee you that if you want to buy something with a US $20 bill in Somalia or Zimbabwe, someone will take it. They might literally take it (i.e. steal it from you, and the government may not do anything to protect you), but it clearly will have value.

So, the Outer Rim worlds are extremely isolated from the central government, and therefore have their own local institutions that operate independently. Tatooine in particular appears to be controlled by the Hutts, who in turn seem to have a clan-based system of organized crime, similar to the Mafia. We never get much detail about the ins and outs of Hutt politics, but it seems pretty clear that Jabba is particularly powerful and may actually be the de facto monarch of a sizeable region or even the whole planet.

Jabba’s government is at the very far extreme of what Daron Acemoglu calls extractive regimes: systems of government that exist not to achieve overall prosperity or the public good, but to enrich a small elite at the expense of everyone else. (I’ve been reading his tome Why Nations Fail, and while I agree with its core message, honestly it’s not very well-written or well-argued.) The opposite is inclusive regimes, under which power is widely shared and government exists to advance the public good. Real-world systems are usually somewhere in between; the US is still largely inclusive, but we’ve been getting more extractive over the last few decades and that’s a big problem.

Jabba himself appears to be fantastically wealthy, although even his huge luxury hover-yacht (…thing) is extremely ugly and spartan inside. I infer that he could have made it look however he wanted, and simply has baffling tastes in decor. The fact that he seems to be attracted to female humanoids is already pretty baffling, given the obvious total biological incompatibility; so Jabba is, shall we say, a weird dude. Eccentricity is quite common among despots of extractive regimes, as evidenced by Muammar Qaddafi’s ostentatious outfits, Idi Amin’s love of oranges and Kentucky Fried Chicken, and Kim Jong-Un’s fear of barbers and bond with Dennis Rodman. Maybe we would all be this eccentric if we had unlimited power, but our need to fit in with the rest of society suppresses it.

It’s difficult to put a figure on just how wealthy Jabba is, but it isn’t implausible to say that he has a million times as much as the average person on Tatooine, just as Bill Gates has a million times as much as the average person in the US. Like Qaddafi, Jabba (before he was killed) probably feared that establishing more inclusive governance would only reduce his power and wealth and spread it to others, even if it did increase overall prosperity.

It’s not hard to make the figures work out that way. Suppose that for every 1% of the economy that is claimed by a single rentier despot, overall economic output drops by the same 1%. Then for concreteness, suppose that at optimal efficiency, the whole economy could produce $1 trillion. The amount of money that the despot can claim is the portion he tries to claim, p, times the total amount that the economy will produce, which is (1-p) trillion dollars. So the despot’s wealth is maximized when p(1-p) is maximized, which happens at p = 1/2; the despot would maximize his own wealth at $250 billion by claiming half of the economy, even though that also means that the economy produces half as much as it could. If he loosened his grip and claimed a smaller share, millions of his subjects would benefit, but he would lose more from his smaller share than he gained from the larger economy. (You can also adjust these figures so that the “optimal” amount for the despot to claim is larger or smaller than half, depending on how severely the rent-seeking disrupts overall productivity.)
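The toy model above is easy to play with in code. The sketch below is my own illustrative parameterization, not anything from Acemoglu: `disruption` is a hypothetical knob for how many percent of output are lost per percent the despot claims (1.0 reproduces the one-for-one case in the text), and `best_share` simply scans a grid of shares rather than solving anything analytically.

```python
def despot_take(p, potential_output=1e12, disruption=1.0):
    """Despot's income when claiming share p of a shrinking economy.

    Output is assumed to fall linearly: potential * (1 - disruption * p),
    floored at zero. This linear form is an assumption for illustration.
    """
    output = potential_output * max(0.0, 1.0 - disruption * p)
    return p * output


def best_share(disruption=1.0, steps=1000):
    """Grid-search the share p in [0, 1] that maximizes the despot's take."""
    shares = [i / steps for i in range(steps + 1)]
    return max(shares, key=lambda p: despot_take(p, disruption=disruption))


for d in (0.5, 1.0, 2.0):
    p = best_share(d)
    print(f"disruption={d}: best share={p:.3f}, "
          f"take=${despot_take(p, disruption=d):,.0f}")
```

With `disruption=1.0` the search lands on the half-share, $250 billion case described above. Raising `disruption` pushes the despot’s preferred share down, and lowering it pushes the share up, which is the adjustment the parenthetical refers to.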

It’s important to note that it is not simply geography (galactography?) that makes Tatooine poor. Their sparse, hot desert may be less productive agriculturally, but that doesn’t mean that Tatooine is doomed to poverty. Indeed, today many of the world’s richest countries (such as Qatar) are in deserts, because they produce huge quantities of oil.

I doubt that oil would actually be useful in the Old Republic or the Empire, but energy more generally seems like something you’d always need. Tatooine has enormous flat desert plains and two suns, meaning that its potential to produce solar energy has to be huge. They couldn’t export the energy directly of course, but they could do so indirectly—the cheaper energy could allow them to build huge factories and produce starships at a fraction of the cost that other planets do. They could then sell these starships as exports and import water from planets where it is abundant like Naboo, instead of trying to produce their own water locally through those silly (and surely inefficient) moisture vaporators.

But Jabba likely has fought any efforts to invest in starship production, because it would require a more educated workforce that’s more likely to unionize and less likely to obey his every command. He probably has established a high tariff on water imports (or even banned them outright), so that he can maintain control by rationing the water supply. (Actually one thing I would have liked to see in the movies was Jabba being periodically doused by slaves with vats of expensive imported water. It would not only show an ostentatious display of wealth for a desert culture, but also serve the much more mundane function of keeping his sensitive gastropod skin from dangerously drying out. That’s why salt kills slugs, after all.) He also probably suppressed any attempt to establish new industries of any kind on Tatooine, fearing that with new industry could come a new balance of power.

The weirdest part to me is that the Old Republic didn’t do something about it. The Empire, okay, sure; they don’t much care about humanitarian concerns, so as long as Tatooine is paying its Imperial taxes and staying out of the Emperor’s way maybe he leaves them alone. But surely the Republic would care that this whole planet of millions if not billions of people is being oppressed by the Hutts? And surely the Republic Navy is more than a match for whatever pitiful military forces Jabba and his friends can muster, precisely because they haven’t established themselves as the shipbuilding capital of the galaxy? So why hasn’t the Republic deployed a fleet to Tatooine to unseat the Hutts and establish democracy? (It could be over pretty fast; we’ve seen that one good turbolaser can destroy Jabba’s hover-yacht—and it looks big enough to target from orbit.)

But then, we come full circle, back to the real world: Why hasn’t the US done the same thing in Zimbabwe? Would it not actually work? We sort of tried it in Libya—a lot of people died, and results are still pending I guess. But doesn’t it seem like we should be doing something?