Apr 11 JDN 2459316

Many situations in the real world involve matching people to other people: Dating, job hunting, college admissions, publishing, organ donation.

Alvin Roth won his Nobel Prize for his work on matching algorithms. I have nothing to contribute to improving his algorithm; what baffles me is that we don’t use it more often. It would probably feel too impersonal to use it for dating; but why don’t we use it for job hunting or college admissions? (We do use it for organ donation, and that has saved thousands of lives.)

In this post I will be looking at matching in a somewhat different way. Using a simple model, I’m going to illustrate some of the reasons why it is so painful and frustrating to try to match and keep getting rejected.

Suppose we have two sets of people on either side of a matching market: X and Y. I’ll denote an arbitrarily chosen person in X as x, and an arbitrarily chosen person in Y as y. There’s no reason the two sets can’t have overlap or even be the same set, but making them different sets makes the model as general as possible.

Each person in X wants to match with a person in Y, and vice-versa. But they don’t merely want to accept any possible match; they have preferences over which matches would be better or worse.

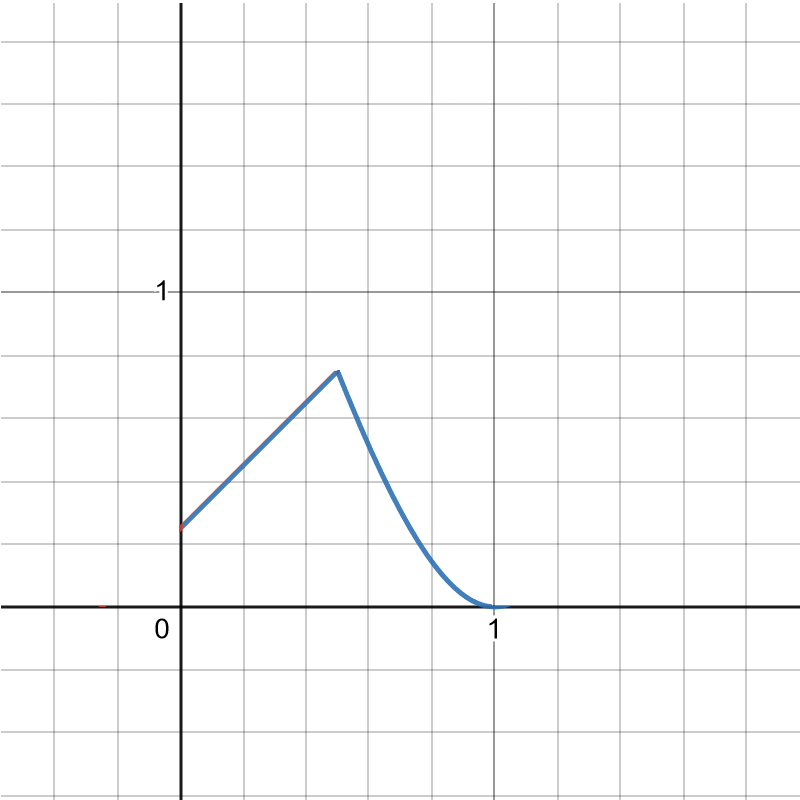

In general, we could say that people have some kind of utility function: Ux:Y->R and Uy:X->R that maps from possible match partners to the utility of such a match. But that gets very complicated very fast, because it raises the question of when you should keep searching, and when you should stop searching and accept what you have. (There’s a whole literature of search theory on this.)

For now let’s take the simplest possible case, and just say that there are some matches each person will accept, and some they will reject. This can be seen as a special case where the utility functions Ux and Uy always yield a result of 1 (accept) or 0 (reject).

This defines a set of acceptable partners for each person: A(x) is the set of partners x will accept, {y in Y|Ux(y) = 1}, and A(y) is the set of partners y will accept, {x in X|Uy(x) = 1}.

Then, the set of mutual matches that x can actually get is the set of ys that x wants, which also want x back: M(x) = {y in A(x)|x in A(y)}

Whereas, the set of mutual matches that y can actually get is the set of xs that y wants, which also want y back: M(y) = {x in A(y)|y in A(x)}

This relation is mutual by construction: If x is in M(y), then y is in M(x).

But this does not mean that the sets must be the same size.

For instance, suppose that there are three people in X, x1, x2, x3, and three people in Y, y1, y2, y3.

Let’s say that the acceptable matches are as follows:

A(x1) = {y1, y2, y3}

A(x2) = {y2, y3}

A(x3) = {y2, y3}

A(y1) = {x1,x2,x3}

A(y2) = {x1,x2}

A(y3) = {x1}

This results in the following mutual matches:

M(x1) = {y1, y2, y3}

M(y1) = {x1}

M(x2) = {y2}

M(y2) = {x1, x2}

M(x3) = {}

M(y3) = {x1}
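
The mutual match sets above can be checked mechanically. Here is a minimal Python sketch, assuming each person's acceptable partners are stored as a set in a dictionary; the names `A`, `M`, and `mutual_matches` are my own choices for illustration.

```python
# Acceptable-partner sets A(.) from the example above.
A = {
    "x1": {"y1", "y2", "y3"},
    "x2": {"y2", "y3"},
    "x3": {"y2", "y3"},
    "y1": {"x1", "x2", "x3"},
    "y2": {"x1", "x2"},
    "y3": {"x1"},
}


def mutual_matches(person, acceptable):
    """M(person): partners that person accepts and who accept person back."""
    return {p for p in acceptable[person] if person in acceptable[p]}


# Compute M(.) for everyone and print it in a stable order.
M = {person: mutual_matches(person, A) for person in A}
for person in sorted(M):
    print(person, sorted(M[person]))
```

Running it should reproduce the six sets listed above, including the empty set for x3.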

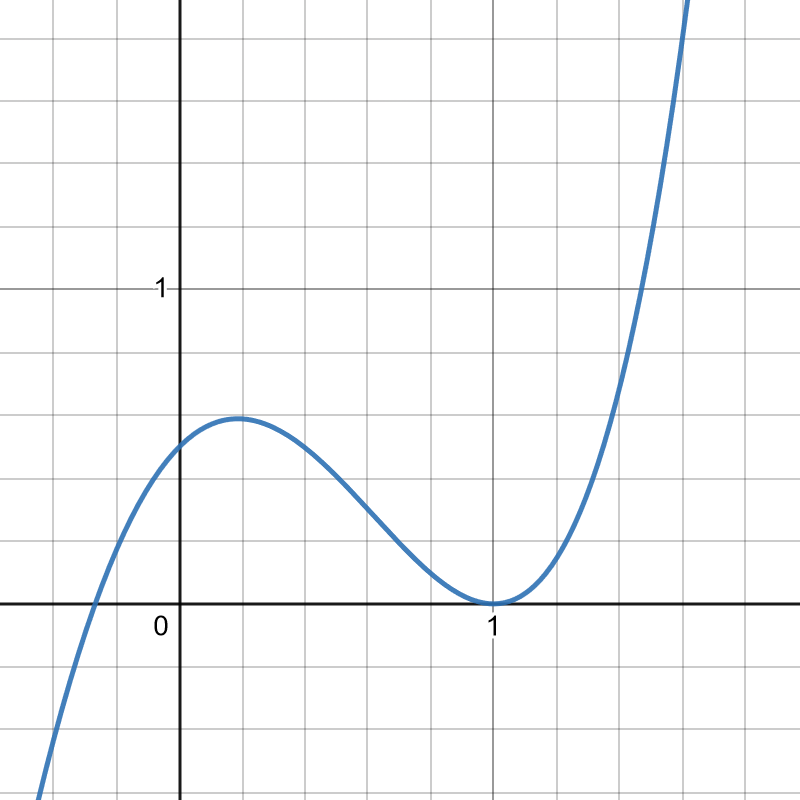

x1 can match with whoever they like; everyone wants to match with them. x2 can match with y2. But x3, despite having the same preferences as x2, and being desired by y1, can’t find any mutual matches at all, because the one person who wants them is a person they don’t want.

y1 can only match with x1, but the same is true of y3. So they will be fighting over x1. As long as y2 doesn’t also try to fight over x1, x2 and y2 will be happy together. Yet x3 will remain alone.

Note that the number of mutual matches has no obvious relation with the number of individually acceptable partners. x2 and x3 had the same number of acceptable partners, but x2 found a mutual match and x3 didn’t. y1 was willing to accept more potential partners than y3, but got the same lone mutual match in the end. y3 was only willing to accept one partner, but will get a shot at x1, the one that everyone wants.

One thing is true: Adding another acceptable partner will never reduce your number of mutual matches, and removing one will never increase it. But often changing your acceptable partners doesn’t have any effect on your mutual matches at all.
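
That monotonicity claim is easy to verify by brute force on the six-person example. The sketch below tries every single addition and removal and checks that mutual match sets only ever grow or shrink in the expected direction. The helper `changed` is hypothetical, and the code assumes names start with "x" or "y" to tell the two sides apart.

```python
# Acceptable-partner sets A(.) from the example above.
A = {
    "x1": {"y1", "y2", "y3"},
    "x2": {"y2", "y3"},
    "x3": {"y2", "y3"},
    "y1": {"x1", "x2", "x3"},
    "y2": {"x1", "x2"},
    "y3": {"x1"},
}


def mutual_matches(person, acceptable):
    """M(person): partners that person accepts and who accept person back."""
    return {p for p in acceptable[person] if person in acceptable[p]}


def changed(acceptable, person, partner, add):
    """Copy of the sets with partner added to (or removed from) A(person)."""
    updated = {k: set(v) for k, v in acceptable.items()}
    if add:
        updated[person].add(partner)
    else:
        updated[person].discard(partner)
    return updated


# Try every single addition and removal for every person.
for person in A:
    other_side = [p for p in A if p[0] != person[0]]  # relies on x/y naming
    before = mutual_matches(person, A)
    for partner in other_side:
        grown = mutual_matches(person, changed(A, person, partner, add=True))
        shrunk = mutual_matches(person, changed(A, person, partner, add=False))
        assert before <= grown   # adding never loses a mutual match
        assert shrunk <= before  # removing never gains one

# x2 drops y3, who never accepted x2 anyway: M(x2) is unchanged.
no_effect = mutual_matches("x2", changed(A, "x2", "y3", add=False))
print(sorted(no_effect))
```

The last lines show the "no effect at all" case: x2 can stop accepting y3 without losing anything, because y3 was never going to say yes.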

Now let’s consider what it must feel like to be x1 versus x3.

For x1, the world is their oyster; they can choose whoever they want and be guaranteed to get a match. Life is easy and simple for them; all they have to do is decide who they want most and that will be it.

For x3, life is an endless string of rejection and despair. Every time they try to reach out to suggest a match with someone, they are rebuffed. They feel hopeless and alone. They feel as though no one would ever actually want them—even though in fact there is someone who wants them, it’s just not someone they were willing to consider.

This is of course a very simple and small-scale model; there are only six people in it, and they each only say yes or no. Yet already I’ve got x1 who feels like a rock star and x3 who feels utterly hopeless if not worthless.

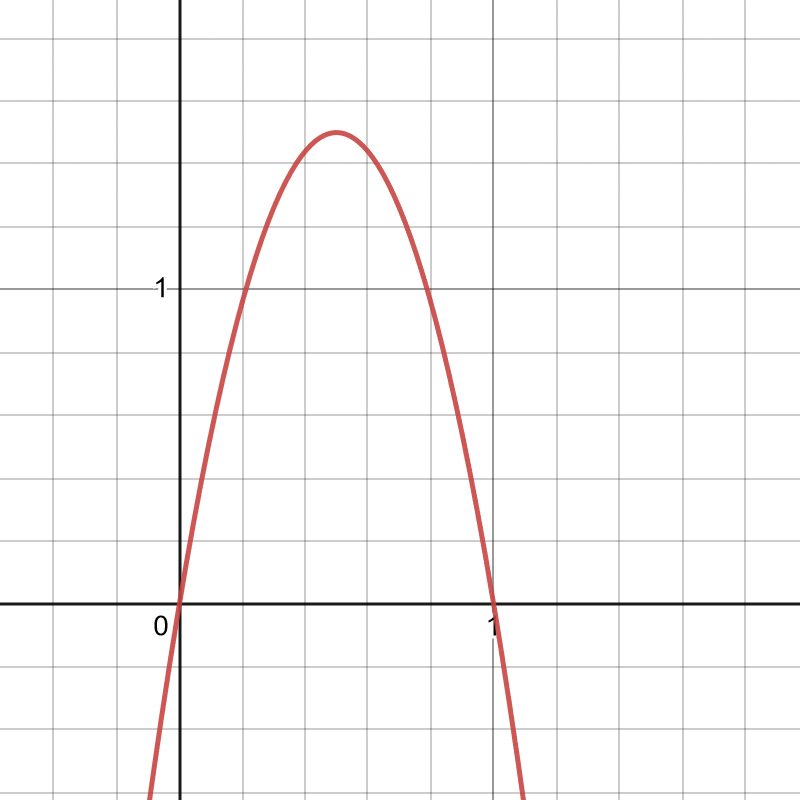

In the real world, there are so many more people in the system that the odds that no one is in your mutual match set are negligible. Almost everyone has someone they can match with. But some people have many more matches than others, and that makes life much easier for the ones with many matches and much harder for the ones with fewer.

Moreover, search costs then become a major problem: Even knowing that in all probability there is a match for you somewhere out there, how do you actually find that person? (And that’s not even getting into the difficulty of recognizing a good match when you see it; in this simple model you know immediately, but in the real world it can take a remarkably long time.)

If we think of the acceptable partner sets as preferences, they may not be within anyone’s control; you want what you want. But if we instead characterize them as decisions, the results are quite different—and I think it’s easy to see them, if nothing else, as the decision of how high to set your standards.

This raises a question: When we are searching and not getting matches, should we lower our standards and add more people to our list of acceptable partners?

This simple model would seem to say that we should always do that—there’s no downside, since the worst that can happen is nothing. And x3 for instance would be much happier if they were willing to lower their standards and accept y1. (Indeed, if they did so, there would be a way to pair everyone off happily: x1 with y3, x2 with y2, and x3 with y1.)
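
To check the pairing in that parenthetical, here is a small brute-force sketch that lists every way to pair off all six people using only mutual matches. It is an illustration under my own naming (`perfect_matchings`, `relaxed`), not Roth's algorithm, and trying every permutation is only sensible for tiny examples like this one.

```python
from itertools import permutations

# Acceptable-partner sets A(.) from the example above.
A = {
    "x1": {"y1", "y2", "y3"},
    "x2": {"y2", "y3"},
    "x3": {"y2", "y3"},
    "y1": {"x1", "x2", "x3"},
    "y2": {"x1", "x2"},
    "y3": {"x1"},
}
X = ["x1", "x2", "x3"]
Y = ["y1", "y2", "y3"]


def perfect_matchings(acceptable, xs, ys):
    """All one-to-one pairings in which every pair is a mutual match."""
    results = []
    for perm in permutations(ys):
        pairs = list(zip(xs, perm))
        if all(y in acceptable[x] and x in acceptable[y] for x, y in pairs):
            results.append(pairs)
    return results


# With the original standards, nobody can pair everyone off.
print(perfect_matchings(A, X, Y))

# Now x3 lowers their standards and also accepts y1.
relaxed = {k: set(v) for k, v in A.items()}
relaxed["x3"].add("y1")
print(perfect_matchings(relaxed, X, Y))
```

With the original sets the list comes back empty; once x3 accepts y1, the only pairing that works is the one described above.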

But in the real world, searching is often costly: There is at least the time and effort involved, and often a literal application or submission fee; but perhaps worst of all is the crushing pain of rejection. Under those circumstances, adding another acceptable partner who is not a mutual match will actually make you worse off.
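
One way to make that concrete is a toy payoff calculation. Everything numeric here is an assumption of mine rather than part of the model above: a match is worth 10 units, each application costs 1 unit, a person applies to everyone in their acceptable set, and having any mutual match counts as landing a match (competition is ignored).

```python
# Acceptable-partner sets A(.) from the example above.
A = {
    "x1": {"y1", "y2", "y3"},
    "x2": {"y2", "y3"},
    "x3": {"y2", "y3"},
    "y1": {"x1", "x2", "x3"},
    "y2": {"x1", "x2"},
    "y3": {"x1"},
}

MATCH_VALUE = 10  # hypothetical value of landing a match
COST = 1          # hypothetical cost per application (fee, effort, rejection)


def mutual_matches(person, acceptable):
    """M(person): partners that person accepts and who accept person back."""
    return {p for p in acceptable[person] if person in acceptable[p]}


def net_payoff(person, acceptable, match_value=MATCH_VALUE, cost=COST):
    """Match value (if any mutual match exists) minus the cost of every application."""
    applications = len(acceptable[person])
    matched = bool(mutual_matches(person, acceptable))
    return (match_value if matched else 0) - cost * applications


# x2 applying only to y2, versus also applying to y3 (not a mutual match).
narrow = {**A, "x2": {"y2"}}
print(net_payoff("x2", narrow))  # one application, one match
print(net_payoff("x2", A))       # same match, one extra wasted application
print(net_payoff("x3", A))       # two applications, no match
```

Under these assumptions, x2 does strictly worse by also applying to y3, and x3 ends up with a negative payoff: all cost and no match.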

That’s pretty much what the job market has been for me for the last six months. I started out with the really good matches: GiveWell, the Oxford Global Priorities Institute, Purdue, Wesleyan, Eastern Michigan University. And after investing considerable effort into getting those applications right, I made it as far as an interview at all those places—but no further.

So I extended my search, applying to dozens more places. I’ve now applied to over 100 positions. I knew that most of them were not good matches, because there simply weren’t that many good matches to be found. And the result of all those 100 applications has been precisely 0 interviews. Lowering my standards accomplished absolutely nothing. I knew going in that these places were not a good fit for me—and it looks like they all agreed.

It’s possible that lowering my standards in some different way might have worked, but even this is not clear: I’ve already been willing to accept much lower salaries than a PhD in economics ought to command, and included positions in my search that are only for a year or two with no job security, and applied to far-flung locales across the globe that I don’t know if I’d really be willing to move to.

Honestly, at this point I’ve only been using the following criteria: (1) at least vaguely related to my field (otherwise they wouldn’t want me anyway), (2) a higher salary than I currently get as a grad student (otherwise why bother?), (3) a geographic location where homosexuality is not literally illegal and an institution that doesn’t actively discriminate against LGBT employees (this rules out more than you’d think; there are at least three good postings I didn’t apply to on these grounds), (4) in a region that speaks a language I have at least some basic knowledge of (i.e. preferably English, but also allowing Spanish, French, German, or Japanese), (5) working conditions that don’t involve working more than 40 hours per week (which has severely detrimental health effects, even ignoring my disability which would compound the effects), and (6) not working for a company that is implicated in large-scale criminal activity (as a remarkable number of major banks have in fact been implicated). I don’t feel like these are unreasonably high standards, and yet so far I have failed to land a match.

What’s more, the entire process has been emotionally devastating. While others seem to be suffering from pandemic burnout, I don’t think I’ve made it that far; I think I’d be just as burnt out even if there were no pandemic, simply from how brutal the job market has been.

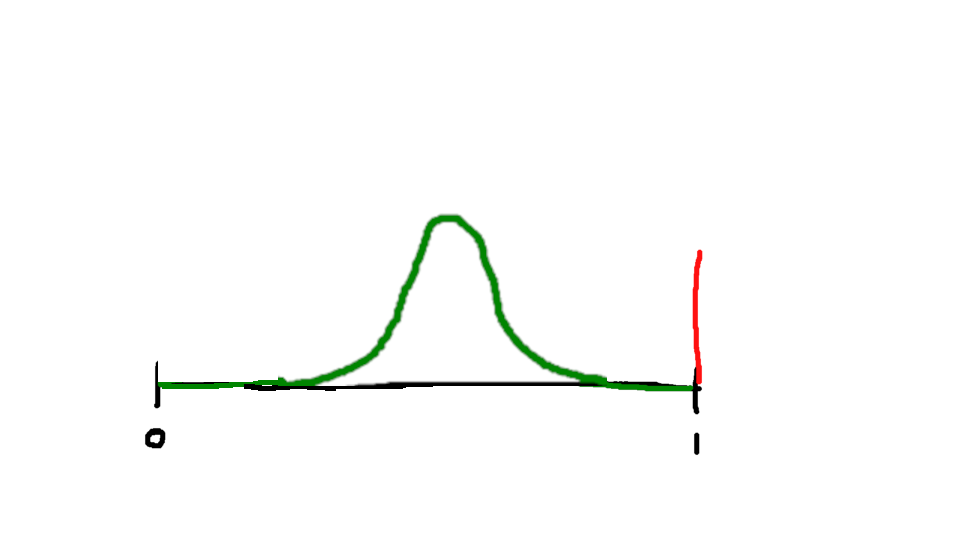

Why does rejection hurt so much? Why does being turned down for a date, or a job, or a publication feel so utterly soul-crushing? When I started putting together this model I had hoped that thinking of it in terms of match-sets might actually help reduce that feeling, but instead what happened is that it offered me a way of partly explaining that feeling (much as I did in my post on Bayesian Impostor Syndrome).

What is the feeling of rejection? It is the feeling of expending search effort to find someone in your acceptable partner set—and then learning that you were not in their acceptable partner set, and thus you have failed to make a mutual match.

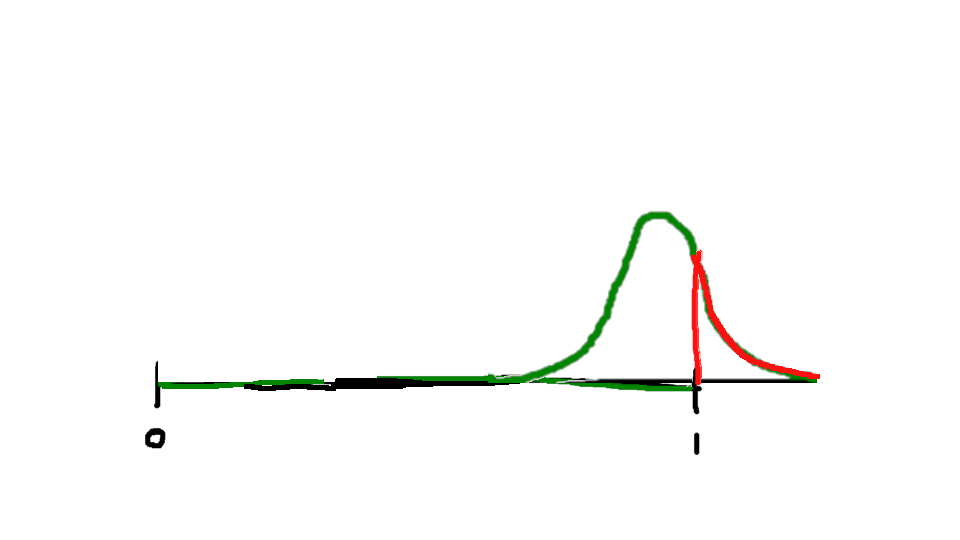

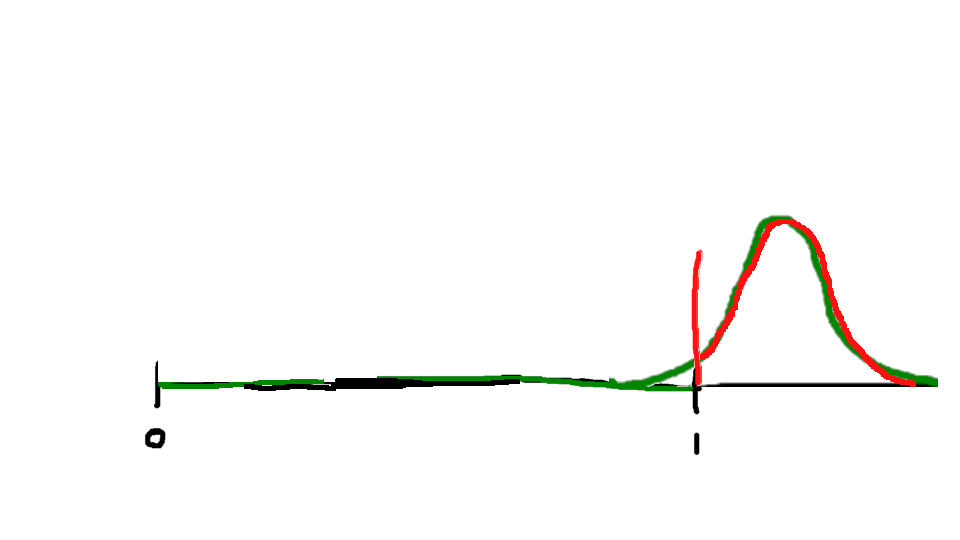

I said earlier that x1 feels like a rock star and x3 feels hopeless. This is because being present in someone else’s acceptable partner set is a sign of status—the more people who consider you an acceptable partner, the more you are “worth” in some sense. And when it’s something as important as a romantic partner or a career, that sense of “worth” is difficult to circumscribe into a particular domain; it begins to bleed outward into a sense of your overall self-worth as a human being.

Being wanted by someone you don’t want makes you feel superior, like they are “beneath” you; but wanting someone who doesn’t want you makes you feel inferior, like they are “above” you. And when you are applying for jobs in a market with a Beveridge Curve as skewed as ours, or trying to get a paper or a book published in a world flooded with submissions, you end up with a lot more cases of feeling inferior than cases of feeling superior. In fact, I even applied for a few jobs that I felt were “beneath” my level—they didn’t take me either, perhaps because they felt I was overqualified.

In such circumstances, it’s hard not to feel like I am the problem, like there is something wrong with me. Sometimes I can convince myself that I’m not doing anything wrong and the market is just exceptionally brutal this year. But I really have no clear way of distinguishing that hypothesis from the much darker possibility that I have done something terribly wrong that I cannot correct and will continue in this miserable and soul-crushing fruitless search for months or even years to come. Indeed, I’m not even sure it’s actually any better to know that you did everything right and still failed; that just makes you helpless instead of defective. It might be good for my self-worth to know that I did everything right; but it wouldn’t change the fact that I’m in a miserable situation I can’t get out of. If I knew I were doing something wrong, maybe I could actually fix that mistake in the future and get a better outcome.

As it is, I guess all I can do is wait for more opportunities and keep trying.