Jan 21 JDN 2460332

When this post goes live, I will have just passed my 36th birthday. (That means I’ve lived for about 1.1 billion seconds, so in order to be as rich as Elon Musk, I’d need to have made, on average, since birth, $200 per second—$720,000 per hour.)

I certainly feel a lot better turning 36 than I did 35. I don’t have any particular additional accomplishments to point to, but my life has already changed quite a bit, in just that one year: Most importantly, I quit my job at the University of Edinburgh, and I am currently in the process of moving out of the UK and back home to Michigan. (We moved the cat over Christmas, and the movers have already come and taken most of our things away; it’s really just us and our luggage now.)

But I still don’t know how to field the question that people have been asking me since I announced my decision to do this months ago:

“What’s next?”

I’m at a crossroads now, trying to determine which path to take. Actually maybe it’s more like a roundabout; it has a whole bunch of different paths, surely not just two or three. The road straight ahead is labeled “stay in academia”; the others at the roundabout are things like “freelance writing”, “software programming”, “consulting”, and “tabletop game publishing”. There’s one well-paved and superficially enticing road that I’m fairly sure I don’t want to take, labeled “corporate finance”.

Right now, I’m just kind of driving around in circles.

Most people don’t seem to quit their jobs without a clear plan for where they will go next. Often they wait until they have another offer in hand that they intend to take. But when I realized just how miserable that job was making me, I made the—perhaps bold, perhaps courageous, perhaps foolish—decision to get out as soon as I possibly could.

It’s still hard for me to fully understand why working at Edinburgh made me so miserable. Many features of an academic career are very appealing to me. I love teaching, I like doing research; I like the relatively flexible hours (and kinda need them, because of my migraines).

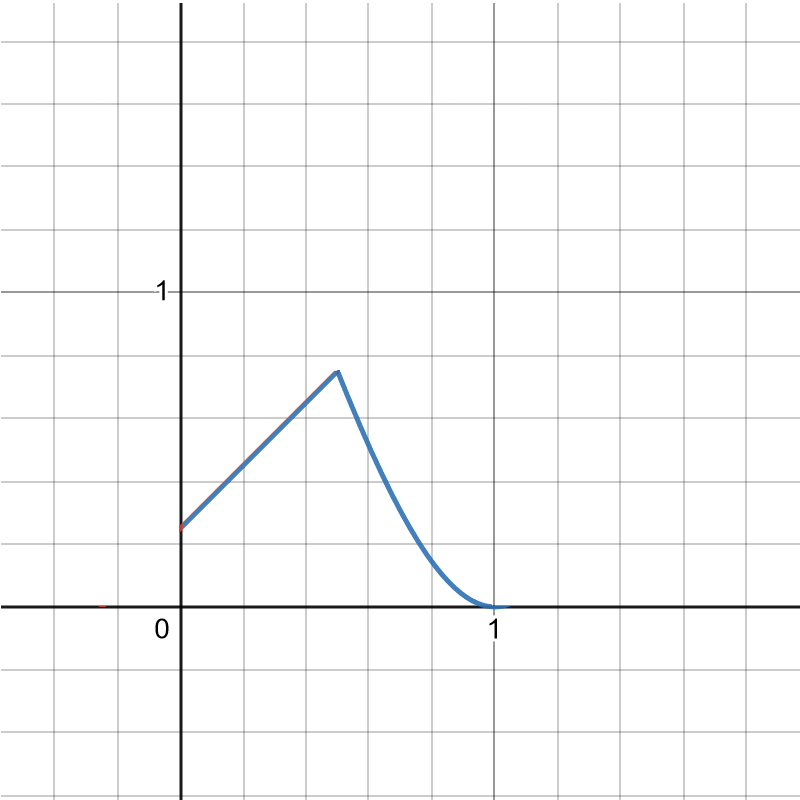

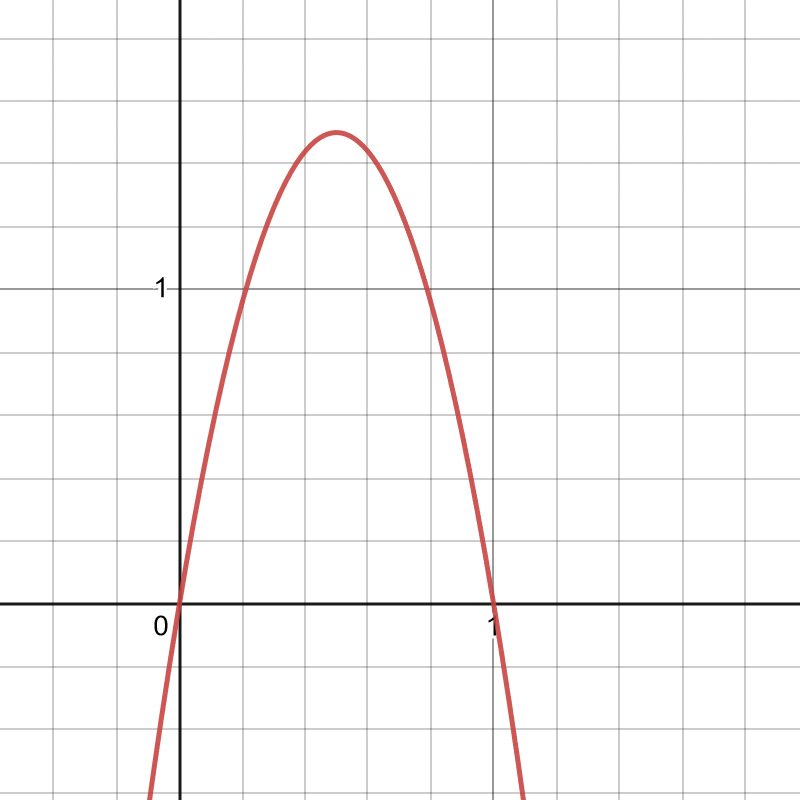

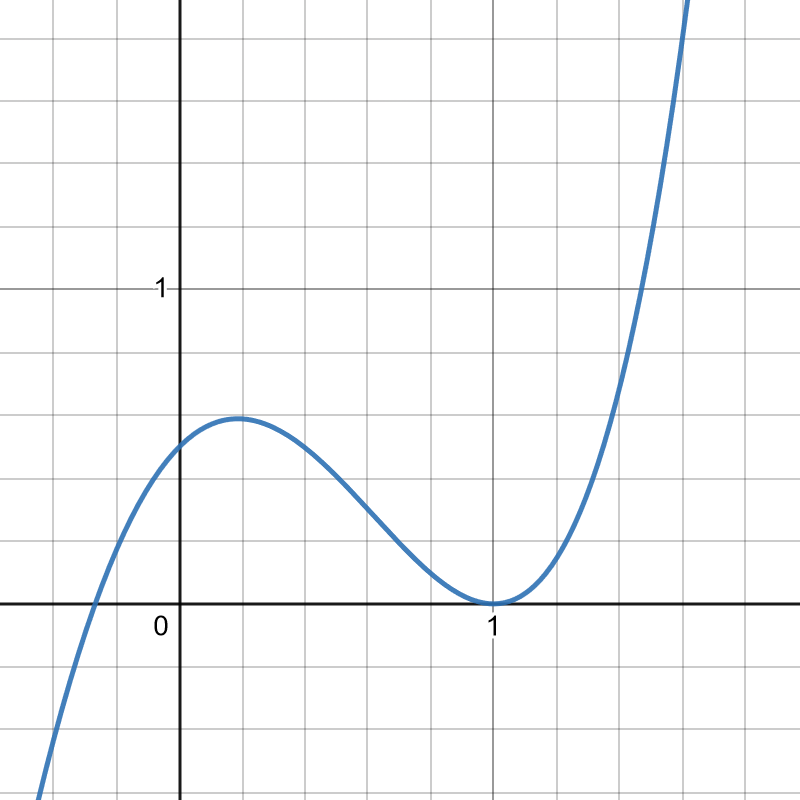

I often construct formal decision models to help me make big choices—generally it’s a linear model, where I simply rate each option by its relative quality in a particular dimension, then try different weightings of all the different dimensions. I’ve used this successfully to pick out cars, laptops, even universities. I’m not entrusting my decisions to an algorithm; I often find myself tweaking the parameters to try to get a particular result—but that in itself tells me what I really want, deep down. (Don’t do that in research—people do, and it’s bad—but if the goal is to make yourself happy, your gut feelings are important too.)
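For the curious, here is a minimal sketch of what a linear decision model of this kind could look like. Every option name, dimension, rating, and weight below is a made-up placeholder for illustration, not a value from my actual models; the point is only the mechanics of scoring each option as a weighted sum and then re-ranking under different weightings.

```python
# Hypothetical ratings (0-10) of each option on each dimension.
# All numbers are invented for illustration only.
RATINGS = {
    "university teaching": {"enjoyment": 9, "income": 5, "flexibility": 7},
    "freelance writing": {"enjoyment": 9, "income": 3, "flexibility": 10},
    "corporate finance": {"enjoyment": 2, "income": 10, "flexibility": 3},
}

# Hypothetical weightings to compare; larger weight = dimension matters more.
WEIGHTINGS = {
    "balanced": {"enjoyment": 1, "income": 1, "flexibility": 1},
    "income-heavy": {"enjoyment": 1, "income": 4, "flexibility": 1},
}


def score(ratings, weights):
    """Weighted average of one option's ratings across all dimensions."""
    total_weight = sum(weights.values())
    return sum(weights[d] * ratings[d] for d in weights) / total_weight


def rank(options, weights):
    """Option names sorted from highest to lowest weighted score."""
    return sorted(
        options,
        key=lambda name: score(options[name], weights),
        reverse=True,
    )


if __name__ == "__main__":
    # Try each weighting and see how the ordering shifts.
    for label, weights in WEIGHTINGS.items():
        print(label, rank(RATINGS, weights))
```

With these placeholder numbers, the ordering flips depending on how heavily income is weighted, which is exactly the kind of sensitivity that tweaking the weights is meant to reveal.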

My decision models consistently rank university teaching quite high. It generally only gets beaten by freelance writing—which means that maybe I should give freelance writing another try after all.

And yet, my actual experience at Edinburgh was miserable.

What went wrong?

Well, first of all, I should acknowledge that when I separate out the job “university professor” into teaching and research as separate jobs in my decision model, and include all that goes into both jobs—not just the actual teaching, but the grading and administrative tasks; not just doing the research, but also trying to fund and publish it—they both drop lower on the list, and research drops down a lot.

Also, I would rate them both even lower now, having more direct experience of just how awful the exam-grading, grant-writing and journal-submitting can be.

Designing and then grading an exam was tremendously stressful: I knew that many of my students’ futures rested on how they did on exams like this (especially in the UK system, where exams are absurdly overweighted! In most of my classes, the final exam was at least 60% of the grade!). I struggled mightily to make the exam as fair as I could, all the while knowing that it would never really feel fair and I didn’t even have the time to make it the best it could be. You really can’t assess how well someone understands an entire subject in a multiple-choice exam designed to take 90 minutes. It’s impossible.

The worst part of research for me was the rejection.

I mentioned in a previous post how I am hypersensitive to rejection; applying for grants and submitting to journals clearly produced the worst feelings of rejection I’ve felt in any job. It felt like they were evaluating not only the value of my work, but my worth as a scientist. Failure felt like being told that my entire career was a waste of time.

It was even worse than the feeling of rejection in freelance writing (which is one of the few things that my model tells me is bad about freelancing as a career for me, along with relatively low and uncertain income). I think the difference is that a book publisher is saying “We don’t think we can sell it.”, with ‘we’ and ‘sell’ being vital. They aren’t saying “This is a bad book; it shouldn’t exist; writing it was a waste of time.”; they’re just saying “It’s not a subgenre we generally work with.” or “We don’t think it’s what the market wants right now.” or even “I personally don’t care for it.”. They acknowledge their own subjective perspective and the fact that it’s ultimately dependent on forecasting the whims of an extremely fickle marketplace. They aren’t really judging my book, and they certainly aren’t judging me.

But in research publishing, it was different. Yes, it’s all in very polite language, thoroughly spiced with sophisticated jargon (though some reviewers are more tactful than others). But when your grant application gets rejected by a funding agency or your paper gets rejected by a journal, the sense really basically is “This project is not worth doing.”; “This isn’t good science.”; “It was/would be a waste of time and money.”; “This (theory or experiment you’ve spent years working on) isn’t interesting or important.” Nobody ever came out and said those things, nor did they come out and say “You’re a bad economist and you should feel bad.”; but honestly a couple of the reviews did kinda read to me like they wanted to say that. They thought that the whole idea that human beings care about each other is fundamentally stupid and naive and not worth talking about, much less running experiments on.

It isn’t so much that I believed them that my work was bad science. I did make some mistakes along the way (but nothing vital; I’ve seen far worse errors by Nobel Laureates). I didn’t have very large samples (because every person I add to the experiment is money I have to pay, and therefore funding I have to come up with). But overall I do believe that my work is sufficiently rigorous to be worth publishing in scientific journals.

It’s more that I came to feel that my work is considered bad, that the kind of work I wanted to do would forever be an uphill battle against an implacable enemy. I already felt exhausted by that battle, and it had only barely begun. I had thought that behavioral economics was a more successful paradigm by now, that it had largely displaced the neoclassical assumptions that came before it; but I was wrong. Except specifically in journals dedicated to experimental and behavioral economics (of which the prestigious ones are few; I quickly exhausted them), it really felt like a lot of the feedback I was getting amounted to, “I refuse to believe your paradigm.”.

Part of the problem, also, was that there simply aren’t that many prestigious journals, and they don’t take that many papers. The top 5 journals—which, for whatever reason, command far more respect than any other journals among economists—each accept only about 5-10% of their submissions. Surely more than that are worth publishing; and, to be fair, much of what they reject probably gets published later somewhere else. But it makes a shockingly large difference in your career how many “top 5s” you have; other publications almost don’t matter at all. So once you don’t get into any of those (which of course I didn’t), should you even bother trying to publish somewhere else?

And what else almost doesn’t matter? Your teaching. As long as you show up to class and grade your exams on time (and don’t, like, break the law or something), research universities basically don’t seem to care how good a teacher you are. That was certainly my experience at Edinburgh. (Honestly even their responses to professors sexually abusing their students are pretty unimpressive.)

Some of the other faculty cared, I could tell; there were even some attempts to build a community of colleagues to support each other in improving teaching. But the administration seemed almost actively opposed to it; they didn’t offer any funding to support the program—they wouldn’t even buy us pizza at the meetings, the sort of thing I had as an undergrad for my activist groups—and they wanted to take the time we spent in such pedagogy meetings out of our grading time (probably because if they didn’t, they’d either have to give us less grading, or some of us would be over our allotted hours and they’d owe us compensation).

And honestly, it is teaching that I consider the higher calling.

The difference between 0 people knowing something and 1 knowing it is called research; the difference between 1 person knowing it and 8 billion knowing it is called education.

Yes, of course, research is important. But if all the research suddenly stopped, our civilization would stagnate at its current level of technology, but otherwise continue unimpaired. (Frankly it might spare us the cyberpunk dystopia/AI apocalypse we seem to be hurtling rapidly toward.) Whereas if all education suddenly stopped, our civilization would slowly decline until it ultimately collapsed into the Stone Age. (Actually it might even be worse than that; even Stone Age cultures pass on knowledge to their children, just not through formal teaching. If you include all the ways parents teach their children, it may be literally true that humans cannot survive without education.)

Yet research universities seem to get all of their prestige from their research, not their teaching, and prestige is the thing they absolutely value above all else, so they devote the vast majority of their energy toward valuing and supporting research rather than teaching. In many ways, the administrators seem to see teaching as an obligation, as something they have to do in order to make money that they can spend on what they really care about, which is research.

As such, they are always making classes bigger and bigger, trying to squeeze out more tuition dollars (well, in this case, pounds) from the same number of faculty contact hours. It becomes impossible to get to know all of your students, much less give them all sufficient individual attention. At Edinburgh they even had the gall to refer to their seminars as “tutorials” when they typically had 20+ students. (That is not tutoring!) And then of course there were the lectures, which often had over 200 students.

I suppose it could be worse: It could be athletics they spend all their money on, like most Big Ten universities. (The University of Michigan actually seems to strike a pretty good balance: they are certainly not hurting for athletic funding, but they also devote sizeable chunks of their budget to research, medicine, and yes, even teaching. And unlike virtually all other varsity athletic programs, University of Michigan athletics turns a profit!)

If all the varsity athletics in the world suddenly disappeared… I’m not convinced we’d be any worse off, actually. We’d lose a source of entertainment, but it could probably be easily replaced by, say, Netflix. And universities could re-focus their efforts on academics, instead of acting like a free training and selection system for the pro leagues. The University of California, Irvine certainly seemed no worse off for its lack of varsity football. (Though I admit it felt a bit strange, even to a consummate nerd like me, to have a varsity League of Legends team.)

They keep making the experience of teaching worse and worse, even as they cut faculty salaries and make our jobs more and more precarious.

That might be what really made me most miserable, knowing how expendable I was to the university. If I hadn’t quit when I did, I would have been out after another semester anyway, and going through this same process a bit later. It wasn’t even that I was denied tenure; it was never on the table in the first place. And perhaps because they knew I wouldn’t stay anyway, they didn’t invest anything in mentoring or supporting me. Ostensibly I was supposed to be assigned a faculty mentor immediately; I know the first semester was crazy because of COVID, but after two and a half years I still didn’t have one. (I had a small research budget, which they reduced in the second year; that was about all the support I got. I used it—once.)

So if I do continue on that “academia” road, I’m going to need to do a lot of things differently. I’m not going to put up with a lot of things that I did. I’ll demand a long-term position—if not tenure-track, at least renewable indefinitely, like a lecturer position (as it is in the US, where the tenure-track position is called “assistant professor” and “lecturer” is permanent but not tenured; in the UK, “lecturers” are tenure-track—except at Oxford, and as of 2021, Cambridge—just to confuse you). Above all, I’ll only be applying to schools that actually have some track record for valuing teaching and supporting their faculty.

And if I can’t find any such positions? Then I just won’t apply at all. I’m not going in with the “I’ll take what I can get” mentality I had last time. Our household finances are stable enough that I can afford to wait awhile.

But maybe I won’t even do that. Maybe I’ll take a different path entirely.

For now, I just don’t know.