May 19 2458623

What do the following statements have in common?

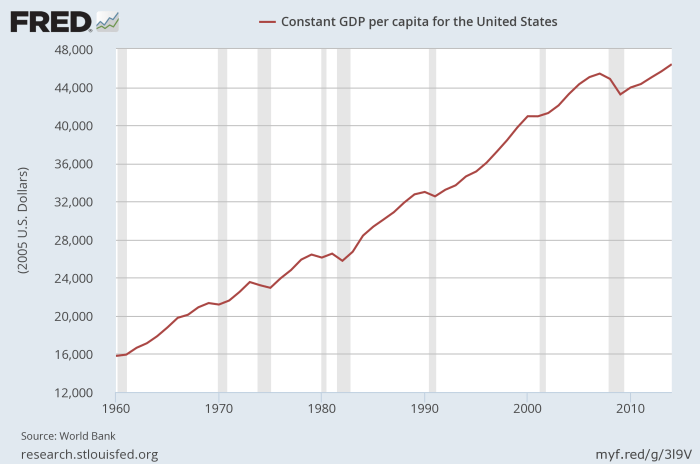

1. “Capitalist countries have less poverty than Communist countries.”

2. “Black men in the US commit homicide at a higher rate than White men.”

3. “In the United States, Black people on average score lower on IQ tests than White people.”

4. “Men on average perform better at visual tasks, and women on average perform better on verbal tasks.”

5. “In the United States, White men are no more likely to be mass shooters than other men.”

6. “The genetic heritability of intelligence is about 60%.”

7. “The plurality of recent terrorist attacks in the US have been committed by Muslims.”

8. “The period of US military hegemony since 1945 has been the most peaceful period in human history.”

These statements have two things in common:

1. All of these statements are objectively true facts that can be verified by rich and reliable empirical data which is publicly available and uncontroversially accepted by social scientists.

2. If spoken publicly among left-wing social justice activists, all of these statements will draw resistance, defensiveness, and often outright hostility. Anyone making these statements is likely to be accused of racism, sexism, imperialism, and so on.

I call such propositions Pinker Propositions, after an excellent talk by Steven Pinker illustrating several of the above statements (which was then taken wildly out of context by social justice activists on social media).

The usual reaction to these statements suggests that people think they imply harmful far-right policy conclusions. This inference is utterly wrong: A nuanced understanding of each of these propositions does not in any way lead to far-right policy conclusions—in fact, some rather strongly support left-wing policy conclusions.

1. Capitalist countries have less poverty than Communist countries, because Communist countries are nearly always corrupt and authoritarian. Social democratic countries have the lowest poverty and the highest overall happiness (#ScandinaviaIsBetter).

2. Black men commit more homicide than White men because of poverty, discrimination, mass incarceration, and gang violence. Black men are also greatly overrepresented among victims of homicide, as most homicide is intra-racial. Homicide rates often vary across ethnic and socioeconomic groups, and these rates vary over time as a result of cultural and political changes.

3. IQ tests are a highly imperfect measure of intelligence, and the genetics of intelligence cut across our socially-constructed concept of race. There is far more within-group variation in IQ than between-group variation. Intelligence is not fixed at birth but is affected by nutrition, upbringing, exposure to toxins, and education—all of which statistically put Black people at a disadvantage. Nor does intelligence remain constant within populations: The Flynn Effect is the well-documented increase in intelligence which has occurred in almost every country over the past century. Far from justifying discrimination, these provide very strong reasons to improve opportunities for Black children. The lead and mercury in Flint’s water suppressed the brain development of thousands of Black children—that’s going to lower average IQ scores. But that says nothing about supposed “inherent racial differences” and everything about the catastrophic damage of environmental racism.

4. To be quite honest, I never even understood why this one shocks—or even surprises—people. It’s not even saying that men are “smarter” than women—overall IQ is almost identical. It’s just saying that men are more visual and women are more verbal. And this, I think, is actually quite obvious. I think the clearest evidence of this—the “interocular trauma” that will convince you the effect is real and worth talking about—is pornography. Visual porn is overwhelmingly consumed by men, even when it was designed for women (e.g. Playgirl—a majority of its readers are gay men, even though there are ten times as many straight women in the world as there are gay men). Conversely, erotic novels are overwhelmingly consumed by women. I think a lot of anti-porn feminism can actually be explained by this effect: Feminists (who are usually women, for obvious reasons) can say they are against “porn” when what they are really against is visual porn, because visual porn is consumed by men; then the kind of porn that they like (erotic literature) doesn’t count as “real porn”. And honestly they’re mostly against the current structure of the live-action visual porn industry, which is totally reasonable—but it’s a far cry from being against porn in general. I have some serious issues with how our farming system is currently set up, but I’m not against farming.

5. This one is interesting, because it’s the absence of a racial difference, which is normally what the left wing wants to hear. The catch, of course, is that this alleged difference would make White men look bad, and that’s apparently seen as a desirable goal for social justice. But the data just doesn’t bear it out: While most mass shooters are indeed White men, that’s because most Americans are White, which is a totally uninteresting reason. There’s no clear evidence of any racial disparity in mass shootings, though the gender disparity is absolutely overwhelming: It’s almost always men.

6. Heritability is a subtle concept; it doesn’t mean what most people seem to think it means. It doesn’t mean that 60% of your intelligence is due to your genes. Indeed, I’m not even sure what that sentence would actually mean; it’s like saying that 60% of the flavor of a cake is due to the eggs. What this heritability figure actually means is that when you compare across individuals in a population, and carefully control for environmental influences, you find that about 60% of the variance in IQ scores is explained by genetic factors. But this holds only within a particular population (here, US adults) and is absolutely dependent on all sorts of other variables. The more flexible one’s environment becomes, the more people self-select into their preferred environment, and the more heritable traits become. As a result, IQ actually becomes more heritable as children grow into adults, a phenomenon called the Wilson Effect.

7. This one might actually conflict somewhat with left-wing policy. The disproportionate participation of Muslims in terrorism, which persists after controlling for just about anything you like (income, education, age, etc.), really does suggest that, at least at this point in history, there is some real ideological link between Islam and terrorism. But the fact remains that the vast majority of Muslims are not terrorists and do not support terrorism, and antagonizing all the people of an entire religion is fundamentally unjust as well as likely to backfire in various ways. We should instead be encouraging the spread of more tolerant forms of Islam, and maintaining the strict boundaries of secularism to prevent any religion from encroaching on our system of government.

8. The fact that US military hegemony does seem to be a cause of global peace doesn’t imply that every single military intervention by the US is justified. In fact, it doesn’t even necessarily imply that any such interventions are justified—though I think one would be hard-pressed to say that the NATO intervention in the Kosovo War or the defense of Kuwait in the Gulf War was unjustified. It merely points out that having a hegemon is clearly preferable to having a multipolar world where many countries jockey for military supremacy. The Pax Romana was a time of peace but also authoritarianism; the Pax Americana is better, but that doesn’t prevent us from criticizing the real harms—including major war crimes—committed by the United States.
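The heritability figure in point 6 is a ratio of variances across a population, not a share of any one person’s intelligence. As a rough sketch, using the standard broad-sense definition and assuming the simplest additive model (no gene-environment correlation or interaction, which the self-selection effect described above in fact violates), it can be written as:

$$
H^2 = \frac{\sigma^2_G}{\sigma^2_P}, \qquad \sigma^2_P = \sigma^2_G + \sigma^2_E
$$

Here $\sigma^2_P$ is the variance of IQ scores across individuals in the population, $\sigma^2_G$ is the part attributable to genetic differences, and $\sigma^2_E$ is the part attributable to environmental differences. A figure of $H^2 \approx 0.6$ says only that $\sigma^2_G \approx 0.6\,\sigma^2_P$ in that particular population. Because $\sigma^2_E$ sits in the denominator, the same genetic variation yields a higher heritability in a population whose environments vary less, which is one reason the number cannot be carried over from one population to another.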

So it is entirely possible to know and understand these facts without adopting far-right political views.

Yet Pinker’s point—and mine—is that by suppressing these true facts, by responding with hostility or even ostracism to anyone who states them, we are actually adding fuel to the far-right fire. Instead of presenting the nuanced truth and explaining why it doesn’t imply such radical policies, we attack the messenger; and this leads people to conclude three things:

1. The left wing is willing to lie and suppress the truth in order to achieve political goals (they’re doing it right now).

2. These statements actually do imply right-wing conclusions (else why suppress them?).

3. Since these statements are true, that must mean the right-wing conclusions are actually correct.

Now (especially if you are someone who identifies unironically as “woke”), you might be thinking something like this: “Anyone who can be turned away from social justice so easily was never a real ally in the first place!”

This is a fundamentally and dangerously wrongheaded view. No one, not me, not you, not anyone, was born believing in social justice. You did not emerge from your mother’s womb ranting against colonialist imperialism. You had to learn what you now know. You came to believe what you now believe, after once believing something else that you now think is wrong. This is true of absolutely everyone everywhere. Indeed, the better you are, the more true it is; good people learn from their mistakes and grow in their knowledge.

This means that anyone who is now an ally of social justice once was not. And that, in turn, suggests that many people who are currently not allies could become so, under the right circumstances. They would probably not shift all at once—as I didn’t, and I doubt you did either—but if we are welcoming and open and honest with them, we can gradually tilt them toward greater and greater levels of support.

But if we reject them immediately for being impure, they never get the chance to learn, and we never get the chance to sway them. People who are currently uncertain of their political beliefs will become our enemies because we made them our enemies. We declared that if they would not immediately commit to everything we believe, then they may as well oppose us. They, quite reasonably unwilling to commit to a detailed political agenda they didn’t understand, decided that it would be easiest to simply oppose us.

And we don’t have to win over every person on every single issue. We merely need to win over a large enough critical mass on each issue to shift policies and cultural norms. Building a wider tent is not compromising on your principles; on the contrary, it’s how you actually win and make those principles a reality.

There will always be those we cannot convince, of course. And I admit, there is something deeply irrational about going from “those leftists attacked Charles Murray” to “I think I’ll start waving a swastika”. But humans aren’t always rational; we know this. You can lament this, complain about it, yell at people for being so irrational all you like—it won’t actually make people any more rational. Humans are tribal; we think in terms of teams. We need to make our team as large and welcoming as possible, and suppressing Pinker Propositions is not the way to do that.