Apr 2 JDN 2460037

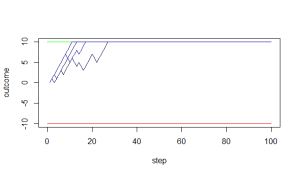

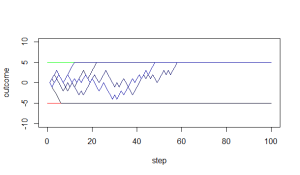

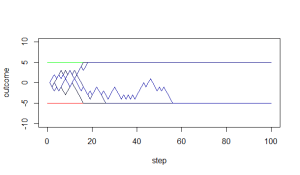

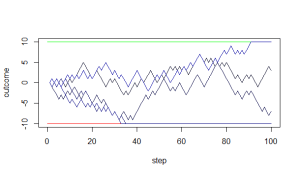

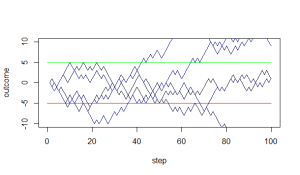

A couple weeks ago I presented my stochastic overload model, which posits a neurological mechanism for the Yerkes-Dodson effect: Stress increases sympathetic activation, and this increases performance, up to the point where it starts to risk causing neural pathways to overload and shut down.

This week I thought I’d try to get into some of the implications of this model, how it might be applied to make predictions or guide policy.

One thing I often struggle with when it comes to applying theory is what actual benefits we get from a quantitative mathematical model as opposed to simply a basic qualitative idea. In many ways I think these benefits are overrated; people seem to think that putting something into an equation automatically makes it true and useful. I am sometimes tempted to try to take advantage of this, to put things into equations even though I know there is no good reason to put them into equations, simply because so many people seem to find equations so persuasive for some reason. (Studies have even shown that, particularly in disciplines that don’t use a lot of math, inserting a totally irrelevant equation into a paper makes it more likely to be accepted.)

The basic implications of the Yerkes-Dodson effect are already widely known, and utterly ignored in our society. We know that excessive stress is harmful to health and performance, and yet our entire economy seems to be based around maximizing the amount of stress that workers experience. I actually think neoclassical economics bears a lot of the blame for this, as neoclassical economists are constantly talking about “increasing work incentives”—which is to say, making work life more and more stressful. (And let me remind you that there has never been any shortage of people willing to work in my lifetime, except possibly briefly during the COVID pandemic. The shortage has always been employers willing to hire them.)

I don’t know if my model can do anything to change that. Maybe by putting it into an equation I can make people pay more attention to it, precisely because equations have this weird persuasive power over most people.

As far as scientific benefits go, I think that the chief advantage of a mathematical model lies in its ability to make quantitative predictions. It’s one thing to say that performance increases with low levels of stress and then decreases with high levels; but it would be a lot more useful if we could actually precisely quantify how much stress is optimal for a given person and how they are likely to perform at different levels of stress.

Unfortunately, the stochastic overload model can only make detailed predictions if you have fully specified the probability distribution of innate activation, which requires a lot of free parameters. This is especially problematic if you don’t even know what type of distribution to use, which we really don’t; I picked three classes of distribution because they were plausible and tractable, not because I had any particular evidence for them.

Also, we don’t even have standard units of measurement for stress; we have a vague notion of what more or less stressed looks like, but we don’t have the sort of quantitative measure that could be plugged into a mathematical model. Probably the best units to use would be something like blood cortisol levels, but then we’d need to go measure those all the time, which raises its own issues. And maybe people don’t even respond to cortisol in the same ways? But at least we could measure your baseline cortisol for a while to get a prior distribution, and then see how different incentives increase your cortisol levels; and then the model should give relatively precise predictions about how this will affect your overall performance. (This is a very neuroeconomic approach.)
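To make that procedure a bit more concrete, here is a rough sketch of what such a prediction could look like. Nearly everything in it is an assumption made for illustration, not something established above: innate activation is taken to be normally distributed, stress simply adds to it, performance equals total activation as long as it stays at or below an overload threshold of 1 and drops to zero above it, and the baseline readings are made-up numbers in arbitrary normalized units rather than real cortisol data. The function names are hypothetical as well.

```python
import math
import statistics


def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def normal_pdf(z):
    """Standard normal probability density function."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def expected_performance(stress, mu, sigma, threshold=1.0):
    """Expected performance under the assumed overload rule.

    Assumption: activation = innate + stress, with innate ~ Normal(mu, sigma).
    Performance equals activation when activation <= threshold, else 0.
    For Y ~ Normal(m, sigma): E[Y * 1{Y <= T}] = m * Phi(z) - sigma * phi(z),
    where z = (T - m) / sigma.
    """
    m = mu + stress
    z = (threshold - m) / sigma
    return m * normal_cdf(z) - sigma * normal_pdf(z)


def optimal_stress(mu, sigma, threshold=1.0, step=0.001, max_stress=1.5):
    """Grid search for the stress level with the highest expected performance."""
    grid = [i * step for i in range(int(max_stress / step) + 1)]
    return max(grid, key=lambda s: expected_performance(s, mu, sigma, threshold))


# Hypothetical baseline readings (normalized units, invented for illustration).
baseline = [0.28, 0.35, 0.22, 0.31, 0.40, 0.26, 0.33, 0.25]
mu_hat = statistics.mean(baseline)      # estimated mean innate activation
sigma_hat = statistics.stdev(baseline)  # estimated spread of innate activation

best = optimal_stress(mu_hat, sigma_hat)
print(f"estimated mu={mu_hat:.3f}, sigma={sigma_hat:.3f}")
print(f"stress level maximizing expected performance: {best:.3f}")
```

Under these assumptions the optimal stress level always sits somewhat below the gap between the threshold and the mean baseline, and the more variable someone's innate activation is, the larger that safety margin needs to be. Whether anything like this holds for real people depends entirely on the choice of distribution and units, which is exactly the problem described above.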

So, for now, I’m not really sure how useful the stochastic overload model is. This is honestly something I feel about a lot of the theoretical ideas I have come up with; they often seem too abstract to be usefully applicable to anything.

Maybe that’s how all theory begins, and applications only appear later? But that doesn’t seem to be how people expect me to talk about it whenever I have to present my work or submit it for publication. They seem to want to know what it’s good for, right now, and I never have a good answer to give them. Do other researchers have such answers? Do they simply pretend to?

Along similar lines, I recently had one of my students ask about a theory paper I wrote on international conflict for my dissertation, and after sending him a copy, I re-read the paper. There are so many pages of equations, and while I am confident that the mathematical logic is valid, I honestly don’t know if most of them are really useful for anything. (I don’t think I really believe that GDP is produced by a Cobb-Douglas production function, and we don’t even really know how to measure capital precisely enough to say.) The central insight of the paper, which I think is really important but other people don’t seem to care about, is a qualitative one: International treaties and norms provide an equilibrium selection mechanism in iterated games. The realists are right that this is cheap talk. The liberals are right that it works. Because when there are many equilibria, cheap talk works.

I know that in truth, science proceeds in tiny steps, building a wall brick by brick, never sure exactly how many bricks it will take to finish the edifice. It’s impossible to see whether your work will be an irrelevant footnote or the linchpin for a major discovery. But that isn’t how the institutions of science are set up. That isn’t how the incentives of academia work. You’re not supposed to say that this may or may not be correct and is probably some small incremental progress the ultimate impact of which no one can possibly foresee. You’re supposed to sell your work—justify how it’s definitely true and why it’s important and how it has impact. You’re supposed to convince other people why they should care about it and not all the dozens of other probably equally-valid projects being done by other researchers.

I don’t know how to do that, and it is agonizing to even try. It feels like lying. It feels like betraying my identity. Being good at selling isn’t just orthogonal to doing good science—I think it’s opposite. I think the better you are at selling your work, the worse you are at cultivating the intellectual humility necessary to do good science. If you think you know all the answers, you’re just bad at admitting when you don’t know things. It feels like in order to succeed in academia, I have to act like an unscientific charlatan.

Honestly, why do we even need to convince you that our work is more important than someone else’s? Are there only so many science points to go around? Maybe the whole problem is this scarcity mindset. Yes, grant funding is limited; but why does publishing my work prevent you from publishing someone else’s? Why do you have to reject 95% of the papers that get sent to you? Don’t tell me you’re limited by space; the journals are digital and searchable and nobody reads the whole thing anyway. Editorial time isn’t infinite, but most of the work has already been done by the time you get a paper back from peer review. Of course, I know the real reason: Excluding people is the main source of prestige.