Nov 17 JDN 2458805

I said in last week’s post that Pascal’s Mugging provides some deep insights into both Singularitarianism and religion. In particular, it explains why Singularitarianism seems so much like a religion.

Others have remarked on this before, of course. I think Eric Steinhart makes the best case for Singularitarianism as a religion:

> I think singularitarianism is a new religious movement. I might add that I think Clifford Geertz had a pretty nice (though very abstract) definition of religion. And I think singularitarianism fits Geertz’s definition (but that’s for another time).
>
> My main interest is this: if singularitarianism is a new religious movement, then what should we make of it? Will it mainly be a good thing? A kind of enlightenment religion? It might be an excellent alternative to old-fashioned Abrahamic religion. Or would it degenerate into the well-known tragic pattern of coercive authority? Time will tell; but I think it’s worth thinking about this in much more detail.

To be clear: Singularitarianism is probably not a religion. It is certainly not a cult, as some have even more harshly accused it of being; the behaviors it prescribes are largely normative, pro-social behaviors, so at worst it would be a mainstream religion. Really, if every religion only inspired people to do things like donate to famine relief and work on AI research (as opposed to, say, beheading gay people), I wouldn’t have much of a problem with religion.

In fact, Singularitarianism has one vital advantage over religion: Evidence. While the evidence in favor of it is not overwhelming, there is enough evidential support to lend plausibility to at least a broad concept of Singularitarianism: Technology will continue rapidly advancing, achieving feats we can currently only imagine; artificial intelligence surpassing human intelligence will arise, sooner than many people think; human beings will change ourselves into something new and broadly superior; these posthumans will go on to colonize the galaxy and build a grander civilization than we can imagine. I don’t know that these things are true, but I hope they are, and I think it’s at least reasonably likely. All I’m really doing is extrapolating based on what human civilization has done so far and what we are currently trying to do now. Of course, we could well blow ourselves up before then, or regress to a lower level of technology, or be wiped out by some external force. But there’s at least a decent chance that we will continue to thrive for another million years to come.

But yes, Singularitarianism does in many ways resemble a religion: It offers a rich, emotionally fulfilling ontology combined with ethical prescriptions that require particular behaviors. It promises us a chance at immortality. It inspires us to work toward something much larger than ourselves. More importantly, it makes us special—we are among the unique few (millions?) who have the power to influence the direction of human and posthuman civilization for a million years. The stronger forms of Singularitarianism even have a flavor of apocalypse: When the AI comes, sooner than you think, it will immediately reshape everything at effectively infinite speed, so that from one year—or even one moment—to the next, our whole civilization will be changed. (These forms of Singularitarianism are substantially less plausible than the broader concept I outlined above.)

It’s this sense of specialness that Pascal’s Mugging provides some insight into. When it is suggested that we are so special, we should be inherently skeptical, not least because it feels good to hear that. (As Less Wrong would put it, we need to avoid a Happy Death Spiral.) Human beings like to feel special; we want to feel special. Our brains are configured to seek out evidence that we are special and reject evidence that we are not. This is true even to the point of absurdity: In a world of over seven billion people, one cannot be mathematically coherent without admitting that the compliment “You’re one in a million” is equivalent to the statement “There are seven thousand people as good as or better than you”; and yet the latter seems much worse, because it does not make us sound special.
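The arithmetic behind that equivalence is a single division: a one-in-a-million trait, spread across the whole human population, is shared by thousands of people. Here is a minimal sketch, assuming a world population of about 7.7 billion (roughly the 2019 figure, supplied here rather than taken from the post); `people_at_least_as_good` is a hypothetical helper named just for this example.

```python
# ASSUMPTION: world population of about 7.7 billion (approximate 2019 figure).
WORLD_POPULATION = 7_700_000_000


def people_at_least_as_good(rarity: int, population: int = WORLD_POPULATION) -> int:
    """Expected number of people at or above a '1 in `rarity`' level.

    Hypothetical helper: it simply divides the population by the rarity.
    """
    return population // rarity


# How many people share a "one in a million" distinction?
print(people_at_least_as_good(1_000_000))
```

With that population the result is 7,700, the same order of magnitude as the "seven thousand" in the quip; using a round seven billion gives exactly 7,000.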

Indeed, the connection between Pascal’s Mugging and Pascal’s Wager is quite deep: Each argument takes a tiny probability and multiplies it by a huge impact in order to get a large expected utility. This often seems to be the way that religions defend themselves: Well, yes, the probability is small; but can you take the chance? Can you afford to take that bet if it’s really your immortal soul on the line?
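The shared structure of the Wager and the Mugging fits in a few lines: expected utility is probability times payoff, so a vanishingly small probability can be swamped by a large enough claimed payoff. The sketch below uses made-up numbers chosen only for illustration, and exact `Fraction` arithmetic so rounding does not muddy the comparison.

```python
from fractions import Fraction


def expected_utility(probability: Fraction, payoff: Fraction) -> Fraction:
    """Expected utility of a single all-or-nothing outcome: probability times payoff."""
    return probability * payoff


# HYPOTHETICAL numbers, for illustration only.
# An ordinary, well-evidenced option: likely, with a modest payoff.
ordinary = expected_utility(Fraction(9, 10), Fraction(100))

# A "mugging": absurdly unlikely, but with an astronomically large claimed payoff.
mugging = expected_utility(Fraction(1, 10**12), Fraction(10**20))

print(ordinary, mugging, mugging > ordinary)
```

The specific numbers do not matter. Whoever names the payoff can always name a bigger one, so a one-in-a-trillion chance still "wins" against a 90% chance of something ordinary, which is exactly why arguments of this shape deserve extra suspicion.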

And Singularitarianism has a similar case to make, even aside from the paradox of Pascal’s Mugging itself. The chief argument for why we should be focusing all of our time and energy on existential risk is that the potential payoff is just so huge that even a tiny probability of making a difference is enough to make it the only thing that matters. We should be especially suspicious of that; anything that says it is the only thing that matters is to be doubted with utmost care. The really dangerous religion has always been the fanatical kind that says it is the only thing that matters. That’s the kind of religion that makes you crash airliners into buildings.

I think some people may well have become Singularitarians because it made them feel special. It is exhilarating to be one of these lone few—and in the scheme of things, even a few million is a small fraction of all past and future humanity—with the power to effect some shift, however small, in the probability of a far grander, far brighter future.

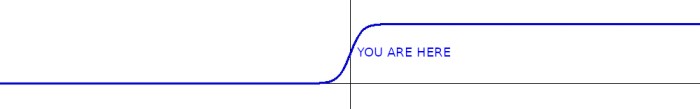

Yet this is, in fact, very likely our circumstance. We could have been born in the Neolithic, struggling to survive, utterly unaware of what would come a few millennia hence; we could have been born in the posthuman era, one of a trillion other artist/gamer/philosophers living in a world where all the hard work that needed to be done is already done. In the long S-curve of human development, we could have been born in the flat part on the left or the flat part on the right, and in all probability we should have been; most people were. But instead we happened to be born in that tiny middle slice, where the curve slopes upward at its fastest. I suppose somebody had to be, and it might as well be us.
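To see how thin that middle slice is, one can model the S-curve as a standard logistic function. That choice, and the definition of "steep" as rising at least half as fast as the peak rate, are assumptions made here for illustration; the post does not specify a curve. The sketch measures what fraction of a long time window lies on the steep part.

```python
import math


def logistic(t: float) -> float:
    """Standard logistic S-curve: flat near 0 on the left, flat near 1 on the right."""
    return 1.0 / (1.0 + math.exp(-t))


def growth_rate(t: float) -> float:
    """Slope of the logistic curve, s * (1 - s); it peaks at 0.25 when t == 0."""
    s = logistic(t)
    return s * (1.0 - s)


def steep_fraction(window: float = 100.0, steps: int = 100_000) -> float:
    """Fraction of evenly spaced times in [-window/2, window/2] at which the
    curve rises at least half as fast as its peak rate (ASSUMED threshold)."""
    threshold = 0.5 * growth_rate(0.0)
    times = (-window / 2 + window * i / (steps - 1) for i in range(steps))
    steep = sum(1 for t in times if growth_rate(t) >= threshold)
    return steep / steps


print(f"{steep_fraction():.3f}")
```

Over a window of 100 time units, only about 3.5% of the time falls in the steep slice (analytically, the steep interval has width 4 ln(1 + √2), about 3.53), and widening the window shrinks the fraction further. Note that this counts time, not people: it ignores how many people are alive at each point on the curve.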

A priori, we should doubt that we were born so special. And when forming our beliefs, we should compensate for the fact that we want to believe we are special. But we do in fact have evidence, lots of evidence. We live in a time of astonishing scientific and technological progress.

My lifetime has included the progression from Deep Thought first beating David Levy to the creation of the M3, a computer one millimeter across that runs on a few nanowatts and nevertheless has ten times as much computing power as the 80-pound computer that ran the Saturn V. (The human brain runs on about 100 watts, and has a processing power of about 1 petaflop, so we can say that our energy efficiency is about 10 TFLOPS/W. The M3 runs on about 10 nanowatts and has a processing power of about 0.1 megaflops, so its energy efficiency is also about 10 TFLOPS/W. We did it! We finally made a computer as energy-efficient as the human brain! But we have still not matched the brain in terms of space efficiency: The volume of the human brain is about 1000 cm³, so our space efficiency is about 1 TFLOPS/cm³. The volume of the M3 is about 1 mm³, so its space efficiency is only about 100 MFLOPS/cm³. The brain still wins by a factor of 10,000.)
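The arithmetic in that parenthetical can be checked by dividing processing power by power draw and by volume. This is a minimal sketch plugging in the paragraph's own rough figures, which are order-of-magnitude estimates rather than measurements; the constant names are made up for this example.

```python
# Back-of-envelope efficiency comparison, using the paragraph's rough figures.
BRAIN_WATTS = 100.0      # power draw, as stated in the text
BRAIN_FLOPS = 1e15       # about 1 petaflop
BRAIN_CM3 = 1000.0       # volume, about 1000 cm^3

M3_WATTS = 10e-9         # about 10 nanowatts
M3_FLOPS = 1e5           # about 0.1 megaflops
M3_CM3 = 1e-3            # about 1 mm^3, which is 0.001 cm^3

# Energy efficiency: floating-point operations per second, per watt.
brain_energy = BRAIN_FLOPS / BRAIN_WATTS
m3_energy = M3_FLOPS / M3_WATTS

# Space efficiency: floating-point operations per second, per cubic centimeter.
brain_space = BRAIN_FLOPS / BRAIN_CM3
m3_space = M3_FLOPS / M3_CM3

print(f"energy (TFLOPS/W): brain {brain_energy / 1e12:.1f}, M3 {m3_energy / 1e12:.1f}")
print(f"space: brain {brain_space / 1e12:.1f} TFLOPS/cm^3, M3 {m3_space / 1e6:.1f} MFLOPS/cm^3")
print(f"brain's space advantage: about {brain_space / m3_space:,.0f}x")
```

Both energy efficiencies come out at about 10 TFLOPS/W, and the brain's space efficiency beats the M3's by a factor of about 10,000, matching the figures in the text.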

My mother saw us go from the first jet airliners to landing on the Moon to the International Space Station and robots on Mars. She grew up before the polio vaccine and is still alive to see the first 3D-printed human heart. When I was a child, smartphones didn’t even exist; now more people have smartphones than have toilets. I may yet live to see the first human beings set foot on Mars. The pace of change is utterly staggering.

Without a doubt, this is sufficient evidence to believe that we, as a civilization, are living in a very special time. The real question is: Are we, as individuals, special enough to make a difference? And if we are, what weight of responsibility does this put upon us?

If you are reading this, odds are the answer to the first question is yes: You are definitely literate, and most likely educated, probably middle- or upper-middle-class in a First World country. I can see which countries my readers come from, and I do get some from outside the First World; and of course I can’t observe your education or socioeconomic status. But at an educated guess, this is surely my primary reading demographic. Even if you don’t have the faintest idea what I’m talking about when I use Bayesian logic or calculus, you’re already quite exceptional. (And if you do? All the more so.)

So the second question applies: What do we owe the future generations who may come to exist if we play our cards right? What can we, as individuals, hope to do to bring about this brighter future?

The Singularitarian community will generally tell you that the best thing to do with your time is to work on AI research, or, failing that, the best thing to do with your money is to give it to people working on artificial intelligence research. I’m not going to tell you not to work on AI research or donate to AI research, as I do think it is among the most important things humanity needs to be doing right now, but I’m also not going to tell you that it is the one single thing you must be doing.

You should almost certainly be donating somewhere, but I’m not so sure it should be to AI research. Maybe it should be famine relief, or malaria prevention, or medical research, or human rights, or environmental sustainability. If you’re in the United States (as I know most of you are), the best thing to do with your money may well be to support political campaigns, because US political, economic, and military hegemony means that as goes America, so goes the world. Stop and think for a moment how different the prospects of global warming might have been—how many millions of lives might have been saved!—if Al Gore had become President in 2001. For lack of a few million dollars in Tampa twenty years ago, Miami may be gone in fifty. If you’re not sure which cause is most important, just pick one; or better yet, donate to a diversified portfolio of charities and political campaigns. Diversified investment isn’t just about monetary return.

And you should think carefully about what you’re doing with the rest of your life. This can be hard to do; we can easily get so caught up in just getting through the day, getting through the week, just getting by, that we lose sight of having a broader mission in life. Of course, I don’t know what your situation is; it’s possible things really are so desperate for you that you have no choice but to keep your head down and muddle through. But you should also consider the possibility that this is not the case: You may not be as desperate as you feel. You may have more options than you know. Most “starving artists” don’t actually starve. More people regret staying in their dead-end jobs than regret quitting to follow their dreams. I guess if you stay in a high-paying job in order to earn to give, that might really be ethically optimal; but I doubt it will make you happy. And in fact some of the most important fields are constrained by a lack of good people doing good work, and not by a simple lack of funding.

I see this especially in economics: As a field, economics is really not focused on the right kind of questions. There’s far too much prestige for incrementally adjusting some overcomplicated unfalsifiable mess of macroeconomic algebra, and not nearly enough for trying to figure out how to mitigate global warming, how to turn back the tide of rising wealth inequality, or what happens to human society once robots take all the middle-class jobs. Good work is being done in devising measures to fight poverty directly, but not in devising means to undermine the authoritarian regimes that are responsible for maintaining poverty. Formal mathematical sophistication is prized, and deep thought about hard questions is eschewed. We are carefully arranging the pebbles on our sandcastle in front of the oncoming tidal wave. I won’t tell you that it’s easy to change this—it certainly hasn’t been easy for me—but I have to imagine it’d be easier with more of us trying rather than with fewer. Nobody needs to donate money to economics departments, but we definitely do need better economists running those departments.

You should ask yourself what it is that you are really good at, what you (you yourself, not anyone else) might do to make a mark on the world. This is not an easy question: I have not quite answered for myself whether I would make more difference as an academic researcher, a policy analyst, a nonfiction author, or even a science fiction author. (If you scoff at the latter: Who would have any concept of AI, space colonization, or transhumanism, if not for science fiction authors? The people who most tilted the dial of human civilization toward this brighter future may well be Clarke, Roddenberry, and Asimov.) It is not impossible to be some combination, or even all, of these, but the more I try to take on, the more difficult my life becomes.

Your own path will look different from mine; different, indeed, from anyone else’s. But you must choose it wisely. For we are very special individuals, living in a very special time.