JDN 2457096 EDT 16:08

Despite my preference for the Julian Day Number system, it has not escaped my attention that this weekend was Pi Day of the Century, 3/14/15. Yesterday morning we had the Moment of Pi: 3/14/15 9:26:53.58979… We arguably got an encore that evening, if we read the clock as 9:26 PM rather than 21:26.

Perhaps it is a stereotype, and perhaps it is a cheesy segue, but pi and related mathematical concepts are often associated with computers and robots. Robots are an increasing part of our lives, from the industrial robots that manufacture our cars to the precision-timed satellites that provide our GPS navigation. When you want to know how to get somewhere, you pull out your pocket thinking machine and ask it to commune with the space robots who will guide you to your destination.

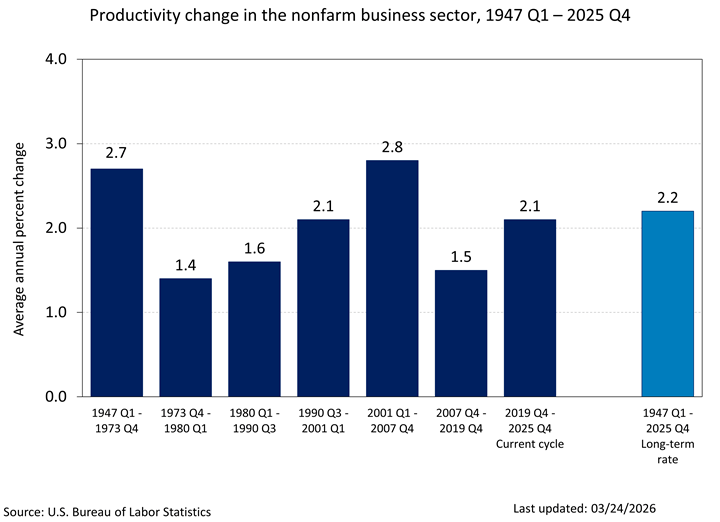

There are obvious upsides to these robots. They are enormously productive, and they allow us to produce great quantities of useful goods at astonishingly low prices, including computers themselves. That creates a positive feedback loop, one that literally lowered the price of a given amount of computing power by a factor of one trillion in the latter half of the 20th century. We now very much live in the early stages of a cyberpunk future, and it is due almost entirely to the power of computer automation.

But if you know your SF, you may also remember another major feature of cyberpunk futures besides their amazing technology: they tend to be dystopias, largely because of their enormous inequality. In the cyberpunk future, corporations own everything, governments are virtually irrelevant, and most individuals can barely scrape by. That sounds all too familiar, doesn’t it? This isn’t just something SF authors made up; there really are a number of ways that computer technology can exacerbate inequality and give more power to corporations.

Why? The reason that gets the most attention among economists is skill-biased technological change, which is odd, because it is almost certainly the least important. The idea is that computers can automate many routine tasks (no one disputes that part), and that routine tasks tend to be the sort of thing uneducated workers do more often than educated ones (already this looks fishy; think about accountants versus artists). Educated workers, meanwhile, are better at using computers, and computers need people to operate them (clearly true). Hence uneducated workers are substitutes for computers, since you can use the computers instead, while educated workers are complements to computers, since you need programmers and engineers to make the computers work. As computers get cheaper, their substitutes also get cheaper, and so wages for uneducated workers go down. But their complements become more valuable, and so wages for educated workers go up. The result is more inequality: high wages get higher and low wages get lower.

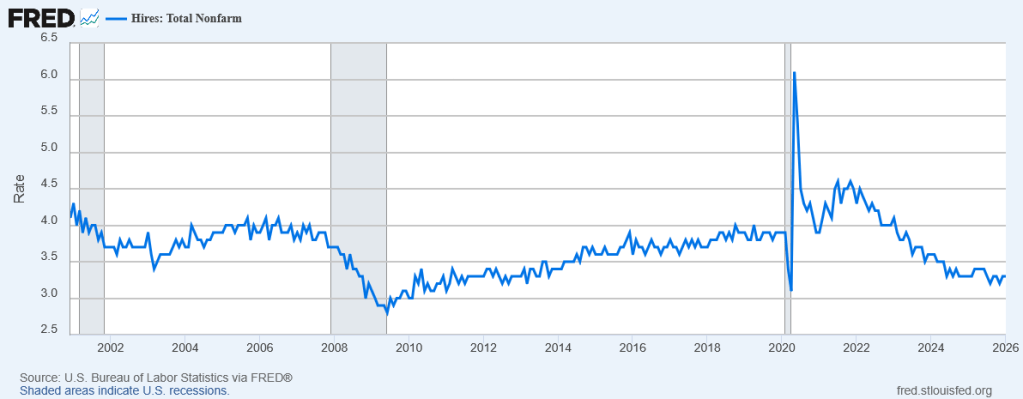

Or, to put it more succinctly, robots are taking our jobs. Not all our jobs (they are actually creating jobs at the top for software developers and electrical engineers), but a lot of them, such as welding, metallurgy, and even nursing. As the technology improves, more and more jobs will be replaced by automation.

The theory seems plausible enough, and in some form it is almost certainly true, but as David Card has pointed out, it fails to explain most of the actual variation in inequality in the US and other countries. Card is one of my favorite economists; he is also famous for completely revolutionizing the economics of the minimum wage, showing that the prevailing theory, which held that minimum wages must hurt employment, simply doesn’t match the empirical data.

If it were just that college education is getting more valuable, we’d see a rise in income for roughly the top 40%, since over 40% of American adults have at least an associate’s degree. But we don’t actually see that; in fact, contrary to popular belief, we don’t even really see it in the top 1%. The really huge increases in income over the last 40 years have been at the top 0.01%, the top 1% of the top 1%.

Many of the jobs that are now automated also haven’t seen a fall in income. High-frequency trading algorithms do what stockbrokers do a thousand times better (“better,” that is, at making markets more unstable and siphoning wealth from the rest of the economy), yet stockbrokers have seen no such loss in income. Indeed, they simply appropriate the additional income from those computer algorithms, which raises the question of why welders couldn’t do the same thing. As I’ll explain shortly, that is exactly what we must do: the robot revolution must also come with a revolution in property rights and income distribution.

No, the real reasons why technology exacerbates inequality are twofold: patent rents and the winner-takes-all effect.

In an earlier post I already talked about the winner-takes-all effect, so I’ll just briefly summarize it here. Under certain competitive conditions, a small fraction of individuals can reap a disproportionate share of the rewards despite being only slightly more productive than those beneath them. This often happens when there are network externalities, in which a product becomes more valuable as more people use it. That creates a positive feedback loop which makes already successful products wildly successful and consigns unsuccessful ones to obscurity.
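To make that feedback loop concrete, here is a toy model in Python. It is a sketch under assumptions of my own, not a standard model from the literature: two products differ only slightly in quality (a hypothetical 5% edge), and each period a product's appeal is its quality multiplied by its current market share raised to a `network_strength` exponent. Shares in the next period are proportional to appeal. At `network_strength = 0` there is no network effect at all; at 1, a product's value scales directly with its user base.

```python
def simulate_shares(quality_a, quality_b, network_strength, periods, share_a=0.5):
    """Return product A's market share after repeated rounds of adoption.

    Toy model (an assumption, not a standard result): each period a product's
    appeal is its quality times its current share ** network_strength, and
    next period's shares are proportional to appeal.
    """
    for _ in range(periods):
        share_b = 1.0 - share_a
        appeal_a = quality_a * share_a ** network_strength
        appeal_b = quality_b * share_b ** network_strength
        share_a = appeal_a / (appeal_a + appeal_b)
    return share_a


# A hypothetical 5% quality edge for product A, starting from an even split.
for strength in (0.0, 0.5, 1.0):
    print(strength, round(simulate_shares(1.05, 1.00, strength, periods=200), 3))
```

In this toy setup, with no network effect the better product settles at about 51% of the market, and with a moderate effect (0.5) at about 52%. When value is proportional to the user base, the slight quality edge compounds every period until the better product holds essentially the entire market. The functional form and all the numbers are illustrative choices.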

Computer technology—more specifically, the Internet—is particularly good at creating such situations. Facebook, Google, and Amazon are all examples of companies that (1) could not exist without Internet technology and (2) depend almost entirely upon network externalities for their business model. They are the winners who take all; thousands of other software companies that were just as good or nearly so are now long forgotten. The winners are not always the same, because the system is unstable; for instance MySpace used to be much more important—and much more profitable—until Facebook came along.

But the fact that a different handful of upper-middle-class individuals can find themselves suddenly and inexplicably thrust into fame and fortune while the rest of us toil in obscurity really isn’t much comfort, now is it? While technically the rise and fall of MySpace can be called “income mobility”, it’s clearly not what we actually mean when we say we want a society with a high level of income mobility. We don’t want a society where people in the top 10% can, by little more than chance, end up in the top 0.01%; we want a society where you don’t have to be in the top 10% to live well in the first place.

Even without network externalities the Internet still nurtures winner-takes-all markets, because digital information can be copied infinitely. When it comes to sandwiches or even cars, each new one is costly to make and costly to transport; it can be more cost-effective to choose the ones that are made near you even if they are of slightly lower quality. But with books (especially e-books), video games, songs, or movies, each individual copy costs essentially nothing to create, so why would you settle for anything but the best? This may well increase the overall quality of the content consumers get, but it also ensures that the creators of that content are in fierce winner-takes-all competition. Hence J.K. Rowling and James Cameron on the one hand, and millions of authors and independent filmmakers barely scraping by on the other. Compare a field like engineering; you probably don’t know a lot of rich and famous engineers (unless you count engineers who became CEOs like Bill Gates and Thomas Edison), but nor is there a large segment of “starving engineers” barely getting by. Though the richest engineers (CEOs excepted) are not nearly as rich as the richest authors, the typical engineer is much better off than the typical author, because engineering is not nearly as winner-takes-all.

But the main topic for today is actually patent rents. These are a greatly underappreciated segment of our economy, and they grow more important all the time. A patent rent is more or less what it sounds like; it’s the extra money you get from owning a patent on something. You can get that money either by literally renting it—charging license fees for other companies to use it—or simply by being the only company who is allowed to manufacture something, letting you sell it at monopoly prices. It’s surprisingly difficult to assess the real value of patent rents—there’s a whole literature on different econometric methods of trying to tackle this—but one thing is clear: Some of the largest, wealthiest corporations in the world are built almost entirely upon patent rents. Drug companies, R&D companies, software companies—even many manufacturing companies like Boeing and GM obtain a substantial portion of their income from patents.

What is a patent? It’s a rule that says you “own” an idea, and anyone else who wants to use it has to pay you for the privilege. The very concept of owning an idea should trouble you: ideas aren’t limited in number, and you can easily share them with others. But now consider that most of these patents are owned by corporations, not by the inventors themselves, and you’ll realize that our system of property rights is built around the notion that an abstract entity can own an idea, that one idea can own another.

The rationale behind patents is that they are supposed to provide incentives for innovation—in exchange for investing the time and effort to invent something, you receive a certain amount of time where you get to monopolize that product so you can profit from it. But how long should we give you? And is this really the best way to incentivize innovation?

I contend it is not. When you look at the really important world-changing innovations, very few of them were done for patent rents, and virtually none of them were done by corporations. Jonas Salk was indignant at the suggestion that he should patent the polio vaccine; it might have made him a billionaire, but only by letting thousands of children die. (To be fair, at least one scholar has argued that he probably couldn’t have gotten the patent even if he had wanted to, while going on to admit that even then the patent incentive had basically nothing to do with why penicillin and the polio vaccine were invented.)

Who landed on the moon? Hint: It wasn’t Microsoft. Who built the Hubble Space Telescope? Not Sony. The Internet that made Google and Facebook possible was originally invented by DARPA. Even when corporations seem to do useful innovation, it’s usually by profiting from the work of individuals: Edison’s corporation stole most of its good ideas from Nikola Tesla, and by the time the Wright Brothers founded a company their most important work was already done (though at least then you could argue that they did it in order to later become rich, which they ultimately did). Universities and nonprofits brought you the laser, light-emitting diodes, fiber optics, penicillin and the polio vaccine. Governments brought you liquid-fuel rockets, the Internet, GPS, and the microchip. Corporations brought you, uh… Viagra, the Snuggie, and Furbies. Indeed, even Google’s vaunted search algorithms were originally developed at Stanford with NSF funding. I can think of literally zero examples of a world-changing technology that was actually invented by a corporation in order to secure a patent. I’m hesitant to say that none exist, but clearly the vast majority of seminal inventions have been created by governments and universities.

This has always been true throughout history. Rome’s fire departments were notorious for shoddy service (and wholly privately owned), but its great aqueducts, which still stand today, were built as government projects. When China invented paper, turned it into paper money, and defended its borders with the Great Wall, it was all done on government funding.

The whole idea that patents are necessary for innovation is simply a lie; and even the idea that patents lead to more innovation is quite hard to defend. Imagine if, instead of letting Google and Facebook patent their technology, all the money they receive in patent rents were turned into tax-funded research. Frankly, is there even any doubt that the results would be better for the future of humanity? Instead of better ad-targeting algorithms we could have had better cancer treatments, or better macroeconomic models, or better spacecraft engines.

When they feel their “intellectual property” (stop and think about that phrase for a while, and it will begin to seem nonsensical) has been violated, corporations become indignant about “free-riding”; but who is really free-riding here? Is it the people who copy music albums for free because they cost nothing to copy, or the corporations who make hundreds of billions of dollars selling zero-marginal-cost products using government-invented technology over government-funded infrastructure? (Many of these companies also continue to receive tens or hundreds of millions of dollars in subsidies every year.) In the immortal words of Barack Obama, “you didn’t build that!”

Strangely, most economists seem to be supportive of patents, despite the fact that their own neoclassical models point strongly in the opposite direction. There’s no logical connection between the fixed cost of inventing a technology and the monopoly rents that can be extracted from its patent. There is some connection, albeit a very weak one, between the benefits of the technology and its monopoly profits, since people are likely to be willing to pay more for more beneficial products. But most of the really great benefits are either in the form of public goods that are unenforceable even with patents (go ahead, try enforcing a patent on that satellite telescope against everyone who benefits from its astronomical discoveries!) or else go to people who are so needy they can’t possibly pay you (like the beneficiaries of anti-malaria drugs in Africa), so that willingness-to-pay link really doesn’t get you very far.

I guess a lot of neoclassical economists still seem to believe that willingness-to-pay is actually a good measure of utility, so maybe that’s what’s going on here; if it were, we could at least say that patents are a second-best solution to incentivizing the most important research.

But even then, why use second-best when you have best? Why not devote more of our society’s resources to governments and universities, which have a centuries-long track record of superior innovation? When this is proposed, the deadweight loss of taxation is always brought up, but somehow the deadweight loss of monopoly rents never seems to bother anyone. At least taxes can be designed to minimize deadweight loss, and democratic governments actually have incentives to do that; corporations have no interest whatsoever in minimizing the deadweight loss they create so long as their profit is maximized.
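The deadweight loss of a patent monopoly can be sketched with the textbook case of linear demand. This is a standard simplification, not an estimate of any real market: inverse demand `P = a - b*Q`, a constant marginal cost `c` of producing each unit, and the values a = 100, b = 1, c = 20, which are chosen purely for illustration. The sketch compares a competitive market, where price falls to marginal cost, with a patent holder who restricts output to the profit-maximizing level.

```python
from dataclasses import dataclass


@dataclass
class MarketOutcome:
    price: float
    quantity: float
    consumer_surplus: float
    producer_rent: float
    deadweight_loss: float


def linear_market(a, b, c, monopoly):
    """Outcome under inverse demand P = a - b*Q with constant marginal cost c.

    No R&D or other fixed cost enters the calculation: in this model the rent
    a monopolist can extract depends only on demand and marginal cost.
    """
    competitive_q = (a - c) / b            # price competed down to marginal cost
    if monopoly:
        quantity = (a - c) / (2 * b)       # marginal revenue a - 2bQ set equal to c
    else:
        quantity = competitive_q
    price = a - b * quantity
    consumer_surplus = 0.5 * (a - price) * quantity
    producer_rent = (price - c) * quantity
    # Trades worth more to buyers than they cost to make, which no longer happen.
    deadweight_loss = 0.5 * (price - c) * (competitive_q - quantity)
    return MarketOutcome(price, quantity, consumer_surplus, producer_rent, deadweight_loss)


competitive = linear_market(100, 1, 20, monopoly=False)
patented = linear_market(100, 1, 20, monopoly=True)
print(competitive)
print(patented)
```

With these illustrative numbers, the monopolist halves output (40 units instead of 80) and charges 60 instead of 20. It collects a rent of 1,600, while 800 of surplus simply vanishes: no one receives it. The function has no parameter for what the invention cost to develop, which mirrors the earlier point that fixed costs and monopoly rents are not logically connected.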

I’m not saying we shouldn’t have corporations at all—they are very good at one thing and one thing only, and that is manufacturing physical goods. Cars and computers should continue to be made by corporations—but their technologies are best invented by government. Will this dramatically reduce the profits of corporations? Of course—but I have difficulty seeing that as anything but a good thing.

Why am I talking so much about patents, when I said the topic was robots? Well, it’s typically because of the way these patents are assigned that robots taking people’s jobs becomes a bad thing. The patent is owned by the company, which is owned by the shareholders; so when the company makes more money by using robots instead of workers, the workers lose.

If when a robot takes your job, you simply received the income produced by the robot as capital income, you’d probably be better off—you get paid more and you also don’t have to work. (Of course, if you define yourself by your career or can’t stand the idea of getting “handouts”, you might still be unhappy losing your job even though you still get paid for it.)

There’s a subtler problem here, though: robots could have a comparative advantage without having an absolute advantage. That is, they could produce less than the workers did before, but at a much lower cost. Where it cost $5 million in wages to produce $10 million in products (a $5 million margin), it might cost only $3 million in robot maintenance to produce $9 million in products (a $6 million margin). Hence you can’t just say that we should give the extra profits to the workers; in some cases those extra profits only exist because we are no longer paying the workers.
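The arithmetic of that example is worth spelling out. The sketch below simply restates the figures from the paragraph, in millions of dollars; the `profit_if_wages_kept` entry is an illustrative addition of mine, showing what would be left if the firm paid the old wage bill on top of robot maintenance.

```python
def automation_ledger(wages, worker_output, upkeep, robot_output):
    """Compare a firm's margin before and after replacing workers with robots."""
    profit_before = worker_output - wages
    profit_after = robot_output - upkeep
    return {
        "lost_output": worker_output - robot_output,
        "profit_before": profit_before,
        "profit_after": profit_after,
        "extra_profit": profit_after - profit_before,
        # Illustrative addition: the margin left if the firm also kept paying
        # the displaced workers their old wages.
        "profit_if_wages_kept": robot_output - upkeep - wages,
    }


# Figures from the example above, in millions of dollars.
ledger = automation_ledger(wages=5, worker_output=10, upkeep=3, robot_output=9)
for item, value in ledger.items():
    print(f"{item}: ${value}M")
```

Output falls by $1 million while profit rises by $1 million, from $5 million to $6 million. That extra $1 million is small next to the $5 million the workers used to earn, and if the firm kept paying those wages, the switch would cut its profit to $1 million.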

As a society, we still want those transactions to happen, because producing less at lower cost can still make our economy more efficient and more productive than it was before. Those displaced workers can—in theory at least—go on to other jobs where they are needed more.

The problem is that this often doesn’t happen, or it takes such a long time that workers suffer in the meantime. Hence the Luddites: they didn’t want to be made obsolete, even if it did ultimately make the economy more productive.

But this is where patents become important. The robots were probably invented at a university, but then a corporation took them and patented them, and is now selling them to other corporations at a monopoly price. The manufacturing company that buys the robots now has to spend more in order to use the robots, which drives their profits down unless they stop paying their workers.

If instead those robots were cheap because there were no patents and we were only paying for the manufacturing costs, the workers could be shareholders in the company and the increased efficiency would allow both the employers and the workers to make more money than before.

What if we don’t want to make the workers into shareholders who can keep their shares after they leave the company? There is a real downside here, which is that once you get your shares, why stay at the company? We call that a “golden parachute” when CEOs do it, which they do all the time; but most economists are in favor of stock-based compensation for CEOs, and once again I’m having trouble seeing why it’s okay when rich people do it but not when middle-class people do.

Another alternative would be my favorite policy, the basic income: If everyone knows they can depend on a basic income, losing your job to a robot isn’t such a terrible outcome. If the basic income is designed to grow with the economy, then the increased efficiency also raises everyone’s standard of living, as economic growth is supposed to do—instead of simply increasing the income of the top 0.01% and leaving everyone else where they were. (There is a good reason not to make the basic income track economic growth too closely, namely the business cycle; you don’t want the basic income payments to fall in a recession, because that would make the recession worse. Instead they should be smoothed out over multiple years or designed to follow a nominal GDP target, so that they continue to rise even in a recession.)
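Both smoothing rules from that parenthetical can be sketched in a few lines. Everything here is hypothetical: the $12,000 base payment, the three-year averaging window, the 4% target growth rate, the made-up GDP series, and the "ratchet" that keeps the smoothed payment from ever falling are illustrative assumptions, not features of any actual proposal.

```python
def smoothed_basic_income(base_payment, nominal_gdp, window=3):
    """Index the payment to a trailing average of nominal GDP.

    Hypothetical ratchet: the payment never falls below last year's, so a
    recession can slow its growth but never cut it.
    """
    payments = []
    for year in range(len(nominal_gdp)):
        recent = nominal_gdp[max(0, year - window + 1): year + 1]
        indexed = base_payment * (sum(recent) / len(recent)) / nominal_gdp[0]
        payments.append(max(indexed, payments[-1]) if payments else indexed)
    return payments


def ngdp_target_basic_income(base_payment, target_growth, years):
    """Grow the payment along a fixed nominal-GDP target path, ignoring actual GDP."""
    return [base_payment * (1 + target_growth) ** year for year in range(years)]


# Hypothetical nominal GDP index with a recession in year 3.
gdp = [100, 104, 108, 103, 106, 111]
unsmoothed = [12_000 * g / gdp[0] for g in gdp]   # tracks each year's GDP directly
smoothed = smoothed_basic_income(12_000, gdp)
targeted = ngdp_target_basic_income(12_000, 0.04, len(gdp))
for year in range(len(gdp)):
    print(year, round(unsmoothed[year]), round(smoothed[year]), round(targeted[year]))
```

In this made-up series, tying the payment directly to each year's GDP would cut it from 12,960 to 12,360 in the recession year. The three-year average instead keeps it rising, from 12,480 to 12,600, and the target path raises it by 4% every year regardless of what actual GDP does.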

We could also combine this with expanded unemployment insurance (explain to me again why you can’t collect unemployment if you weren’t working full-time before being laid off, even if you wanted to be or you’re a full-time student?) and active labor market policies that help people re-train and find new and better jobs. These policies also help people who are displaced for reasons other than robots making their jobs obsolete—obviously there are all sorts of market conditions that can lead to people losing their jobs, and many of these we actually want to happen, because they involve reallocating the resources of our society to more efficient ends.

Why aren’t these sorts of policies on the table? I think it’s largely because we don’t think of it in terms of distributing goods—we think of it in terms of paying for labor. Since the worker is no longer laboring, why pay them?

This sounds reasonable at first, but consider this: Why give that money to the shareholder? What did they do to earn it? All they do is own a piece of the company. They may not have contributed to the goods at all. Honestly, on a pay-for-work basis, we should be paying the robot!

If it bothers you that the worker collects dividends even when he’s not working—why doesn’t it bother you that shareholders do exactly the same thing? By definition, a shareholder is paid according to what they own, not what they do. All this reform would do is make workers into owners.

If you justify the shareholder’s wealth by his past labor, again you can do exactly the same to justify worker shares. (And as I said above, if you’re worried about the moral hazard of workers collecting shares and leaving, you should worry just as much about golden parachutes.)

You can even justify a basic income this way: You paid taxes so that you could live in a society that would protect you from losing your livelihood, and if you’re just starting out, your parents paid those taxes and you will soon enough. Theoretically there could be “welfare queens” who live their whole lives on the basic income, but empirical data shows that very few people actually want to do this, and when given opportunities most people try to find work. Indeed, even those who don’t rarely seem to be motivated by greed (even though, capitalists tell us, “greed is good”); instead they seem to be de-motivated by learned helplessness after trying and failing for so long. They don’t actually want to sit on the couch all day and collect welfare payments; they simply don’t see how they can compete in the modern economy well enough to actually make a living from work.

One thing is certain: We need to detach income from labor. As a society we need to get over the idea that a human being’s worth is decided by the amount of work they do for corporations. We need to get over the idea that our purpose in life is a job, a career, in which our lives are defined by the work we do that can be neatly monetized. (I admit, I suffer from the same cultural blindness at times, feeling like a failure because I can’t secure the high-paying and prestigious employment I want. I feel this clear sense that my society does not value me because I am not making money, and it damages my ability to value myself.)

As robots do more and more of our work, we will need to define our lives by something else, like play, or creativity, or love, or compassion. We will need to learn to see ourselves as valuable even if nothing we do ever sells for a penny to anyone else.

A basic income can help us do that; it can redefine our sense of what it means to earn money. Instead of the default being that you receive nothing because you are worthless unless you work, the default is that you receive enough to live on because you are a human being of dignity and a citizen. This is already the experience of people who have substantial amounts of capital income; they can fall back on their dividends if they ever can’t or don’t want to find employment. A basic income would turn us all into capital owners, shareholders in the centuries of established capital that has been built by our forebears in the form of roads, schools, factories, research labs, cars, airplanes, satellites, and yes—robots.