Nov 20, JDN 2457713

If there’s one message we can take from the election of Donald Trump, it is that bigotry remains a powerful force in our society. A lot of self-flagellating liberals have been trying to explain how this election result really reflects our failure to help people displaced by technology and globalization (despite the fact that personal income and local unemployment had negligible correlation with voting for Trump), or Hillary Clinton’s “bad campaign”, which nonetheless achieved the same level of Democratic turnout that re-elected her husband in 1996.

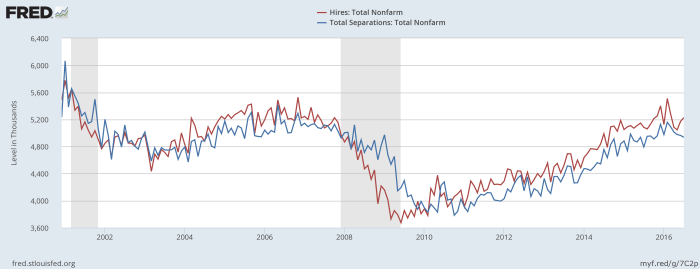

No, overwhelmingly, the strongest predictor of voting for Trump was being White, and living in an area where most people are White. (Well, actually, that’s true only if you exclude authoritarianism as an explanatory variable; but really, I think authoritarianism is part of what we’re trying to explain.) Trump voters were actually concentrated in areas less affected by immigration and globalization. Indeed, there is evidence that these people aren’t racist because they have anxiety about the economy; they are anxious about the economy because they are racist. How does that work? Obama. They can’t believe that the economy is doing well when a Black man is in charge. So all the statistics and even personal experiences mean nothing to them. They know in their hearts that unemployment is rising, even as Bureau of Labor Statistics data clearly shows it’s falling.

The wide prevalence and enormous power of bigotry should be obvious. But economists rarely talk about it, and I think I know why: Their models say it shouldn’t exist. The free market is supposed to automatically eliminate all forms of bigotry, because they are inefficient.

The argument for why this is supposed to happen actually makes a great deal of sense: If a company has the choice of hiring a White man or a Black woman to do the same job, knows that the market wage for Black women is lower than the market wage for White men (which it most certainly is), and expects both to do the same quality and quantity of work, why wouldn’t it hire the Black woman? And indeed, if human beings were rational profit-maximizers, this is probably how they would think.

More recently, some neoclassical models have been developed to try to “explain” this behavior, but always without daring to give up the precious assumption of perfect rationality. So instead we get the two leading neoclassical theories of discrimination: statistical discrimination and taste-based discrimination.

Statistical discrimination is the idea that under asymmetric information (and we surely have that), features such as race and gender can act as signals of quality because they are correlated with actual quality for various reasons (usually left unspecified), so it is not irrational after all to choose based upon them, since they’re the best you have.

Taste-based discrimination is the idea that people are rationally maximizing preferences that simply aren’t oriented toward maximizing profit or well-being. Instead, they have this extra term in their utility function that says they should also treat White men better than women or Black people. It’s just this extra thing they have.
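In symbols, here is a minimal stylized sketch of that “extra term”, loosely in the spirit of Gary Becker’s “discrimination coefficient” (the additive form and the notation are my own simplification, not Becker’s exact specification). An employer hiring $N_W$ White men at wage $w_W$ and $N_B$ Black women at wage $w_B$, with output $f$ assumed to depend only on the total amount of equally productive work, maximizes not profit alone but

$$
U = \underbrace{f(N_W + N_B) - w_W N_W - w_B N_B}_{\text{profit } \pi} \; - \; d\,N_B, \qquad d > 0.
$$

The employer behaves as if each Black woman cost $w_B + d$ rather than $w_B$, so she is hired over an equally productive White man only when $w_B + d < w_W$. Any wage gap smaller than $d$ is simply never arbitraged away, and the model “explains” that by assuming $d$ exists.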

A small number of studies have been done trying to discern which of these is at work.

The correct answer, of course, is neither.

Statistical discrimination, at least, could be part of what’s going on. Knowing that Black people are less likely to be highly educated than Asians (as they definitely are) might actually be useful information in some circumstances… then again, you list your degree on your resume, don’t you? Knowing that women are more likely to drop out of the workforce after having a child could rationally (if coldly) affect your assessment of future productivity. But shouldn’t the fact that women CEOs outperform men CEOs be incentivizing corporate boards to appoint women CEOs? Yet that doesn’t seem to happen. Also, in general, people seem to be pretty bad at statistics.
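The resume point can be made concrete with a toy calculation. The population shares below are entirely hypothetical, and the sketch assumes productivity depends on education alone. Under that assumption, group membership does predict productivity when it is the only thing the employer sees, but once the degree is on the resume, group adds nothing.

```python
from fractions import Fraction

# Hypothetical population shares for each (group, education) cell; they sum to 1.
# These numbers are invented purely for illustration.
POPULATION = {
    ("A", "degree"): Fraction(30, 100),
    ("A", "no_degree"): Fraction(20, 100),
    ("B", "degree"): Fraction(10, 100),
    ("B", "no_degree"): Fraction(40, 100),
}

# Key assumption: productivity depends on education alone, never on group.
PRODUCTIVITY = {"degree": 10, "no_degree": 6}


def expected_productivity(group=None, education=None):
    """Average productivity among workers matching whatever traits are observed.

    Passing None for a trait means the employer does not observe it.
    """
    matching = {
        (g, e): share
        for (g, e), share in POPULATION.items()
        if (group is None or g == group) and (education is None or e == education)
    }
    total_share = sum(matching.values())
    weighted = sum(share * PRODUCTIVITY[e] for (_, e), share in matching.items())
    return weighted / total_share


if __name__ == "__main__":
    # Employer sees only group: group looks like a useful signal.
    print(expected_productivity(group="A"), expected_productivity(group="B"))
    # Employer also reads the resume: group no longer changes the estimate.
    for edu in ("degree", "no_degree"):
        print(edu, expected_productivity("A", edu), expected_productivity("B", edu))
```

Run as a script, it prints 42/5 and 34/5 (8.4 versus 6.8) when only group is known, but identical estimates (10 and 10, then 6 and 6) once education is observed. Exact fractions are used so the equality is exact rather than approximate.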

The bigger problem with statistical discrimination as a theory is that it’s really only part of a theory. It explains why not all of the discrimination has to be irrational, but some of it still does. You need to explain why there are these huge disparities between groups in the first place, and statistical discrimination is unable to do that. In order for the statistics to differ this much, you need a past history of discrimination that wasn’t purely statistical.

Taste-based discrimination, on the other hand, is not a theory at all. It’s special pleading. Rather than admit that people are failing to rationally maximize their utility, we just redefine their utility so that whatever they happen to be doing now “maximizes” it.

This is really what makes the Axiom of Revealed Preference so insidious; if you really take it seriously, it says that whatever you do must, by definition, be what you preferred. You can’t possibly be irrational, you can’t possibly be making mistakes of judgment, because by definition whatever you did must be what you wanted. Maybe you enjoy bashing your head into a wall; who am I to judge?

I mean, on some level taste-based discrimination is what’s happening; people think that the world is a better place if they put women and Black people in their place. So in that sense, they are trying to “maximize” some “utility function”. (By the way, most human beings behave in ways that are provably inconsistent with maximizing any well-defined utility function—the Allais Paradox is a classic example.) But the whole framework of calling it “taste-based” is a way of running away from the real explanation. If it’s just “taste”, well, it’s an unexplainable brute fact of the universe, and we just need to accept it. If people are happier being racist, what can you do, eh?
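To see what “provably inconsistent” means, consider the standard textbook version of the Allais choices. In the first, option A pays \$1 million for certain, while option B pays \$1 million with 89% probability, \$5 million with 10%, and nothing with 1%. In the second, option C pays \$1 million with 11% probability and nothing otherwise, while option D pays \$5 million with 10% probability and nothing otherwise. Many people choose A and D. Writing $u(x)$ for the utility of $x$ million dollars, expected-utility maximization would require

$$
\begin{aligned}
A \succ B &\iff u(1) > 0.89\,u(1) + 0.10\,u(5) + 0.01\,u(0) &&\iff 0.11\,u(1) > 0.10\,u(5) + 0.01\,u(0),\\
D \succ C &\iff 0.10\,u(5) + 0.90\,u(0) > 0.11\,u(1) + 0.89\,u(0) &&\iff 0.10\,u(5) + 0.01\,u(0) > 0.11\,u(1).
\end{aligned}
$$

The two right-hand inequalities contradict each other, so no utility function $u$ over outcomes can make that pair of choices expected-utility maximizing.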

So I think it’s high time to start calling it what it is. This is not a question of taste. This is a question of tribal instinct. This is the product of millions of years of evolution optimizing the human brain to act in the perceived interest of whatever it defines as its “tribe”. It could be yourself, your family, your village, your town, your religion, your nation, your race, your gender, or even the whole of humanity or beyond into all sentient beings. But whatever it is, the fundamental tribe is the one thing you care most about. It is what you would sacrifice anything else for.

And what we learned on November 9 this year is that an awful lot of Americans define their tribe in very narrow terms. Nationalistic and xenophobic at best, racist and misogynistic at worst.

But I suppose this really isn’t so surprising, if you look at the history of our nation and the world. The Supreme Court did not rule segregation in US public schools unconstitutional until 1954, and there are women who voted in this election who were born before American women got the right to vote in 1920. The nationalistic backlash against sending jobs to China (which was one of the chief ways that we reduced global poverty to its lowest level ever, by the way) really shouldn’t seem so strange when we remember that over 100,000 Japanese-Americans were literally forcibly relocated into camps as recently as 1942. The fact that so many White Americans seem all right with the biases against Black people in our justice system may not seem so strange when we recall that systemic lynching of Black people in the US didn’t end until the 1960s.

The wonder, in fact, is that we have made as much progress as we have. Tribal instinct is not a strange aberration of human behavior; it is our evolutionary default setting.

Indeed, perhaps it is unreasonable of me to ask humanity to change its ways so fast! We had millions of years to learn how to live the wrong way, and I’m giving you only a few centuries to learn the right way?

The problem, of course, is that the pace of technological change leaves us with no choice. It might be better if we could wait a thousand years for people to gradually adjust to globalization and become cosmopolitan; but climate change won’t wait a hundred, and nuclear weapons won’t wait at all. We are thrust into a world that is changing very fast indeed, and I understand that it is hard to keep up; but there is no way to turn back that tide of change.

Yet “turn back the tide” does seem to be part of the core message of the Trump voter, once you get past the racial slurs and sexist slogans. People are afraid of what the world is becoming. They feel that it is leaving them behind. Coal miners fret that we are leaving them behind by cutting coal consumption. Factory workers fear that we are leaving them behind by moving the factory to China or inventing robots to do the work in half the time for half the price.

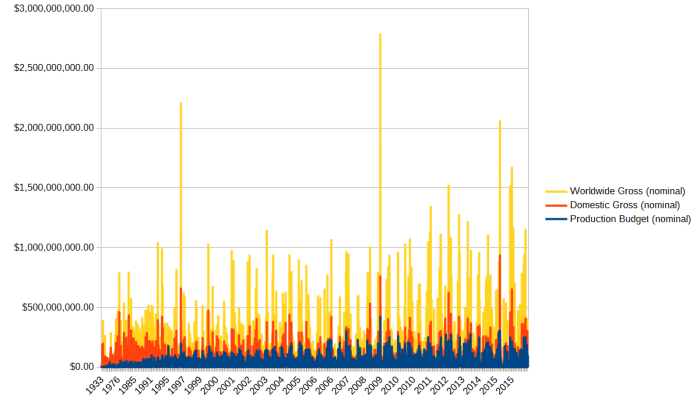

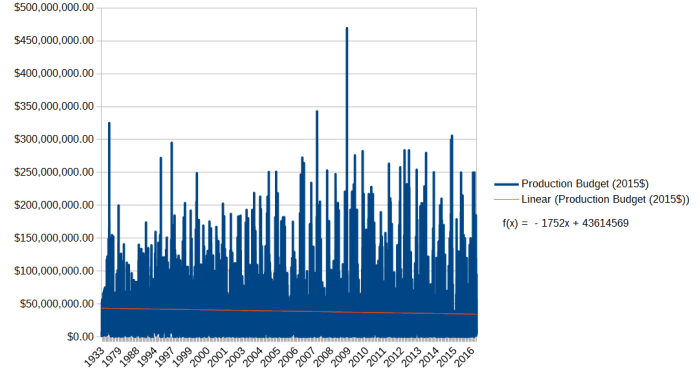

And truth be told, they are not wrong about this. We are leaving them behind. Because we have to. Because coal is polluting our air and destroying our climate, we must stop using it. Moving the factories to China has lifted millions of Chinese workers out of the most dire poverty, and given us a fighting chance toward ending world hunger. Inventing the robots is only the next logical step in the process that has carried humanity forward from the squalor and suffering of primitive life to the security and prosperity of modern society, and it is a step we must take, for the progress of civilization is not yet complete.

They wouldn’t have to be left behind if they were willing to accept our help and learn to adapt. That carbon tax that closes your coal mine could also pay for your basic income and your job-matching program. The increased efficiency from the automated factories could provide an abundance of wealth that we could redistribute and share with you.

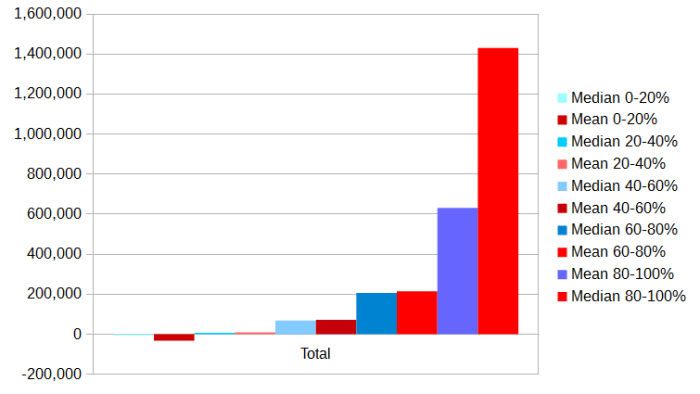

But this would require them to rethink their view of the world. They would have to accept that climate change is a real threat, and not a hoax created by… uh… never was clear on that point actually… the Chinese maybe? But 45% of Trump supporters don’t believe in climate change (and that’s actually not as bad as I’d have thought). They would have to accept that what they call “socialism” (which really is more precisely described as social democracy, or tax-and-transfer redistribution of wealth) is actually something they themselves need, and will need even more in the future. But despite rising inequality, redistribution of wealth remains fairly unpopular in the US, especially among Republicans.

Above all, it would require them to redefine their tribe, and start listening to—and valuing the lives of—people that they currently do not.

Perhaps we need to redefine our tribe as well; many liberals have argued that we mistakenly, and dangerously, did not include people like Trump voters in our tribe. But to be honest, that rings a little hollow to me: We aren’t the ones threatening to deport people or ban them from entering our borders. We aren’t the ones who want to build a wall (though some have in fact joked about building a wall to separate the West Coast from the rest of the country, I don’t think many people really want to do that). Perhaps we live in a bubble of liberal media? But I make a point of reading outlets like The American Conservative and National Review for other perspectives (I usually disagree, but I do at least read them); how many Trump voters do you think have ever read the New York Times, let alone the Huffington Post? Cosmopolitans almost by definition have the more inclusive tribe, the more open perspective on the world (in fact, do I even need the “almost”?).

Nor do I think we are actually ignoring their interests. We want to help them. We offer to help them. In fact, I want to give these people free money—that’s what a basic income would do, it would take money from people like me and give it to people like them—and they won’t let us, because that’s “socialism”! Rather, we are simply refusing to accept their offered solutions, because those so-called “solutions” are beyond unworkable; they are absurd, immoral and insane. We can’t bring back the coal mining jobs, unless we want Florida underwater in 50 years. We can’t reinstate the trade tariffs, unless we want millions of people in China to starve. We can’t tear down all the robots and force factories to use manual labor, unless we want to trigger a national—and then global—economic collapse. We can’t do it their way. So we’re trying to offer them another way, a better way, and they’re refusing to take it. So who here is ignoring the concerns of whom?

Of course, the fact that it’s really their fault doesn’t solve the problem. We do need to take it upon ourselves to do whatever we can, because, regardless of whose fault it is, the world will still suffer if we fail. And that presents us with our most difficult task of all, a task that I fully expect to spend a career trying to do and yet still probably failing: We must understand the human tribal instinct well enough that we can finally begin to change it. We must know enough about how human beings form their mental tribes that we can actually begin to shift those parameters. We must, in other words, cure bigotry—and we must do it now, for we are running out of time.