Jul 20 JDN 2460877

It was a minor epiphany for me when I learned, over the course of studying economics, that price-matching policies, while they seem like they benefit consumers, actually are a brilliant strategy for maintaining tacit collusion.

Consider a (Bertrand) market, with some small number n of firms in it.

Each firm announces a price, and then customers buy from whichever firm charges the lowest price. Firms can produce as much as they need to in order to meet this demand. (This makes the most sense for a service industry rather than for literal manufactured goods.)

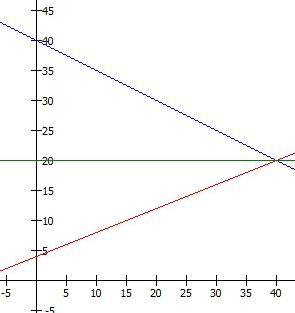

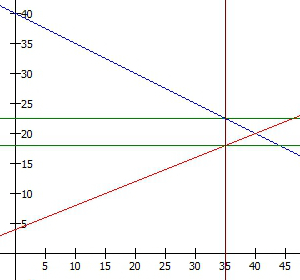

In Nash equilibrium, all firms will charge the same price, because anyone who charged more would sell nothing. But what will that price be?

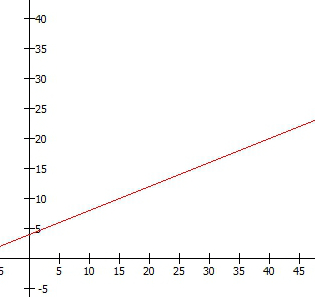

In the absence of price-matching, it will be just above the marginal cost of the service. If the price were any higher, it would be advantageous to undercut all the other firms by charging slightly less, since you would capture the whole market and still make a profit. So the equilibrium price is basically the same as it would be in a perfectly competitive market.
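To make the undercutting argument concrete, here is a minimal sketch that checks every symmetric price on a grid for a profitable one-firm deviation. Everything in it is an illustrative assumption rather than part of the model above: prices are whole cents, the market is normalized to one unit of demand, and the function names, the marginal cost of 100, and the price ceiling of 200 are all made up for the example.

```python
from fractions import Fraction

def bertrand_profit(own, rival, n, cost):
    """Profit of one firm charging `own` while the other n-1 firms charge `rival`.

    Assumes n >= 2 and total demand normalized to 1 (hypothetical setup).
    """
    if own < rival:
        share = Fraction(1)        # the undercutter takes the whole market
    elif own == rival:
        share = Fraction(1, n)     # equal prices split the market evenly
    else:
        share = Fraction(0)        # a higher price sells nothing
    return (own - cost) * share

def symmetric_equilibria(n, cost, max_price):
    """Prices p where no single firm gains by deviating to another grid price."""
    grid = range(cost, max_price + 1)
    equilibria = []
    for p in grid:
        baseline = bertrand_profit(p, p, n, cost)
        if all(bertrand_profit(d, p, n, cost) <= baseline for d in grid):
            equilibria.append(p)
    return equilibria

# Three firms, marginal cost 100 cents, prices allowed up to 200 cents.
print(symmetric_equilibria(n=3, cost=100, max_price=200))  # [100, 101]
```

With these made-up numbers, the only prices that survive are marginal cost itself and one cent above it. At anything higher, shaving a cent off the price wins the whole market and beats sharing it.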

But now consider what happens if the firms can announce a price-matching policy.

If you were already planning on buying from firm 1 at price P1, and firm 2 announces that you can buy from them at some lower price P2, then you still have no reason to switch to firm 2, because you can still get price P2 from firm 1 as long as you show them the ad from the other firm. Under the very reasonable assumption that switching firms carries some cost (if nothing else, the effort of driving to a different store), people won’t switch—which means that any undercut strategy will fail.

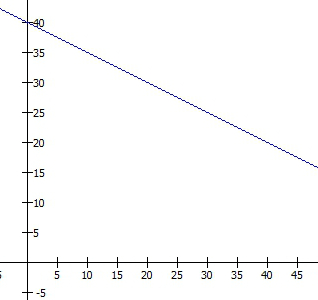

Now, firms don’t need to set such low prices! They can set a much higher price, confident that if any other firm tries to undercut them, it won’t actually work, and thus no one will try to undercut them. The new Nash equilibrium is for the firms to charge the monopoly price.
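The same kind of deviation check can be sketched for the price-matching case. This is again a toy version under stated assumptions: every firm matches the lowest posted price, no customer switches firms, total demand is normalized to 1, and the function names, the marginal cost of 100 cents, and the price ceiling of 200 cents (standing in for the monopoly price) are hypothetical.

```python
from fractions import Fraction

def matched_profit(own, rival, n, cost):
    """Profit of one firm posting `own` while the other n-1 firms post `rival`,
    when every firm honours the lowest posted price and nobody switches.
    """
    effective_price = min(own, rival)   # customers pay the lowest posted price
    return (effective_price - cost) * Fraction(1, n)

def symmetric_equilibria_with_matching(n, cost, max_price):
    """Prices p where no single firm gains by posting a different grid price."""
    grid = range(cost, max_price + 1)
    return [
        p for p in grid
        if all(matched_profit(d, p, n, cost) <= matched_profit(p, p, n, cost)
               for d in grid)
    ]

eq = symmetric_equilibria_with_matching(n=3, cost=100, max_price=200)
print(eq[0], eq[-1], len(eq))  # 100 200 101
```

In this sketch an undercut only lowers the price everyone receives without winning any customers, so no deviation is ever profitable. Every price on the grid passes the check, including the top one, which is the price the firms would most like to coordinate on.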

In the real world, it’s a bit more complicated than that; for various reasons they may not actually be able to sustain collusion at the monopoly price. But there is considerable evidence that price-matching schemes do allow firms to charge a higher price than they would in perfect competition. (Though the literature is not completely unanimous; there are a few who argue that price-matching doesn’t actually facilitate collusion—but they are a distinct minority.)

Thus, a policy that on its face seems like it’s helping consumers by giving them lower prices actually ends up hurting them by giving them higher prices.

Now I want to turn things around and consider the labor market.

What would price-matching look like in the labor market?

It would mean that whenever you are offered a higher wage at a different firm, you can point this out to the firm you are currently working at, and they will offer you a raise to that new wage, to keep you from leaving.

That sounds like a thing that happens a lot.

Indeed, pretty much the best way to get a raise, almost anywhere you may happen to work, is to show your employer that you have a better offer elsewhere. It’s not the only way to get a raise, and it doesn’t always work—but it’s by far the most reliable way, because it usually works.

This for me was another minor epiphany:

The entire labor market is full of tacit collusion.

The very fact that firms can afford to give you a raise when you have an offer elsewhere basically proves that they weren’t previously paying you all that you were worth. If they had actually been paying you your value of marginal product as they should in a competitive labor market, then when you showed them a better offer, they would say: “Sorry, I can’t afford to pay you any more; good luck in your new job!”

This is not a monopoly price but a monopsony price (or at least something closer to it); people are being systematically underpaid so that their employers can make higher profits.

And since the phenomenon of wage-matching is so ubiquitous, it looks like this is happening just about everywhere.

This simple model doesn’t tell us how much higher wages would be in perfect competition. It could be a small difference, or a large one. (It likely varies by industry, in fact.) But the simple fact that nearly every employer engages in wage-matching implies that nearly every employer is in fact colluding on the labor market.

This also helps explain another phenomenon that has sometimes puzzled economists: Why doesn’t raising the minimum wage increase unemployment? Well, it absolutely wouldn’t, if all the firms paying minimum wage are colluding in the labor market! And we already knew that most labor markets were shockingly concentrated.

What should be done about this?

Now there we have a thornier problem.

I actually think we could implement a law against price-matching on product and service markets relatively easily, since such policies generally apply to advertised prices.

But a law against wage-matching would be quite tricky indeed. Wages are generally not advertised—a problem unto itself—and we certainly don’t want to ban raises in general.

Maybe what we should actually do is something like this: Offer a cash bonus (refundable tax credit?) to anyone who changes jobs in order to get a higher wage. Make this bonus large enough to offset the costs of switching jobs—which are clearly substantial. Then, the “undercut” (“overcut”?) strategy will become more effective; employers will have an easier time poaching workers from each other, and a harder time sustaining collusive wages.
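The logic of the bonus can be sketched as a simple decision rule for the worker. This is only an illustration under assumptions of my own: the current employer matches the outside offer exactly, the worker compares a single period's pay, and the function name and all the dollar figures are hypothetical.

```python
def worker_switches(outside_offer, matched_wage, switching_cost, bonus=0):
    """True if moving pays strictly more than staying, net of switching costs."""
    gain_from_moving = outside_offer - switching_cost + bonus
    return gain_from_moving > matched_wage

# The current employer matches a 55,000 offer; moving costs the worker 3,000.
print(worker_switches(55_000, 55_000, 3_000))               # False
print(worker_switches(55_000, 55_000, 3_000, bonus=4_000))  # True
```

Without the bonus, a matched offer always keeps the worker in place, because moving costs something and staying costs nothing. Once the bonus exceeds the switching cost, matching is no longer enough, and the current employer has to pay strictly more to retain the worker.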

Businesses would of course hate this policy, and lobby heavily against it. This is precisely the reaction we should expect if they are relying upon collusion to sustain their profits.