Dec 30 JDN 2458483

One thing that endlessly frustrates me (and probably most economists) about the public conversation on economics is the fact that people seem to think “destroying jobs” is bad. Indeed, not simply a downside to be weighed, but a knock-down argument: If something “destroys jobs”, that’s a sufficient reason to oppose it, whether it be a new technology, an environmental regulation, or a trade agreement. So then we tie ourselves up in knots trying to argue that the policy won’t really destroy jobs, or that it will create more than it destroys. But it will destroy jobs, and we don’t actually know how many it will create.

Destroying jobs is good. Destroying jobs is the only way that economic growth ever happens.

I realize I’m probably fighting an uphill battle here, so let me start at the beginning: What do I mean when I say “destroying jobs”? What exactly is a “job”, anyway?

At its most basic level, a job is something that needs to be done. It’s a task that someone wants performed, but is unwilling or unable to perform on their own, and is therefore willing to give up some of what they have in order to get someone else to do it for them.

Capitalism has blinded us to this basic reality. We have become so accustomed to getting the vast majority of our goods via jobs that we come to think of having a job as something intrinsically valuable. It is not. Working at a job is a downside. It is something to be minimized.

There is a kind of work that is valuable: Creative, fulfilling work that you do for the joy of it. This is what we are talking about when we refer to something as a “vocation” or even a “hobby”. Whether it’s building ships in bottles, molding things from polymer clay, or coding video games for your friends, there is a lot of work in the world that has intrinsic value. But these things aren’t jobs. No one will pay you to do these things, nor would they need to; you’ll do them anyway.

The value we get from jobs is actually obtained from goods: Everything from houses to underwear to televisions to antibiotics. The reason you want to have a job is that you want the money from that job to give you access to markets for all the goods that are actually valuable to you.

Jobs are the input—the cost—of producing all of those goods. The more jobs it takes to make a good, the more expensive that good is. This is not a rule-of-thumb statement of what usually or typically occurs. This is the most fundamental definition of cost. The more people you have to pay to do something, the harder it was to do that thing. If you can do it with fewer people (or the same people working with less effort), you should. Money is the approximation; money is the rule-of-thumb. We use money as an accounting mechanism to keep track of how much effort was put into accomplishing something. But what really matters is the “sweat of our laborers, the genius of our scientists, the hopes of our children”.

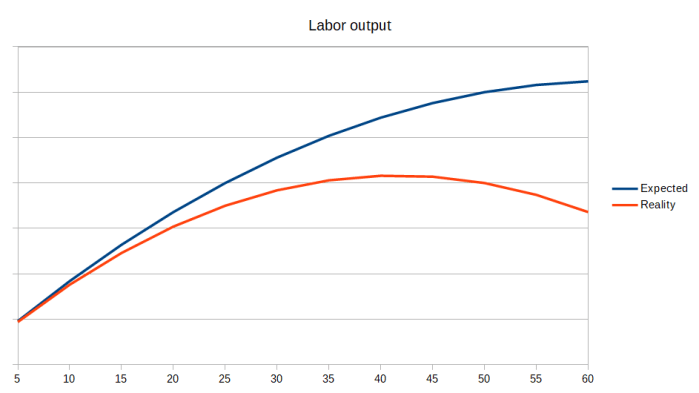

Economic growth means that we produce more goods at less cost.

That is, we produce more goods with fewer jobs.

All new technologies destroy jobs—if they are worth anything at all. The entire purpose of a new technology is to let us do things faster, better, easier—to let us have more things with less work.

This has been true since at least the dawn of the Industrial Revolution.

The Luddites weren’t wrong that automated looms would destroy weaver jobs. They were wrong to think that this was a bad thing. Of course, they weren’t crazy. Their livelihoods were genuinely in jeopardy. And this brings me to what the conversation should be about when we instead waste time talking about “destroying jobs”.

Here’s a slogan for you: Kill the jobs. Save the workers.

We shouldn’t be disappointed to lose a job; we should think of that as an opportunity to give a worker a better life. For however many years, you’ve been toiling to do this thing; well, now it’s done. As a civilization, we have finally accomplished the task that you and so many others set out to do. We have not “replaced you with a machine”; we have built a machine that now frees you from your toil and allows you to do something better with your life. Your purpose in life wasn’t to be a weaver or a coal miner or a steelworker; it was to be a friend and a lover and a parent. You now get more of a chance to do the things that really matter, because you won’t have to spend all your time working some job.

When we replaced weavers with automated looms, plowmen with combine harvesters, computers-the-people with computers-the-machines (a transformation now so complete that most people don’t even seem to know the word used to refer to a person; the award-winning film Hidden Figures is about computers-the-people), and tollbooth operators with automated transponders, all these things meant that the job was now done. For the first time in the history of human civilization, nobody had to do that job anymore. Think of how miserable life is for someone pushing a plow or sitting in a tollbooth for 10 hours a day; aren’t you glad we don’t have to do that anymore (in this country, anyway)?

And if we replace radiologists with AI diagnostic algorithms (we will; it’s probably not even 10 years away), or truckers with automated trucks (we will; I give it 20 years), or cognitive therapists with conversational AI (we might, but I’m more skeptical), or construction workers with building-printers (we probably won’t anytime soon, but it would be nice), the same principle applies: This is something we’ve finally accomplished as a civilization. We can check off the box on our to-do list and move on to the next thing.

But we shouldn’t simply throw away the people who were working on that noble task as if they were garbage. Their job is done—they did it well, and they should be rewarded. Yes, of course, the people responsible for performing the automation should be rewarded: The engineers, programmers, technicians. But also the people who were doing the task in the meantime, making sure that the work got done while those other people were spending all that time getting the machine to work: They should be rewarded too.

Losing your job to a machine should be the best thing that ever happened to you. You should still get to receive most of your income, and also get the chance to find a new job or retire.

How can such a thing be economically feasible? That’s the whole point: The machines are more efficient. We have more stuff now. That’s what economic growth is. So there’s literally no reason we can’t give every single person in the world at least as much wealth as we did before—there is now more wealth.

There’s a subtler argument against this, which is that diverting some of the surplus of automation to the workers who get displaced would reduce the incentives to create automation. This is true, so far as it goes. But you know what else reduces the incentives to create automation? Political opposition. Luddism. Naive populism. Trade protectionism.

Moreover, these forces are clearly more powerful, because they attack the opportunity to innovate: Trade protection can make it illegal to share knowledge with other countries. Luddist policies can make it impossible to automate a factory.

Sharing the wealth, by contrast, would only reduce the incentive to create automation; it would still be possible, simply less lucrative. Instead of making $40 billion, you’d only make $10 billion (you poor thing). I sincerely doubt there is a single human being on Earth with a meaningful contribution to make to humanity who would make that contribution if they were paid $40 billion but not if they were only paid $10 billion.

This is something that could be required by regulation, or negotiated into labor contracts. If your job is eliminated by automation, you are laid off but still paid your full salary for the next year. Then, your salary is converted into shares in the company that are projected to provide at least 50% of your previous salary in dividends, forever. By that time, you should be able to find another job, and as long as it pays at least half of what your old job did, you will be at least as well off as before. Or, you can retire, and live off that 50% plus whatever else you were getting as a pension.
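To make the arithmetic of that proposal concrete, here is a minimal Python sketch of a displaced worker's income from the second year onward. The function names, the example salaries, and the assumption that dividends come in exactly at the 50% floor are all mine, purely for illustration.

```python
def income_after_automation(old_salary, new_salary=0.0, pension=0.0,
                            dividend_share=0.5):
    """Annual income from year 2 onward under the scheme sketched above.

    Assumes (hypothetically) that dividends pay exactly the projected
    floor of `dividend_share` times the old salary.
    """
    dividends = dividend_share * old_salary
    return dividends + new_salary + pension


def at_least_as_well_off(old_salary, new_salary, dividend_share=0.5):
    """True if dividends plus the new job match or beat the old salary."""
    total = income_after_automation(old_salary, new_salary,
                                    dividend_share=dividend_share)
    return total >= old_salary


# Hypothetical worker who earned 60,000 a year before automation.
print(income_after_automation(60_000, new_salary=30_000))  # new job at half pay
print(at_least_as_well_off(60_000, new_salary=30_000))
print(at_least_as_well_off(60_000, new_salary=20_000))
```

With these made-up numbers, a new job at half the old pay brings the worker back to the old income, and anything above that leaves them ahead.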

From the perspective of the employer, this does make automation a bit less attractive: The up-front cost in the first year has been increased by everyone’s salary, and the long-term cost has been increased by all those dividends. Would this reduce the number of jobs that get automated, relative to some imaginary ideal? Sure. But we don’t live in that ideal world anyway; plenty of other obstacles to innovation were in the way, and by solving the political conflict, this will remove as many as it adds. We might actually end up with more automation this way; and even if we don’t, we will certainly end up with less political conflict as well as less wealth and income inequality.
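The employer's side of the same arithmetic can be sketched just as roughly. This Python snippet is a simplification under my own assumptions: it treats the dividends as a cost equal to exactly 50% of the old salary, ignores the cost of the machines themselves, and uses a hypothetical function name and salary.

```python
def labor_cost_saved(old_salary, year, dividend_share=0.5):
    """Labor cost the employer stops paying per displaced worker.

    `year` counts from 1, the first year after the job is automated.
    Assumes (hypothetically) that dividends cost the employer exactly
    `dividend_share` times the old salary and ignores machine costs.
    """
    if year < 1:
        raise ValueError("year must be 1 or greater")
    if year == 1:
        return 0.0  # full salary is still paid during the first year
    return old_salary * (1 - dividend_share)


# Hypothetical 60,000-a-year job: nothing saved in year 1, half saved after.
for year in (1, 2, 3):
    print(year, labor_cost_saved(60_000, year))
```

Without the scheme, the employer would save the full salary every year; with it, automation still pays off from the second year on, only less so.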