JDN 2457437

I often talk about the importance of externalities (a full discussion in this earlier post, and one of their important implications, the tragedy of the commons, in another). Briefly, externalities are consequences of actions imposed upon people who did not perform those actions. Anything I do that affects you, and that you had no say in, is an externality.

Usually I’m talking about how we want to internalize externalities, meaning that we set up a system of incentives to make it so that the consequences fall upon the people who chose the actions instead of anyone else. If you pollute a river, you should have to pay to clean it up. If you assault someone, you should serve jail time as punishment. If you invent a new technology, you should be rewarded for it. These are all attempts to internalize externalities.

But today I’m going to push back a little, and ask whether we really always want to internalize externalities. If you think carefully, it’s not hard to come up with scenarios where it actually seems fairer to leave the externality in place, or perhaps reduce it somewhat without eliminating it.

For example, suppose indeed that someone invents a great new technology. To be specific, let’s think about Jonas Salk, inventing the polio vaccine. This vaccine saved the lives of thousands of people and saved millions more from pain and suffering. Its value to society is enormous, and of course Salk deserved to be rewarded for it.

But we did not actually fully internalize the externality. If we had, every family whose child was saved from polio would have had to pay Jonas Salk an amount equal to what they saved on medical treatments as a result, or even an amount somehow equal to the value of their child’s life (imagine how offended people would get if you asked that on a survey!). Those millions of people spared from suffering would need to each pay, at minimum, thousands of dollars to Jonas Salk, making him of course a billionaire.

And indeed this is more or less what would have happened, if he had been willing and able to enforce a patent on the vaccine. The inability of some to pay for the vaccine at its monopoly prices would add some deadweight loss, but even that could be removed if Salk Industries had found a way to offer targeted price vouchers that let them precisely price-discriminate so that every single customer paid exactly what they could afford to pay. If that had happened, we would have fully internalized the externality and therefore maximized economic efficiency.

But doesn’t that sound awful? Doesn’t it sound much worse than what we actually did, where Jonas Salk received a great deal of funding and support from governments and universities, and lived out his life comfortably upper-middle class as a tenured university professor?

Now, perhaps he should have been awarded a Nobel Prize—I take that back, there’s no “perhaps” about it, he definitely should have been awarded a Nobel Prize in Medicine, it’s absurd that he did not—which means that I at least do feel the externality should have been internalized a bit more than it was. But a Nobel Prize is only 10 million SEK, about $1.1 million. That’s about enough to be independently wealthy and live comfortably for the rest of your life; but it’s a small fraction of the roughly $7 billion he could have gotten if he had patented the vaccine. Yet while the possible world in which he wins a Nobel is better than this one, I’m fairly well convinced that the possible world in which he patents the vaccine and becomes a billionaire is considerably worse.

Internalizing externalities makes sense if your goal is to maximize total surplus (a concept I explain further in the linked post), but total surplus is actually a terrible measure of human welfare.

Total surplus counts every dollar of willingness-to-pay exactly the same across different people, regardless of whether they live on $400 per year or $4 billion.

It also takes no account whatsoever of how wealth is distributed. Suppose a new technology adds $10 billion in wealth to the world. As far as total surplus is concerned, it makes no difference whether that $10 billion is spread evenly across the entire planet, distributed among a city of a million people, concentrated in a small town of 2,000, or even held entirely in the bank account of a single man.

Particularly a propos of the Salk example, total surplus makes no distinction between these two scenarios: a perfectly-competitive market where everything is sold at a fair price, and a perfectly price-discriminating monopoly, where everything is sold at the very highest possible price each person would be willing to pay.

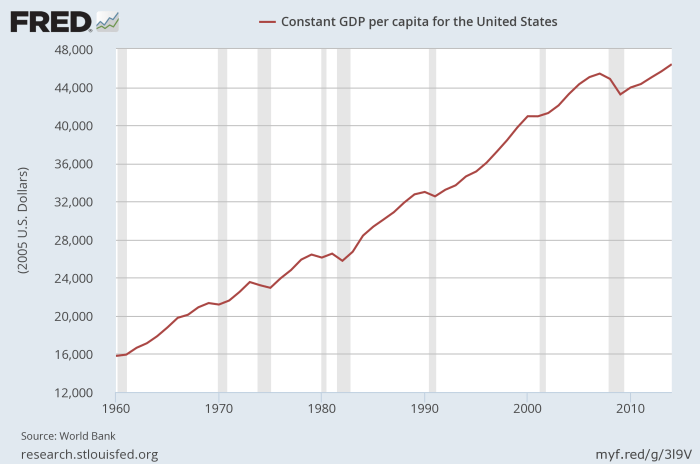

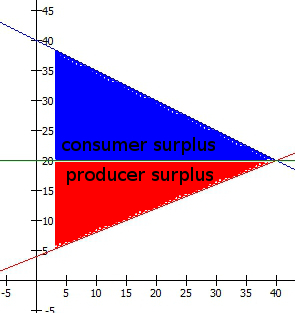

This is a perfectly-competitive market, where the benefits are more or less equally (in this case exactly equally, but that need not be true in real life) between sellers and buyers:

This is a perfectly price-discriminating monopoly, where the benefits accrue entirely to the corporation selling the good:

In the former case, the company profits, consumers are better off, everyone is happy. In the latter case, the company reaps all the benefits and everyone else is left exactly as they were. In real terms those are obviously very different outcomes: the former is what we want, and the latter is the cyberpunk dystopia we seem to be hurtling mercilessly toward. But in terms of total surplus, and therefore the kind of “efficiency” that is maximized by internalizing all externalities, they are indistinguishable.
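To make that concrete, here is a minimal sketch of the two scenarios in a toy market. The linear demand curve (price = 100 - quantity) and supply curve (price = quantity) are illustrative assumptions of mine, not numbers read off the figures; they are chosen only so that the competitive split comes out exactly equal.

```python
# Toy market with ASSUMED curves (not taken from the figures):
#   demand: price = a - quantity   (buyers' willingness-to-pay)
#   supply: price = quantity       (sellers' marginal cost)

def competitive_surplus(a=100.0):
    """Surplus split when every unit sells at one market-clearing price."""
    q = a / 2                      # a - q = q  =>  q = a / 2
    p = a - q                      # market-clearing price
    consumer = 0.5 * q * (a - p)   # triangle between demand curve and price
    producer = 0.5 * q * p         # triangle between price and supply curve
    return consumer, producer

def price_discriminating_surplus(a=100.0):
    """Surplus split when each unit sells at that buyer's full willingness-to-pay."""
    q = a / 2                      # same quantity: sell while WTP >= marginal cost
    producer = 0.5 * q * a         # whole area between demand and supply curves
    consumer = 0.0                 # buyers pay exactly what the good is worth to them
    return consumer, producer

for name, split in [("competitive", competitive_surplus()),
                    ("price-discriminating", price_discriminating_surplus())]:
    consumer, producer = split
    print(f"{name}: consumers {consumer}, producers {producer}, "
          f"total {consumer + producer}")
```

Under these assumed curves the total is 2,500 in both cases; only who gets it changes.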

In fact (as I hope to publish a paper about at some point), because of the way willingness-to-pay works, total surplus weights rich people more. Redistributing goods from the poor to the rich will typically increase total surplus.

Here’s an example. Suppose there is a cake, which is sufficiently delicious that it offers 2 milliQALY in utility to whoever consumes it (this is a truly fabulous cake). Suppose there are two people to whom we might give this cake: Richie, who has $10 million in annual income, and Hungry, who has only $1,000 in annual income. How much will each of them be willing to pay?

Well, assuming logarithmic utility of wealth, so that the marginal utility of a dollar is inversely proportional to income (which is itself probably biasing slightly in favor of the rich), 1 milliQALY is about $1 to Hungry, so Hungry will be willing to pay $2 for the cake. To Richie, however, 1 milliQALY is about $10,000; so he will be willing to pay a whopping $20,000 for this cake.

What this means is that the cake will almost certainly be sold to Richie; and if we proposed a policy to redistribute the cake from Richie to Hungry, economists would emerge to tell us that we have just reduced total surplus by $19,998 and thereby committed a great sin against economic efficiency. They will cajole us into returning the cake to Richie and thus raising total surplus by $19,998 once more.

This despite the fact that I stipulated that the cake is worth just as much in real terms to Hungry as it is to Richie; the difference is due to their wildly differing marginal utility of wealth.

Indeed, it gets worse, because even if we suppose that the cake is worth much more in real utility to Hungry—because he is in fact hungry—it can still easily turn out that Richie’s willingness-to-pay is substantially higher. Suppose that Hungry actually gets 20 milliQALY out of eating the cake, while Richie still only gets 2 milliQALY. Hungry’s willingness-to-pay is now $20, but Richie is still going to end up with the cake.

Now, perhaps your thought is, “Why would Richie pay $20,000, when he can go to another store and get another cake that’s just as good for $20?” Well, he wouldn’t. But in the sense we mean for total surplus, willingness-to-pay isn’t what you’d actually be willing to pay given the actual prices of the goods; it is the absolute maximum price you’d be willing to pay to get that good under any circumstances, which is the marginal utility of the good divided by your marginal utility of wealth. In this sense the cake is “worth” $20,000 to Richie, and “worth” substantially less to Hungry, not because it’s actually worth less to Hungry in real terms, but simply because Richie has so much more money.
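The cake arithmetic can be reproduced with a short sketch. It assumes utility is logarithmic in income, so the marginal utility of a dollar is inversely proportional to income, and it uses the calibration from the example (1 milliQALY is worth about $1 at $1,000 of income). The function name and the first-order approximation (treating the price as small relative to income) are my own simplifications.

```python
# Calibration from the example: 1 milliQALY ~ $1 at $1,000 annual income.
REFERENCE_INCOME = 1_000.0  # dollars per year

def willingness_to_pay(utility_mqaly, income):
    """Marginal utility of the good divided by marginal utility of wealth.

    ASSUMPTION: log utility of income, so marginal utility of a dollar is
    proportional to 1 / income. Its inverse, the dollar value of one
    milliQALY, therefore scales linearly with income.
    """
    dollars_per_mqaly = income / REFERENCE_INCOME
    return utility_mqaly * dollars_per_mqaly

richie = willingness_to_pay(2, 10_000_000)   # same cake, same real benefit
hungry = willingness_to_pay(2, 1_000)
very_hungry = willingness_to_pay(20, 1_000)  # cake worth 10x more in real terms

print(f"Richie: ${richie:,.0f}")
print(f"Hungry: ${hungry:,.0f}")
print(f"Hungry, if he gets 20 milliQALY: ${very_hungry:,.0f}")
print(f"'Lost' total surplus if Hungry gets the cake: ${richie - hungry:,.0f}")
```

Even when the cake is worth ten times as much to Hungry in real terms, his willingness-to-pay remains a thousandth of Richie’s.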

Even economists often equate these two, implicitly assuming that we are spending our money up to the point where our marginal willingness-to-pay is the actual price we choose to pay; but in general our willingness-to-pay is higher than the price if we are willing to buy the good at all. The consumer surplus we get from goods is in fact equal to the difference between willingness-to-pay and actual price paid, summed up over all the goods we have purchased.

Internalizing all externalities would definitely maximize total surplus—but would it actually maximize happiness? Probably not.

If you asked most people what their marginal utility of wealth is, they’d have no idea what you’re talking about. But most people do actually have an intuitive sense that a dollar is worth more to a homeless person than it is to a millionaire, and that’s really all we mean by diminishing marginal utility of wealth.

I think the reason we’re uncomfortable with the idea of Jonas Salk getting $7 billion from selling the polio vaccine, rather than the same number of people getting the polio vaccine and Jonas Salk only getting the $1.1 million from a Nobel Prize, is that we intuitively grasp that after that $1.1 million makes him independently wealthy, the rest of the money is just going to sit in some stock account and continue making even more money, while if we’d let the families keep it they would have put it to much better use raising their children who are now protected from polio. We do want to reward Salk for his great accomplishment, but we don’t see why we should keep throwing cash at him when it could obviously be spent in better ways.

And indeed I think this intuition is correct; great accomplishments—which is to say, large positive externalities—should be rewarded, but not in direct proportion. Maybe there should be some threshold above which we say, “You know what? You’re rich enough now; we can stop giving you money.” Or maybe it should simply damp down very quickly, so that a contribution which is worth $10 billion to the world pays only slightly more than one that is worth $100 million, but a contribution that is worth $100,000 pays considerably more than one which is only worth $10,000.
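One way such a damped schedule could look is sketched below. The saturating functional form, the $2 million cap, and the $1 million half-way point are purely hypothetical choices of mine to illustrate the shape; nothing here is a proposal for the actual numbers.

```python
def damped_reward(social_value, cap=2_000_000.0, half_point=1_000_000.0):
    """Hypothetical reward schedule that saturates toward `cap`.

    For contributions far below `half_point` the reward grows almost in
    proportion to the contribution; for contributions far above it, the
    reward flattens out and extra social value adds almost nothing.
    """
    return cap * social_value / (social_value + half_point)

for value in (10_000, 100_000, 100_000_000, 10_000_000_000):
    print(f"contribution worth ${value:>14,} pays ${damped_reward(value):>12,.0f}")
```

With these made-up parameters, the $100,000 contribution pays roughly nine times what the $10,000 one does, while the $10 billion contribution pays only about 1% more than the $100 million one.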

What it ultimately comes down to is that if we make all the benefits accrue to the person who produced them, there aren’t any benefits anymore. The whole point of Jonas Salk inventing the polio vaccine (or Einstein discovering relativity, or Darwin figuring out natural selection, or any great achievement) is that it will benefit the rest of humanity, preferably on to future generations. If you managed to fully internalize that externality, this would no longer be true; Salk and Einstein and Darwin would have become fabulously wealthy, and then somehow we’d all have to continue paying into their estates or something an amount equal to the benefits we received from their discoveries. (Every time you use your GPS, pay a royalty to the Einsteins. Every time you take a pill, pay a royalty to the Darwins.) At some point we’d probably get fed up and decide we’re no better off with them than without them, which is exactly, by construction, how we should feel if the externality were fully internalized.

Internalizing negative externalities is much less problematic—it’s your mess, clean it up. We don’t want other people to be harmed by your actions, and if we can pull that off that’s fantastic. (In reality, we usually can’t fully internalize negative externalities, but we can at least try.)

But maybe internalizing positive externalities really isn’t so great after all.